Differentiable Memory

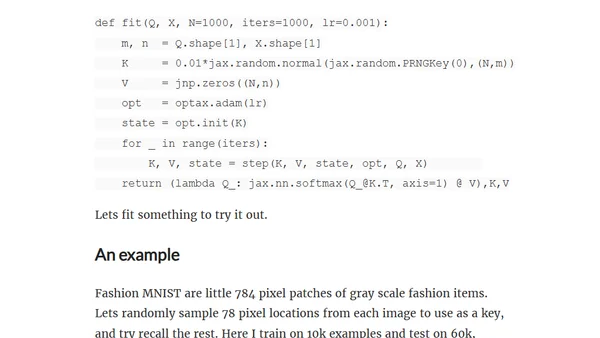

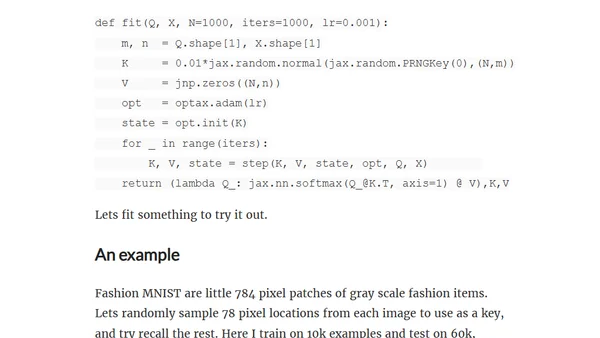

Explores differentiable memory using attention mechanisms and linear algebra, with a practical implementation in JAX/Optax.

A detailed academic history tracing the core ideas behind large language models, from distributed representations to the transformer architecture.

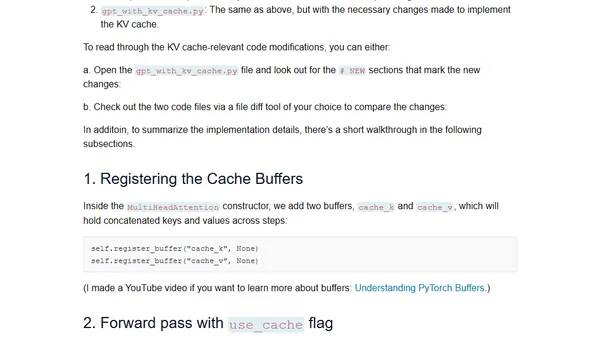

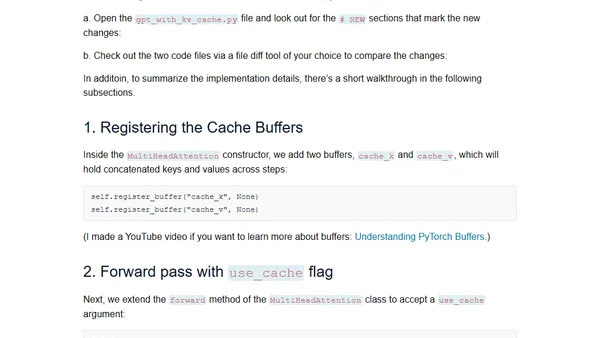

Explains the KV cache technique for efficient LLM inference with a from-scratch code implementation.

A technical tutorial explaining the concept and implementation of KV caches for efficient inference in Large Language Models (LLMs).

A clear explanation of the attention mechanism in Large Language Models, focusing on how words derive meaning from context using vector embeddings.

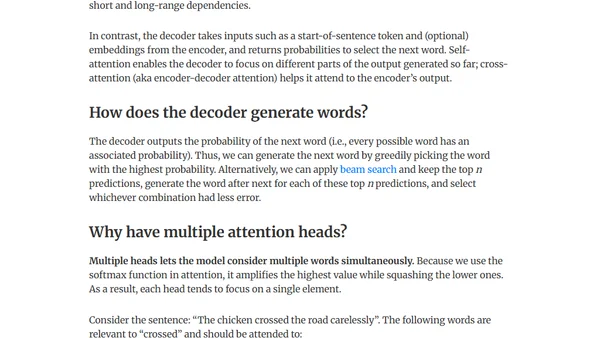

Explains the intuition behind the Attention mechanism and Transformer architecture, focusing on solving issues in machine translation and language modeling.

Announcing the release of the 'transformer' R package on CRAN, implementing a full transformer architecture for AI/ML development.

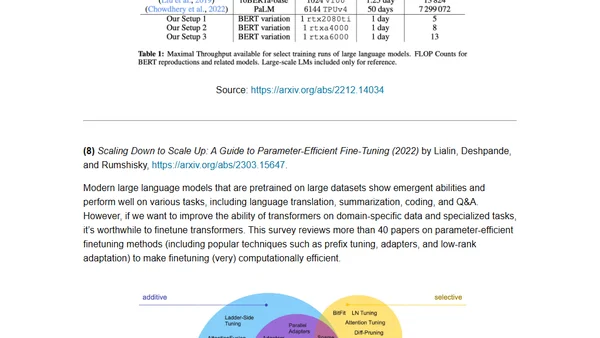

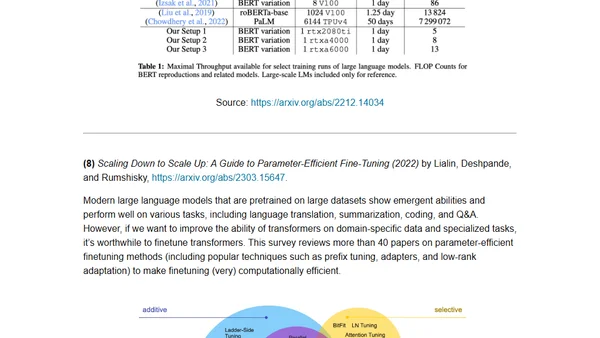

A curated reading list of key academic papers for understanding the development and architecture of large language models and transformers.

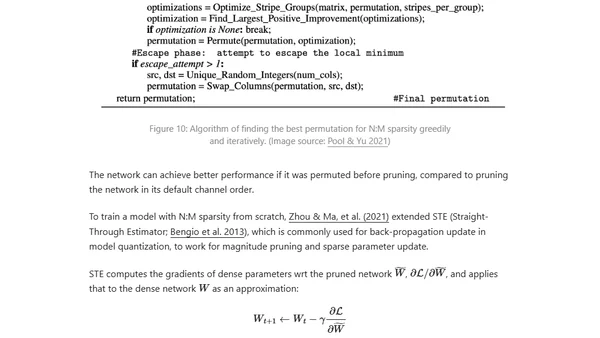

Explores techniques to optimize inference speed and memory usage for large transformer models, including distillation, pruning, and quantization.

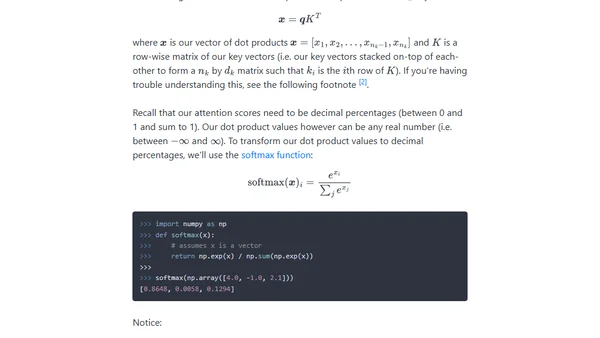

A technical explanation of the attention mechanism in transformers, building intuition from key-value lookups to the scaled dot product equation.

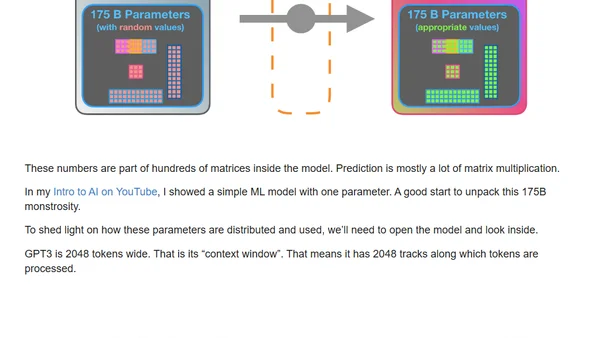

A visual guide explaining how GPT-3 is trained and generates text, breaking down its transformer architecture and massive scale.

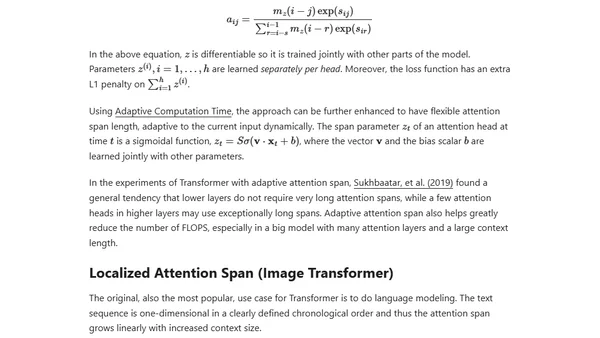

An updated overview of the Transformer model family, covering improvements for longer attention spans, efficiency, and new architectures since 2020.

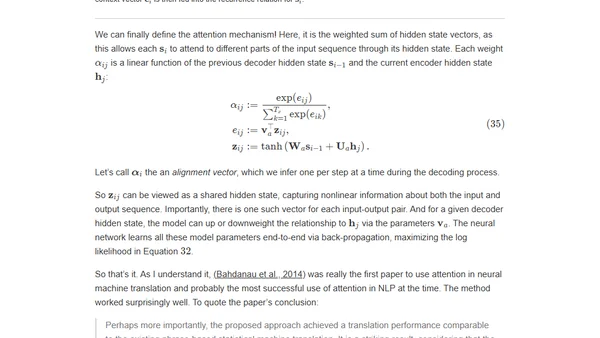

Explains the attention mechanism in deep learning, its motivation from human perception, and its role in improving seq2seq models like Transformers.

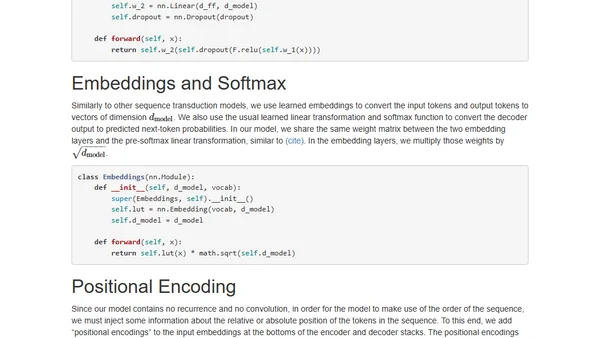

An annotated, line-by-line implementation of the Transformer architecture from 'Attention Is All You Need' in PyTorch.

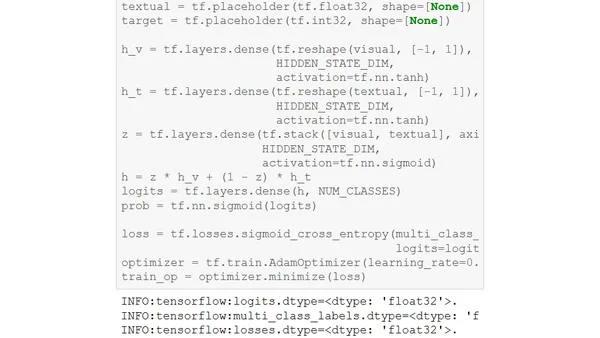

Explains the Gated Multimodal Unit (GMU), a deep learning architecture for intelligently fusing data from different sources like images and text.

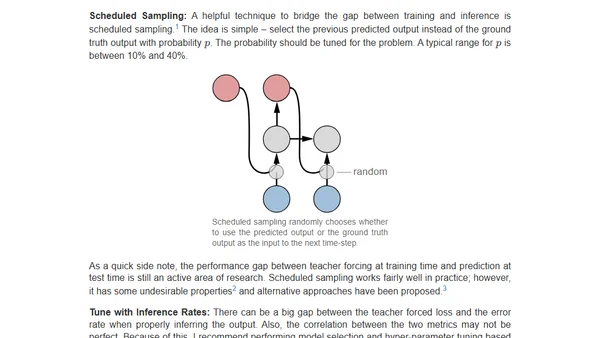

Practical tips for training sequence-to-sequence models with attention, focusing on debugging and ensuring the model learns to condition on input.