Differentiable Memory

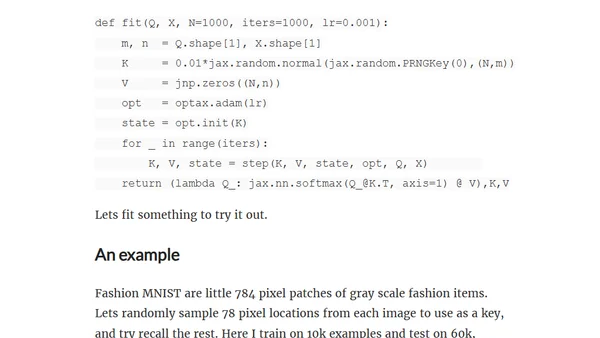

Explores differentiable memory using attention mechanisms and linear algebra, with a practical implementation in JAX/Optax.

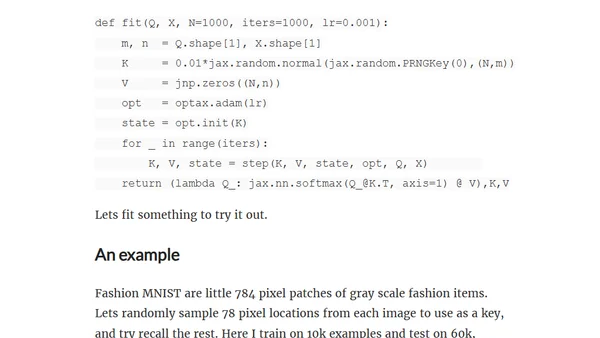

A technical exploration of implementing Laplace approximations using JAX, focusing on sparse autodiff and JAXPR manipulation for efficient gradient computation.

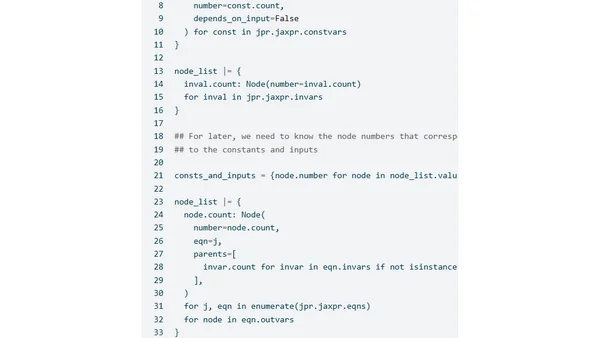

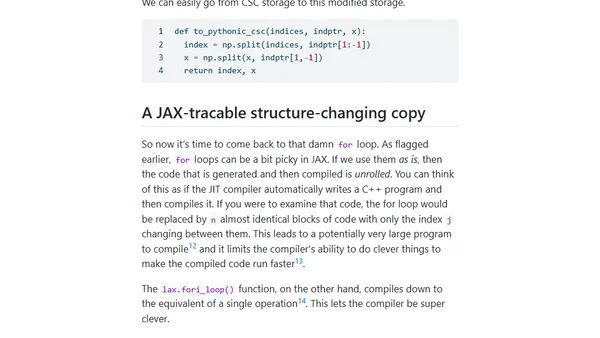

Exploring JAX-compatible sparse Cholesky decomposition, focusing on symbolic factorization and JAX's control flow challenges.

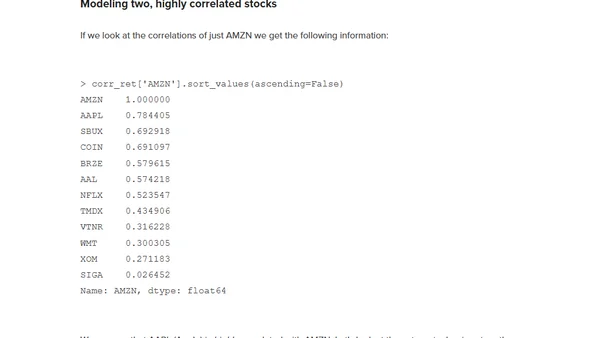

A technical tutorial on applying Modern Portfolio Theory for investment optimization using JAX and differentiable programming.

Explores techniques for flattening and unflattening nested data structures in TensorFlow, JAX, and PyTorch for efficient deep learning model development.

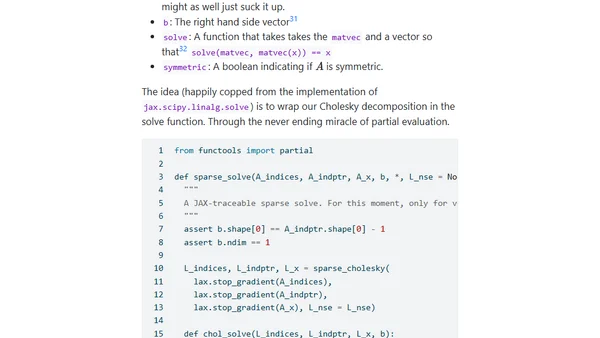

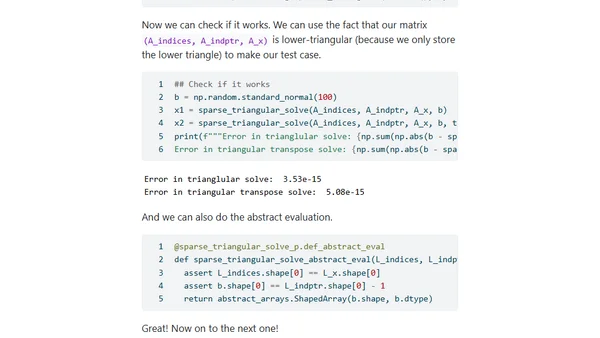

Part six of a series on making sparse linear algebra differentiable in JAX, focusing on implementing Jacobian-vector products for custom primitives.

Part five of a series on implementing differentiable sparse linear algebra in JAX, focusing on registering new JAX-traceable primitives.

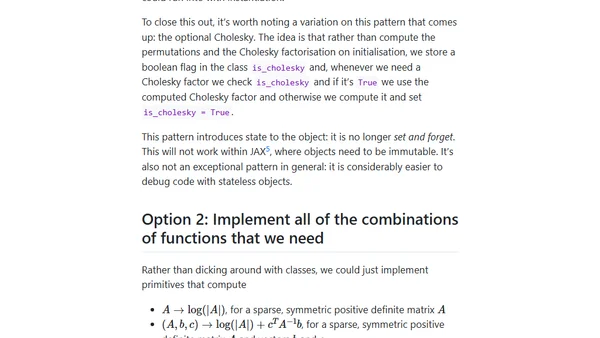

Explores design options for implementing autodifferentiable sparse matrices in JAX to accelerate statistical models, focusing on avoiding redundant computations.

Explores the challenges of integrating sparse Cholesky factorizations with JAX for faster statistical inference in PyMC.

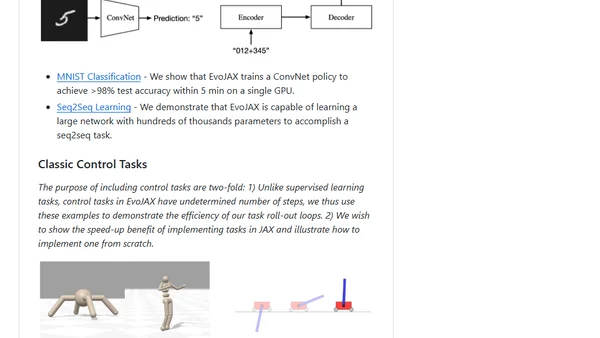

EvoJAX is a hardware-accelerated neuroevolution toolkit built on JAX for running parallel evolution experiments on TPUs/GPUs.

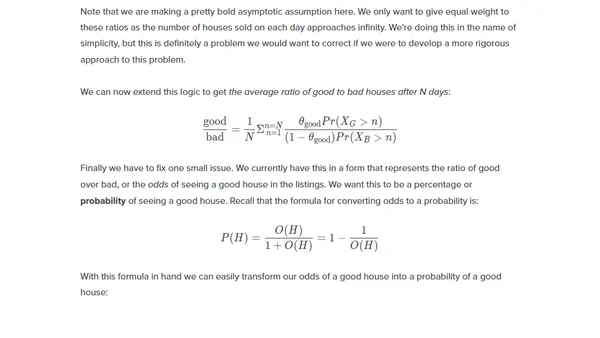

A technical tutorial using Python and JAX to model and correct for survivorship bias in housing market data during the pandemic.