The Transformer Family

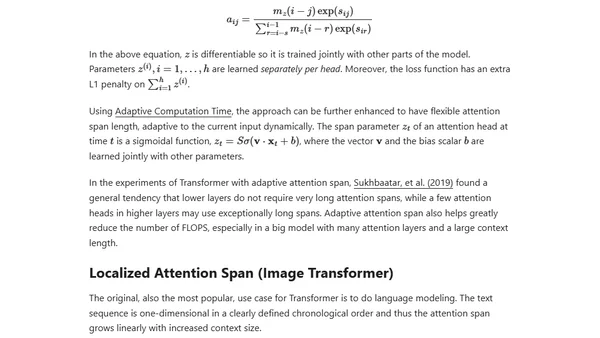

This technical article summarizes advancements in Transformer models, focusing on enhancements to the vanilla architecture for longer-term attention, reduced memory and computation costs, and adaptation to reinforcement learning (RL) tasks. It includes detailed notation and explanations of core concepts such as attention, self-attention, and multi-head attention, serving as a resource for understanding how modern NLP models have evolved.
