Understanding and Coding the KV Cache in LLMs from Scratch

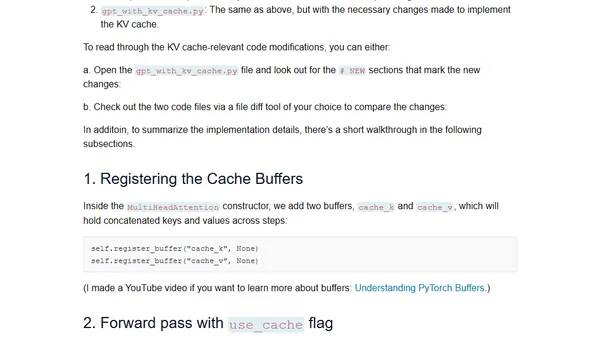

This technical article provides a detailed conceptual and code-based explanation of KV caches, a critical technique for speeding up Large Language Model inference. It covers how KV caches work, their trade-offs in memory and complexity, and includes a human-readable from-scratch implementation to demonstrate the concept in practice.
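To make the idea concrete, here is a minimal sketch of a KV cache for a single attention head, written in plain Python. It is not the article's implementation: the names `KVCache`, `step_with_cache`, and `step_without_cache` are hypothetical, the weights are random, and a real model would use tensors, multiple heads, and multiple layers. The sketch only illustrates the core point that keys and values for earlier tokens can be stored and reused instead of recomputed at every generation step.

```python
import math
import random


def matvec(W, x):
    # Multiply a matrix (list of rows) by a vector.
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def attend(q, keys, values):
    # Scaled dot-product attention of one query over all stored keys/values.
    scale = 1.0 / math.sqrt(len(q))
    scores = [scale * sum(qi * ki for qi, ki in zip(q, k)) for k in keys]
    m = max(scores)  # subtract the max for a numerically stable softmax
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]
    dim = len(values[0])
    return [sum(w * v[j] for w, v in zip(weights, values)) for j in range(dim)]


class KVCache:
    # Hypothetical container: one key and one value vector per past token.
    def __init__(self):
        self.keys = []
        self.values = []

    def append(self, k, v):
        self.keys.append(k)
        self.values.append(v)


def step_with_cache(x_new, Wq, Wk, Wv, cache):
    # Project only the newest token; older keys/values come from the cache.
    q = matvec(Wq, x_new)
    cache.append(matvec(Wk, x_new), matvec(Wv, x_new))
    return attend(q, cache.keys, cache.values)


def step_without_cache(xs, Wq, Wk, Wv):
    # Baseline: re-project every token seen so far at every step.
    keys = [matvec(Wk, x) for x in xs]
    values = [matvec(Wv, x) for x in xs]
    q = matvec(Wq, xs[-1])
    return attend(q, keys, values)


random.seed(0)
d = 4  # toy embedding size (assumption for illustration)


def rand_matrix():
    return [[random.uniform(-1, 1) for _ in range(d)] for _ in range(d)]


Wq, Wk, Wv = rand_matrix(), rand_matrix(), rand_matrix()
tokens = [[random.uniform(-1, 1) for _ in range(d)] for _ in range(5)]

cache = KVCache()
for t in range(len(tokens)):
    cached = step_with_cache(tokens[t], Wq, Wk, Wv, cache)
    full = step_without_cache(tokens[: t + 1], Wq, Wk, Wv)
    # Both paths should produce the same attention output for the new token.
    assert all(math.isclose(a, b, abs_tol=1e-12) for a, b in zip(cached, full))
```

In this sketch the cached path performs one key projection and one value projection per step, while the baseline repeats them for every earlier token. The cost is memory: the cache holds one key and one value vector per token, so it grows linearly with sequence length, which is the memory trade-off the summary mentions.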
