Risks and Limitations of AI in the Life Sciences

An expert discusses the overhyped risks and data limitations of applying AI in life sciences, using examples like AlphaFold.

Explores the balance between model simplicity and precision in system identification for control engineering and machine learning.

Argues for using general state-of-the-art (SOTA) AI models over custom, specialized ones, predicting that cheaper, open-source general models will dominate.

A critique of how quantitative benchmarking and evaluation culture shapes and potentially distorts progress in machine learning research.

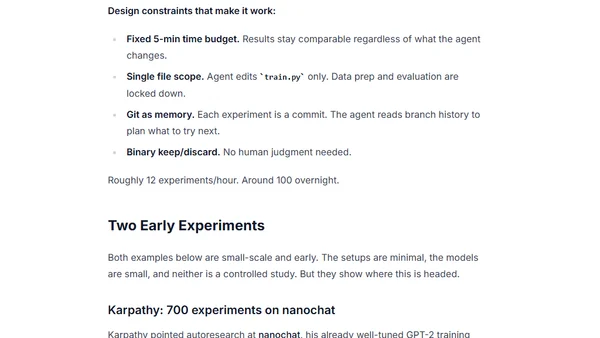

Explores how autonomous AI agents (autoresearch) can optimize small language models by running hundreds of experiments overnight, improving performance without human intervention.

Analyzes the hidden costs and skill erosion of using AI for coding, emphasizing the need for human oversight.

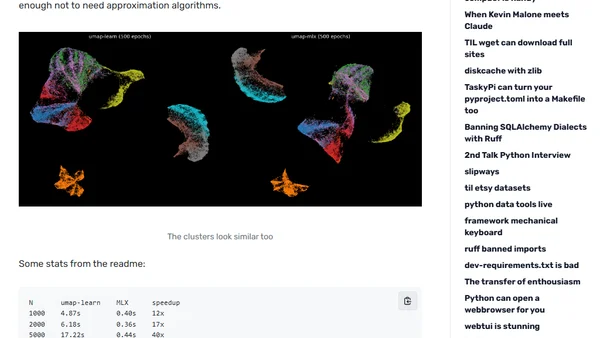

Explores the UMAP-MLX project, which achieves up to 46x speedups for UMAP using Apple's MLX, and presents performance benchmarks.

A skeptic's detailed journey using AI coding agents to port scikit-learn to Rust, showcasing the surprising capabilities of modern LLMs.

A skeptic's detailed journey using AI coding agents for complex projects, including porting scikit-learn to Rust, showcasing their surprising capabilities.

An update on the PRISM project's progress in developing methods for validating spatial patterns in machine learning, covering research from 2025-2026.

Explores the theoretical links between optimization algorithms (like gradient descent) and control theory, analyzing them as dynamical systems.

The author shares their experience and study strategy for passing Microsoft's AI-900 Azure AI Fundamentals certification exam.

A blog post discussing a social network exclusively for AI bots, exploring their interactions and the implications of their sci-fi-influenced conversations.

A blog post discussing a New York Times article about Moltbook, a social network exclusively for AI bots, and the author's insights on AI behavior.

A 4.5-hour interview discussing the state of AI in 2026, covering LLMs, geopolitics, training, open vs. closed models, AGI timelines, and industry implications.

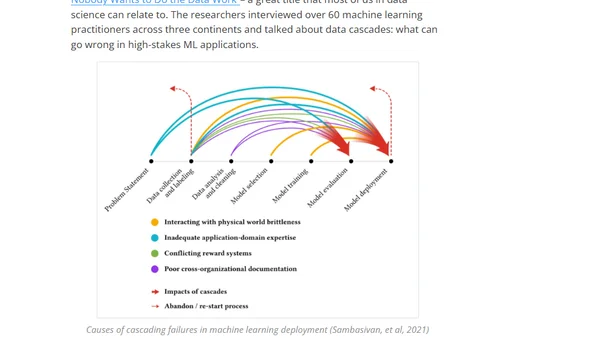

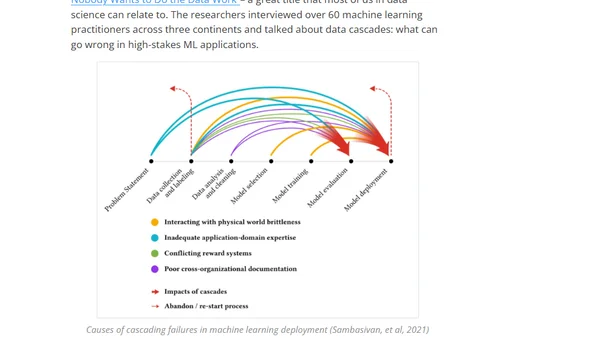

A 2025 AI research review covering tabular machine learning, the societal impacts of AI scale, and open-source data-science tools.

Andrej Karpathy notes a 600x cost reduction in training a GPT-2 level model over 7 years, highlighting rapid efficiency gains in AI.

A reflection on a decade-old blog post about deep learning, examining past predictions on architecture, scaling, and the field's evolution.

Leading AI researchers debate whether current scaling and innovations are sufficient to achieve Artificial General Intelligence (AGI).

Analysis of Microsoft's 2026 Global ML Building Footprints dataset, including technical setup and data exploration using DuckDB and QGIS.