A History of Large Language Models

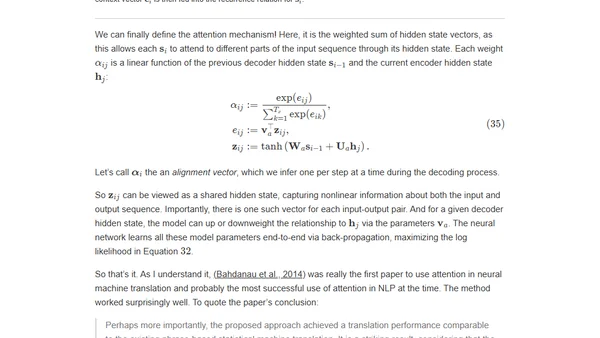

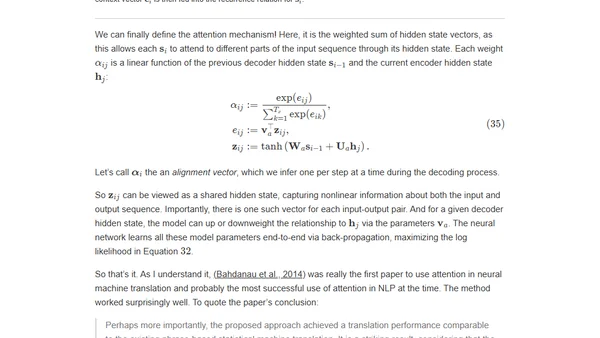

A detailed academic history tracing the core ideas behind large language models, from distributed representations to the transformer architecture.

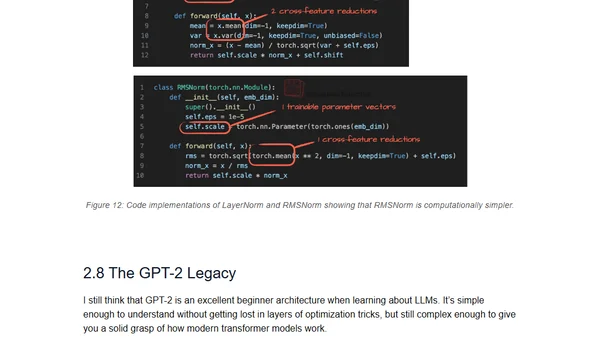

A hands-on tutorial on understanding and implementing the Qwen3 large language model architecture from scratch in pure PyTorch, with explanations of its core components.

Analyzes the architectural advancements in OpenAI's new open-weight gpt-oss models, comparing them to GPT-2 and other modern LLMs.

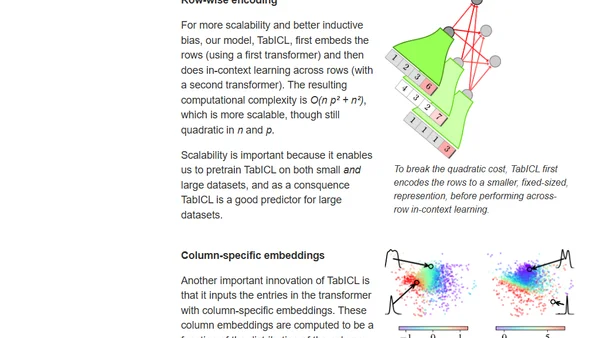

Introduces TabICL, a state-of-the-art tabular foundation model that uses in-context learning and an improved architecture for fast, scalable prediction on tabular data.

An analysis of the ARC Prize AI benchmark, questioning whether human-level intelligence can be achieved solely through deep learning and transformers.

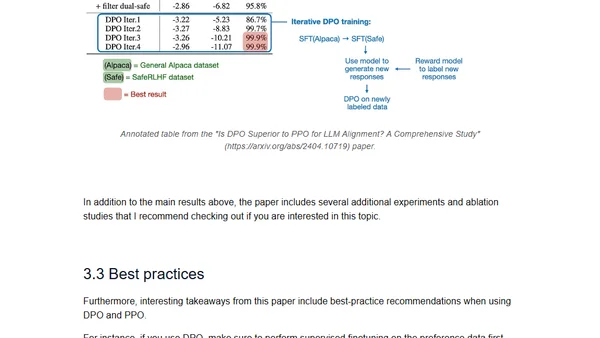

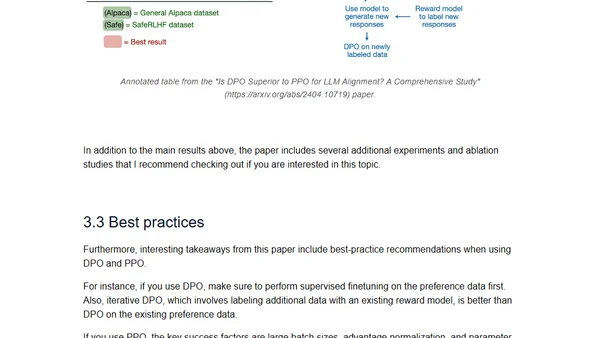

A technical review and comparison of the major open LLM releases of April 2024 (Mixtral, Llama 3, Phi-3, OpenELM), plus a study of DPO vs. PPO for LLM alignment.

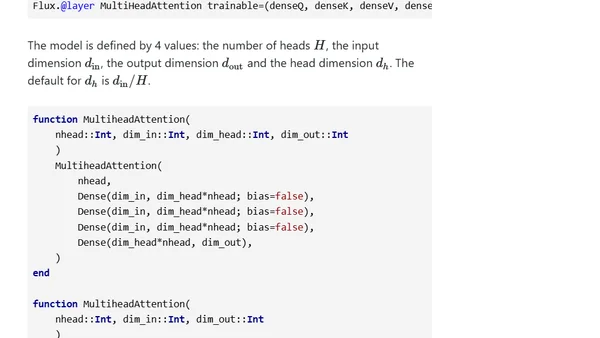

A tutorial on building a generative transformer model from scratch in Julia, trained on Shakespeare to create GPT-like text.

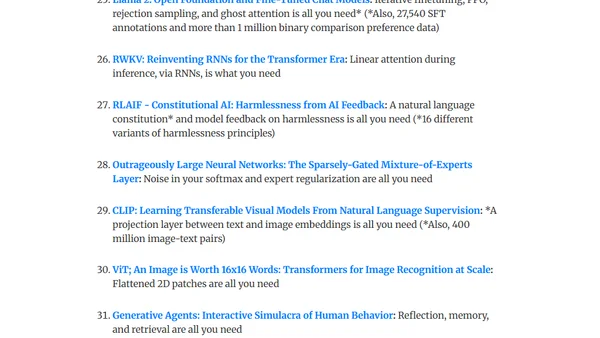

A curated reading list of fundamental language modeling papers with summaries, designed to help start a weekly paper club for learning and discussion.

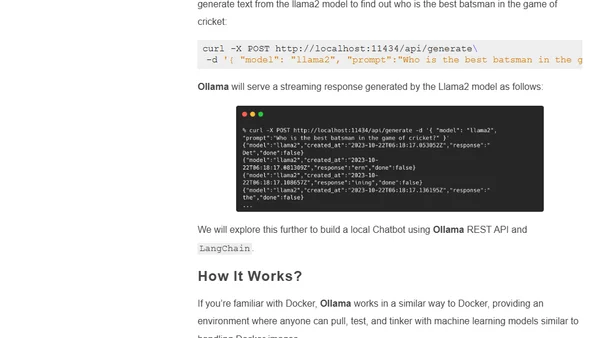

A guide to using Ollama, an open-source CLI tool for running and customizing large language models like Llama 2 locally on your own machine.

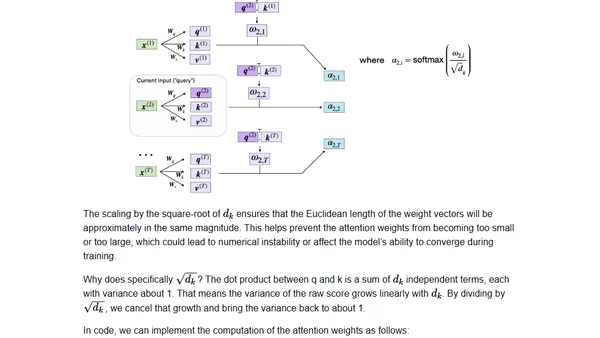

Explains the intuition behind the Attention mechanism and Transformer architecture, focusing on solving issues in machine translation and language modeling.

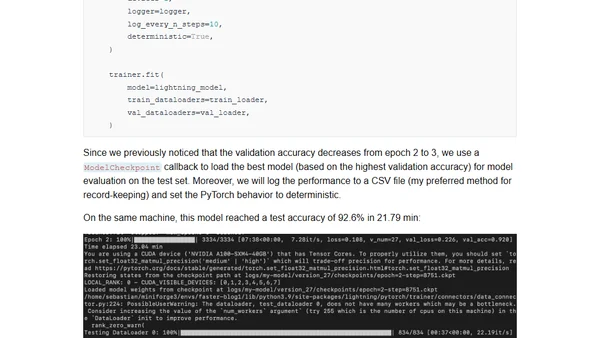

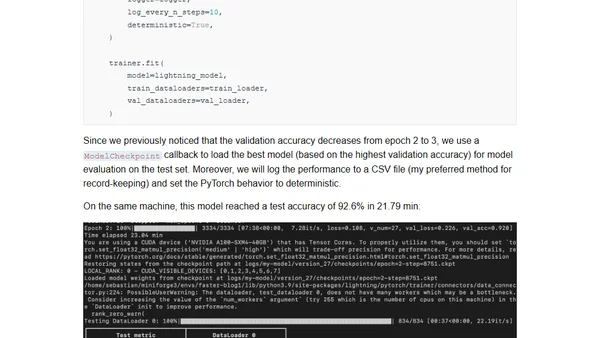

Techniques for speeding up PyTorch model training by 8x with PyTorch Lightning while maintaining accuracy, illustrated with a DistilBERT fine-tuning example.

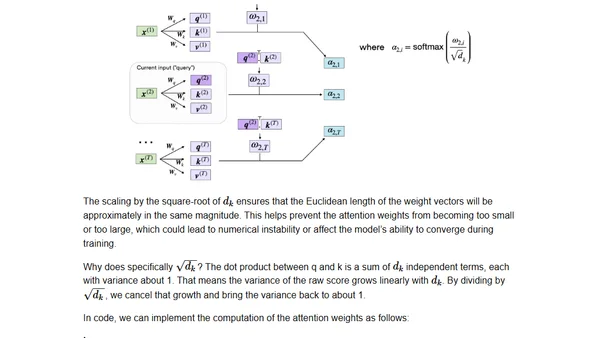

A technical guide to coding the self-attention mechanism from scratch, as used in transformers and large language models.

A technical guide to implementing a GPT model from scratch using only 60 lines of NumPy code, including loading pre-trained GPT-2 weights.

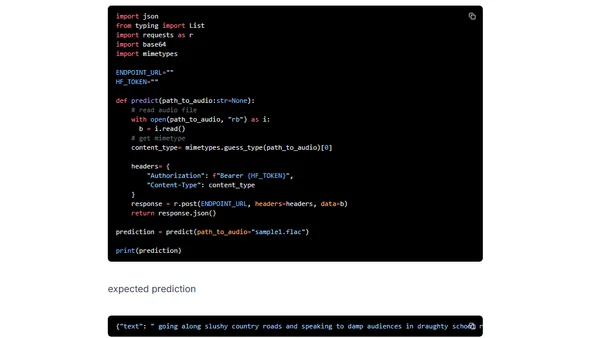

A tutorial on deploying OpenAI's Whisper speech recognition model using Hugging Face Inference Endpoints for scalable transcription APIs.

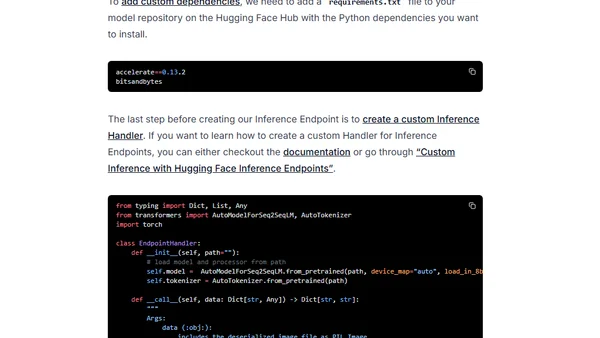

A tutorial on deploying the T5 11B language model for inference using Hugging Face Inference Endpoints on a budget.

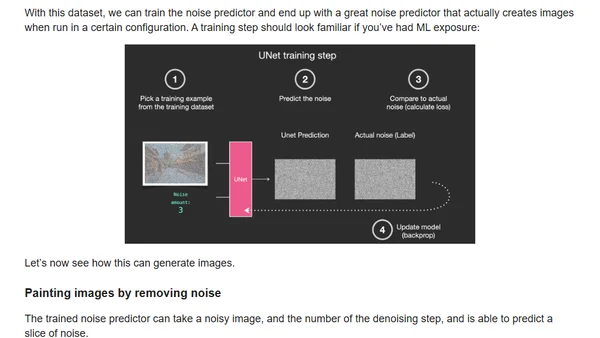

A gentle introduction to how Stable Diffusion works, explaining its components and the process of generating images from text.