Understanding and Coding the KV Cache in LLMs from Scratch

Explains the KV cache technique for efficient LLM inference with a from-scratch code implementation.

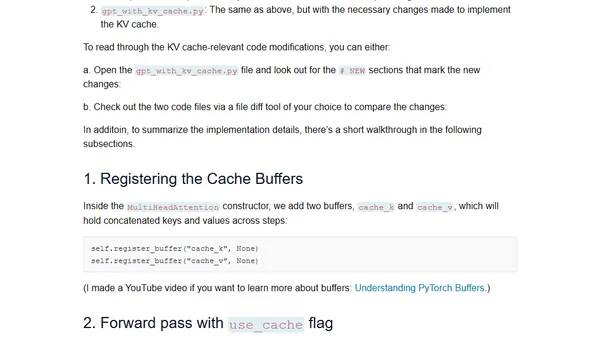

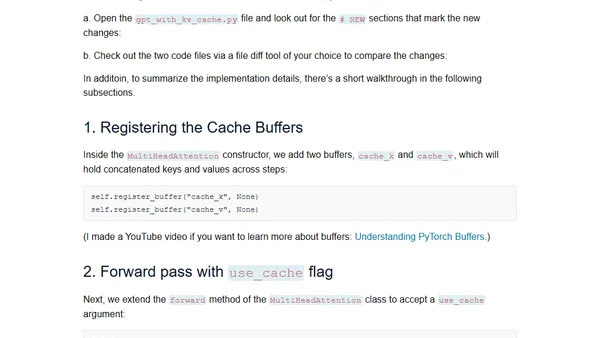

A technical tutorial explaining the concept and implementation of KV caches for efficient inference in Large Language Models (LLMs).

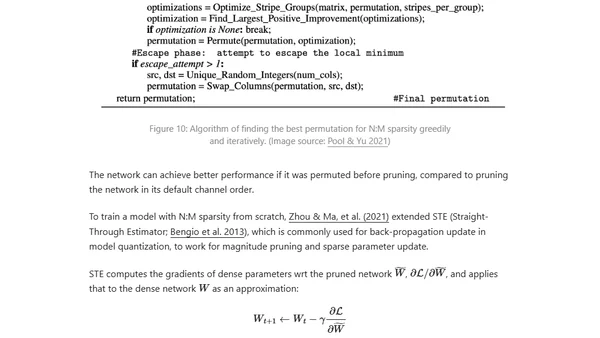

Explores techniques to optimize inference speed and memory usage for large transformer models, including distillation, pruning, and quantization.