Understanding and Coding the KV Cache in LLMs from Scratch

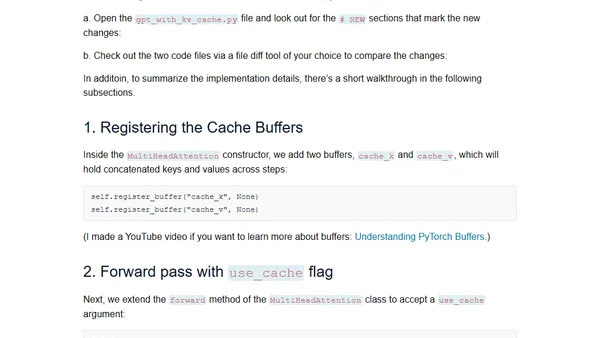

This article provides a detailed, from-scratch explanation of KV (key-value) caches, a critical technique for speeding up text generation in LLMs during inference. It covers the conceptual workings, the trade-offs in memory and complexity, and includes a human-readable code implementation to illustrate the mechanism.
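
To make the mechanism concrete, here is a minimal sketch of the idea, not the article's own implementation. It assumes a single attention head, pure-Python lists instead of tensors, and made-up toy weights (`W_Q`, `W_K`, `W_V`); the `KVCache` class and both step functions are hypothetical names chosen for illustration. It compares recomputing keys and values for the whole prefix at every step against computing them once per token and storing them.

```python
import math

def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def matvec(W, x):
    # W is a list of rows; returns the matrix-vector product W @ x.
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def attend(q, keys, values):
    # Scaled dot-product attention for one query over all given keys/values.
    scale = math.sqrt(len(q))
    scores = [sum(a * b for a, b in zip(q, k)) / scale for k in keys]
    weights = softmax(scores)
    dim = len(values[0])
    return [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]

class KVCache:
    """Hypothetical minimal cache: one key and one value vector per past token."""
    def __init__(self):
        self.keys = []
        self.values = []

    def append(self, k, v):
        self.keys.append(k)
        self.values.append(v)

# Toy projection weights (assumed values, for illustration only).
W_Q = [[1.0, 0.0], [0.0, 1.0]]
W_K = [[0.5, 0.1], [0.2, 0.3]]
W_V = [[0.3, 0.7], [0.9, 0.4]]

def step_without_cache(tokens):
    # Recompute keys and values for every token in the prefix, each step.
    keys = [matvec(W_K, x) for x in tokens]
    values = [matvec(W_V, x) for x in tokens]
    q = matvec(W_Q, tokens[-1])
    return attend(q, keys, values)

def step_with_cache(cache, x):
    # Compute key/value only for the new token; reuse the stored ones.
    cache.append(matvec(W_K, x), matvec(W_V, x))
    q = matvec(W_Q, x)
    return attend(q, cache.keys, cache.values)

tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # toy token embeddings
cache = KVCache()
cached_outputs = [step_with_cache(cache, x) for x in tokens]
uncached_outputs = [step_without_cache(tokens[: t + 1]) for t in range(len(tokens))]
```

Both paths produce the same attention output at every step. The cached path does one key projection and one value projection per new token, while the uncached path redoes them for the whole prefix, at the cost of memory that grows with the number of stored tokens.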
