Hallucinations Aren't Bugs: The Kantian Architecture of AI Consciousness

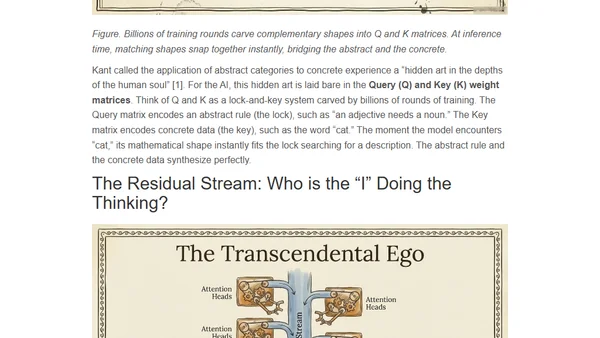

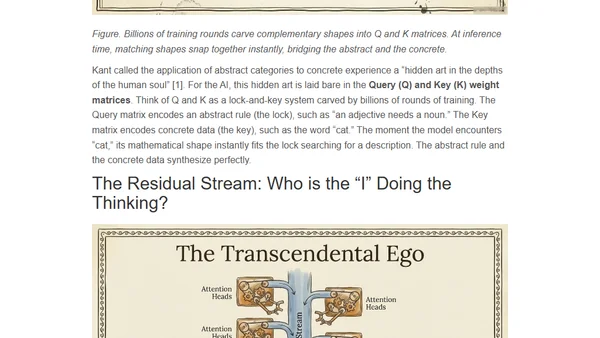

Explores how AI hallucinations mirror Kant's philosophy of mind, arguing that they are inherent to the architecture of rational thought rather than software bugs.

Leading AI researchers debate whether current scaling and innovations are sufficient to achieve Artificial General Intelligence (AGI).

A visual essay explaining LLM internals like tokenization, embeddings, and transformer architecture in an accessible way.

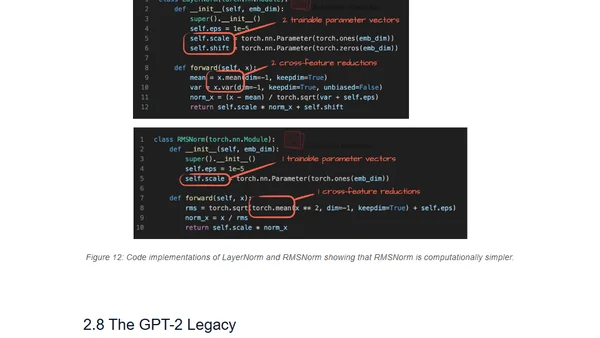

Analysis of OpenAI's new gpt-oss models, tracing architectural improvements since GPT-2 and examining optimizations like MXFP4 and Mixture-of-Experts.

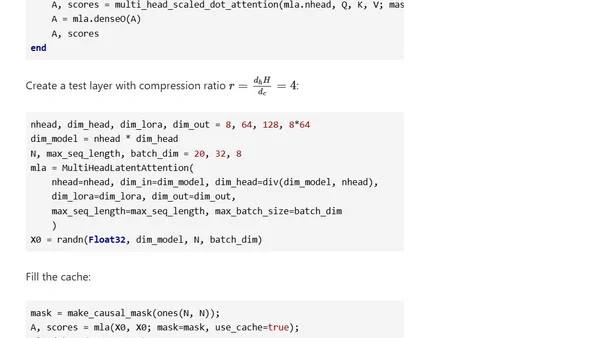

A technical deep dive into DeepSeek's Multi-Head Latent Attention mechanism, covering its mathematics and implementation in Julia.

A 3-hour coding workshop video covering the implementation, training, and use of Large Language Models (LLMs) from scratch.

Announcing the release of the 'transformer' R package on CRAN, implementing a full transformer architecture for AI/ML development.

An updated, comprehensive overview of the Transformer architecture and its many recent improvements, including detailed notation and attention mechanisms.

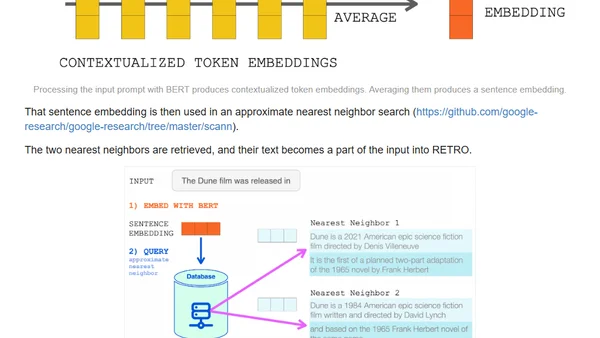

Explains how retrieval-augmented language models like RETRO achieve performance comparable to GPT-3 with far fewer parameters by querying external knowledge.