Notes on ‘AI Engineering’ chapter 9: Inference Optimisation

Summary of key concepts for optimizing AI inference performance, covering bottlenecks, metrics, and deployment patterns from Chip Huyen's book.
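
Two latency metrics commonly used for autoregressive generation are time to first token (TTFT) and time per output token (TPOT). The helper below is a minimal sketch that makes them concrete. It assumes you have recorded the wall-clock time a request was sent and the time each output token arrived. The names `GenerationMetrics` and `compute_metrics` are hypothetical. It defines TPOT as the mean gap between consecutive tokens after the first, so end-to-end latency equals TTFT + TPOT × (n - 1) for n output tokens.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class GenerationMetrics:
    ttft: float               # time to first token, seconds
    tpot: float               # mean gap between tokens after the first, seconds
    total_latency: float      # request start to last token, seconds
    tokens_per_second: float  # output tokens divided by total latency


def compute_metrics(request_start: float, token_times: Sequence[float]) -> GenerationMetrics:
    """Derive latency metrics from the arrival timestamps of each output token.

    Hypothetical helper: TPOT here excludes the first token, so
    total_latency == ttft + tpot * (len(token_times) - 1).
    """
    if not token_times:
        raise ValueError("need at least one output token")
    ttft = token_times[0] - request_start
    total = token_times[-1] - request_start
    if total <= 0:
        raise ValueError("last token must arrive after the request starts")
    n = len(token_times)
    # With a single token there are no inter-token gaps, so report TPOT as 0.
    tpot = (token_times[-1] - token_times[0]) / (n - 1) if n > 1 else 0.0
    return GenerationMetrics(ttft=ttft, tpot=tpot, total_latency=total,
                             tokens_per_second=n / total)


# Example: request sent at t=0 s, four tokens streamed back.
metrics = compute_metrics(0.0, [0.5, 0.75, 1.0, 1.25])
print(metrics)
```

Keeping TTFT separate from TPOT reflects the fact that the first token pays for processing the whole prompt, while later tokens each cost one decoding step.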

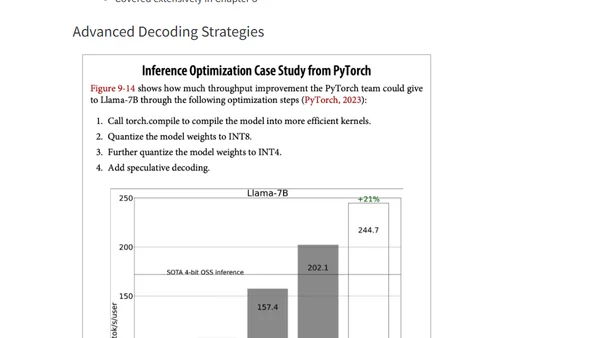

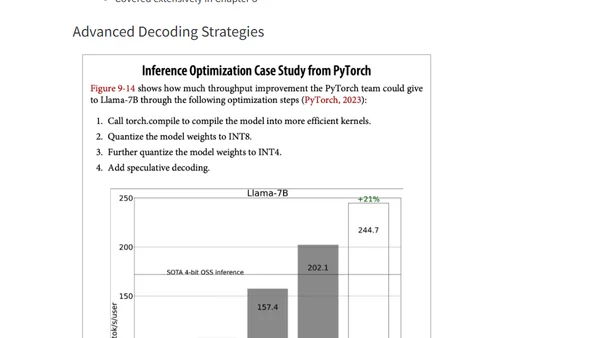

Explores techniques to optimize inference speed and memory usage for large transformer models, including distillation, pruning, and quantization.
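
As an illustration of the quantization idea, the following sketch shows symmetric per-tensor int8 post-training quantization in plain Python. It is a generic textbook scheme, not necessarily the one the linked article uses. The function names are hypothetical. The largest absolute weight is mapped to 127, so each weight is stored as a small integer plus one shared float scale. Rounding error per weight is bounded by half the scale.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization: map [-max|w|, max|w|] onto [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    # Guard against an all-zero tensor, where any positive scale works.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer (Python uses round-half-to-even) and clamp defensively.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize_int8(q: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from int8 codes and the shared scale."""
    return [v * scale for v in q]


weights = [0.5, -1.27, 0.0, 0.031]
codes, scale = quantize_int8(weights)
restored = dequantize_int8(codes, scale)
print(codes, scale)
```

Per-tensor scaling keeps the sketch short. Per-channel or per-group scales usually lose less accuracy when weight magnitudes vary a lot across rows.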

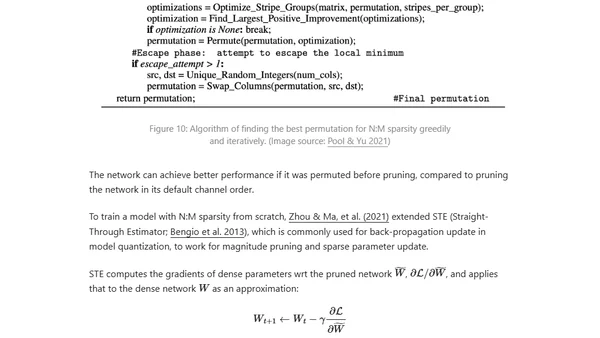

Learn to optimize BERT and RoBERTa models for faster GPU inference using DeepSpeed-Inference, reducing latency from 30 ms to 10 ms.
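
Latency improvements like the one described above are usually reported as percentiles over many runs. The following generic benchmarking sketch can be used to check such numbers on your own hardware. It assumes `fn` runs one complete inference call. The names `benchmark` and `percentile` are hypothetical. For GPU code, `fn` should block until the device has finished, because CUDA kernels launch asynchronously and otherwise only the launch time would be measured.

```python
import math
import statistics
import time
from typing import Callable, Dict, List


def percentile(samples: List[float], pct: float) -> float:
    """Nearest-rank percentile of a non-empty list of samples."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def benchmark(fn: Callable[[], object], warmup: int = 10, runs: int = 100) -> Dict[str, float]:
    """Time fn() repeatedly and report latency statistics in milliseconds."""
    # Warm-up calls absorb one-off costs such as lazy initialization or caching.
    for _ in range(warmup):
        fn()
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        fn()
        samples.append((time.perf_counter() - start) * 1000)
    return {
        "p50_ms": percentile(samples, 50),
        "p95_ms": percentile(samples, 95),
        "mean_ms": statistics.fmean(samples),
    }


# Example with a stand-in workload; replace the lambda with a real model call.
print(benchmark(lambda: sum(range(10_000)), warmup=2, runs=20))
```

Reporting p95 alongside the median exposes tail latency, which averages tend to hide.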

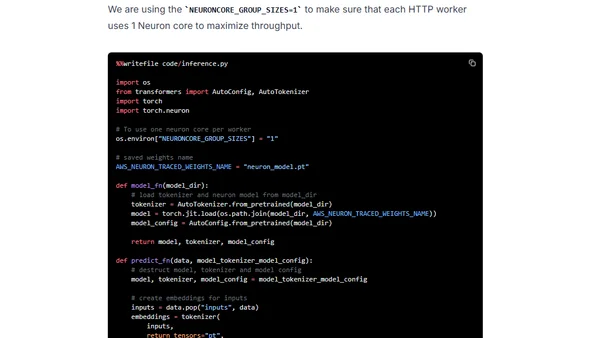

A tutorial on accelerating BERT model inference using Hugging Face Transformers and AWS Inferentia chips for cost-effective, high-performance deployment.

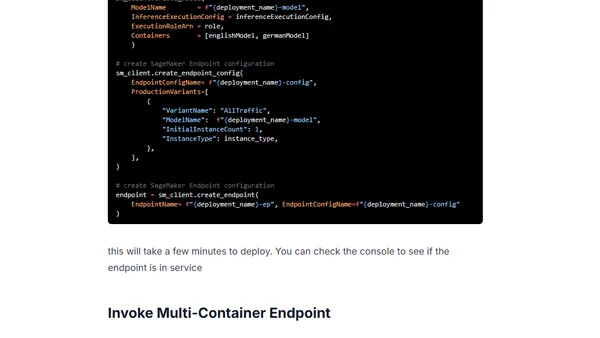

Guide to deploying multiple Hugging Face Transformer models as a cost-optimized Multi-Container Endpoint using Amazon SageMaker.