The Annotated Transformer

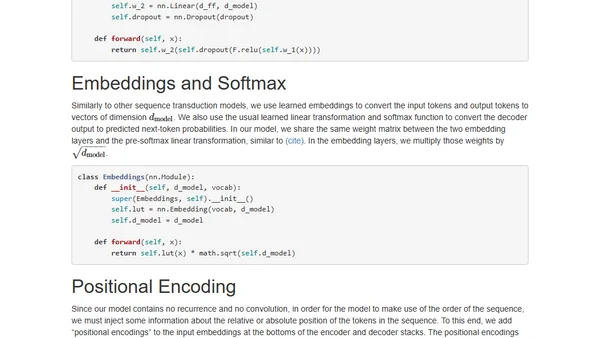

This article provides a detailed, educational walkthrough of the influential Transformer model for NLP. It presents a working, line-by-line code implementation (about 400 lines) of the architecture from the 'Attention Is All You Need' paper, explaining concepts like self-attention, encoder/decoder stacks, and multi-head attention using PyTorch.
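To give a feel for the self-attention concept mentioned above, here is a minimal sketch of scaled dot-product attention. It is not taken from the article: the original uses PyTorch, while this version uses plain Python to stay dependency-free. The function names (`softmax`, `scaled_dot_product_attention`) and the toy `tokens` input are illustrative assumptions, and the learned query/key/value projections that a real Transformer layer applies are omitted.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(queries, keys, values):
    """Compute softmax(Q K^T / sqrt(d_k)) V for lists of vectors.

    Hypothetical helper for illustration; no masking, no batching,
    and no learned projections.
    """
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
            for k in keys
        ]
        weights = softmax(scores)
        # Each output is a weighted average of the value vectors.
        out = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(out)
    return outputs


# Self-attention: queries, keys, and values all come from the same sequence.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
attended = scaled_dot_product_attention(tokens, tokens, tokens)
```

Each row of `attended` is a weighted average of the input vectors, with the weights determined by how strongly that token's query matches every key. Multi-head attention runs several such computations in parallel on different learned projections and concatenates the results.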
