The Big LLM Architecture Comparison

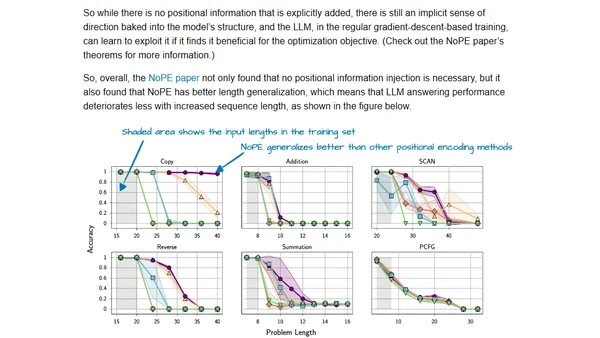

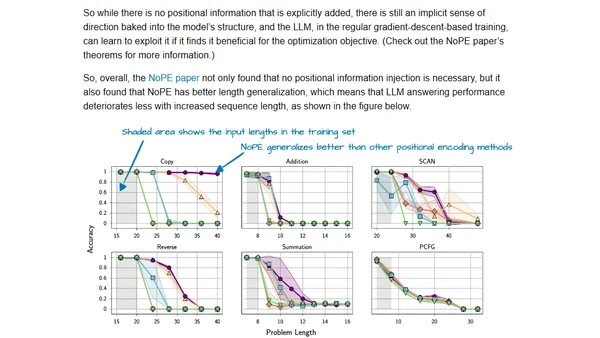

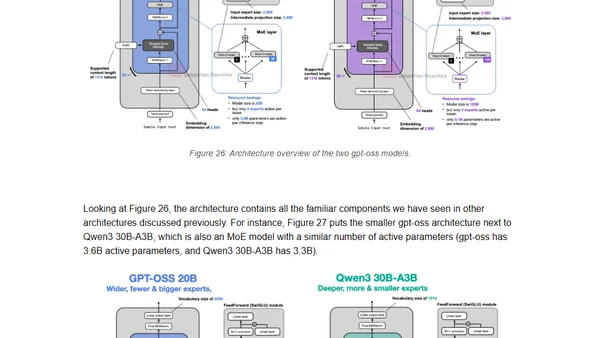

A detailed comparison of architectural developments in major large language models (LLMs) released in 2024-2025, focusing on structural changes beyond benchmarks.

Explores the philosophical argument that AI systems, particularly LLMs, possess a form of understanding and model reality, challenging the notion that they are mere token predictors.

A summary and analysis of DeepMind's RoboCat paper, a self-improving foundation agent for robotic manipulation using Transformer models.

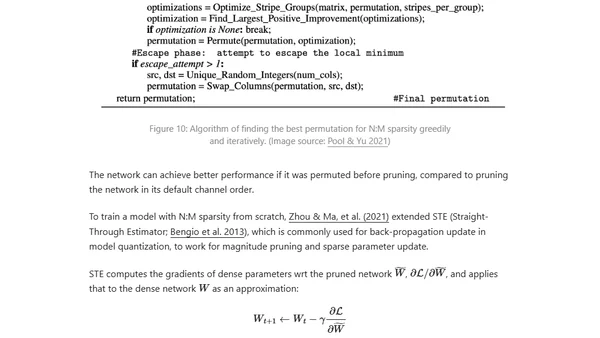

Explores techniques to optimize inference speed and memory usage for large transformer models, including distillation, pruning, and quantization.

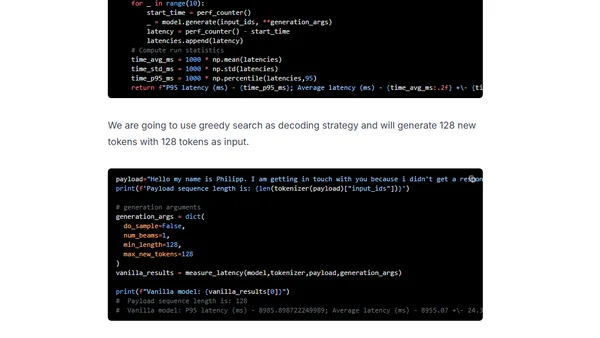

Learn to optimize GPT-J inference using DeepSpeed-Inference and Hugging Face Transformers for faster GPU performance.

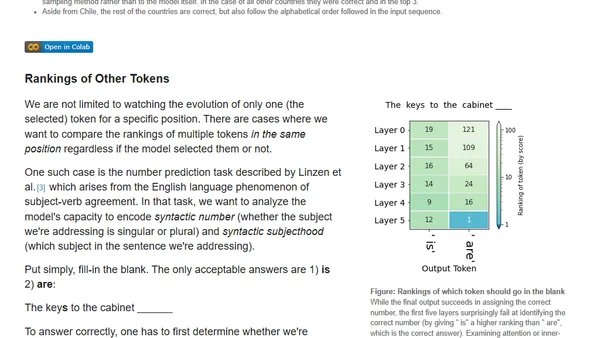

Explores visualizing hidden states in Transformer language models to understand their internal decision-making process during text generation.

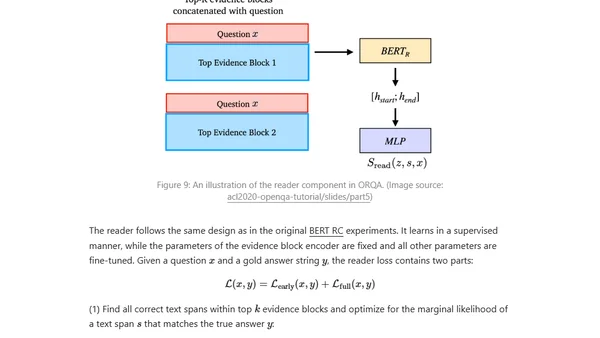

A technical overview of approaches for building open-domain question answering systems using pretrained language models and neural networks.