How Good Are the Latest Open LLMs? And Is DPO Better Than PPO?

A technical review of April 2024's major open LLM releases (Mixtral, Llama 3, Phi-3, OpenELM) and a comparison of DPO vs PPO for LLM alignment.

Argues against using LLMs to generate SQL queries for novel business questions, highlighting the importance of human analysts for precision.

A technical guide on using Azure AI Language Studio to summarize and optimize grounding documents for improving RAG-based AI solutions.

Practical tips for writing technical documentation that is optimized for LLM question-answering tools, improving developer experience.

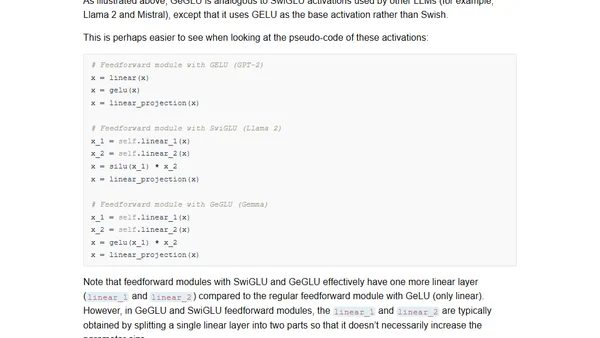

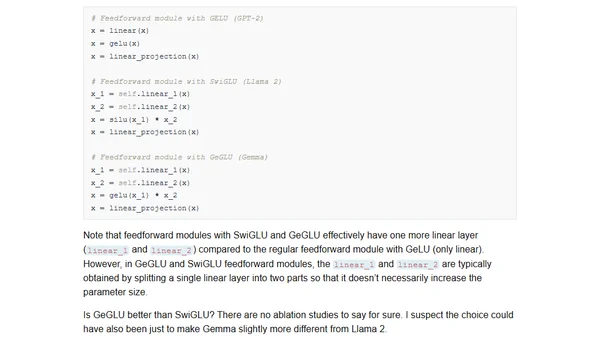

A summary of key AI research papers from February 2024, covering new open-source LLMs like OLMo and Gemma, a study on small, fine-tuned models for text summarization, and efficient fine-tuning techniques.

Explores the gap between generative AI's perceived quality in open-ended play and its practical effectiveness for specific, goal-oriented tasks.

A developer's critical reflection on GitHub Copilot's impact, questioning whether its AI assistance is creating accessibility and quality divides in software development.

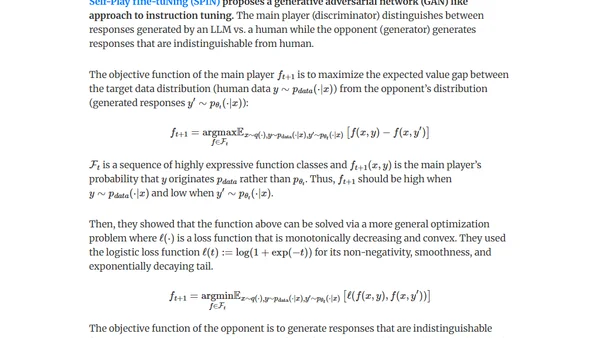

Explores methods for generating synthetic data (distillation and self-improvement) for LLM pretraining, instruction tuning, and preference tuning.

A guide on running a Large Language Model (LLM) locally using Ollama for privacy and offline use, covering setup and performance tips.

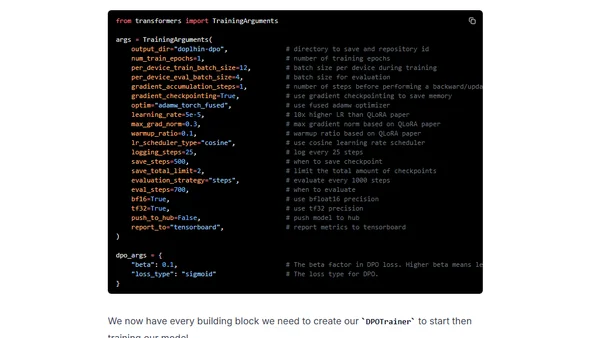

A technical guide on using Direct Preference Optimization (DPO) with Hugging Face's TRL library to align and improve open-source large language models in 2024.

A curated reading list of fundamental language modeling papers with summaries, designed to help start a weekly paper club for learning and discussion.

Investigates why the ChatGPT 3.5 API sometimes refuses to summarize arXiv papers, exploring the roles of prompts, paper content, and model behavior.

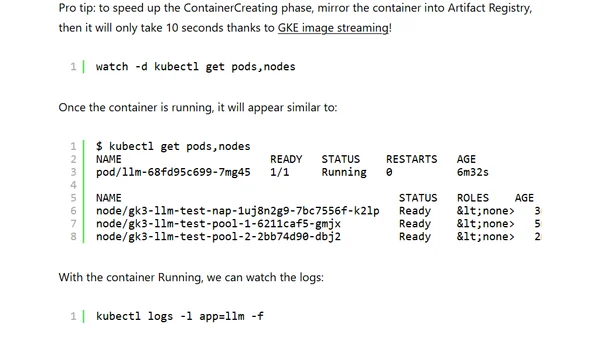

A guide to deploying and running your own LLM on Google Kubernetes Engine (GKE) Autopilot for control, privacy, and cost management.

A simple explanation of Retrieval-Augmented Generation (RAG), covering its core components: LLMs, context, and vector databases.

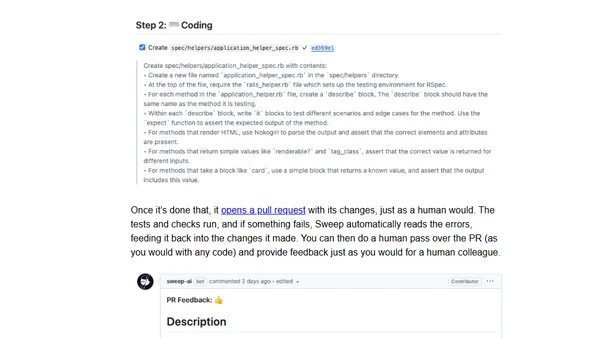

A developer's experience using Sweep, an LLM-powered tool that generates pull requests to write unit tests and fix code in a GitHub workflow.

An analysis of ChatGPT's knowledge cutoff date, testing its accuracy on celebrity death dates to understand the limits of its training data.

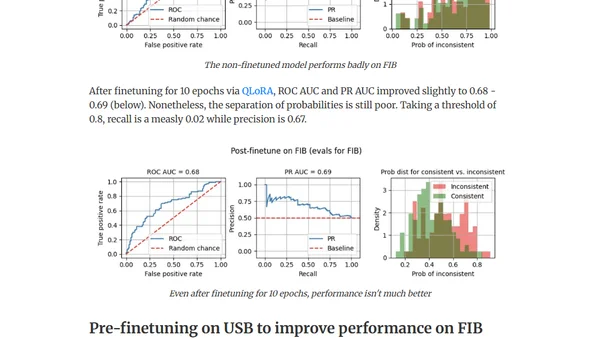

Explores using out-of-domain data to improve LLM fine-tuning for detecting factual inconsistencies (hallucinations) in text summaries.

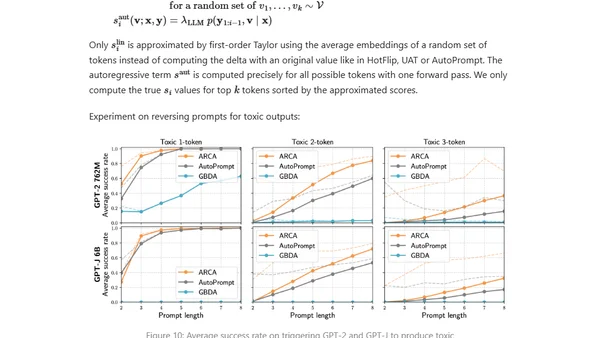

Explores adversarial attacks and jailbreak prompts that can make large language models produce unsafe or undesired outputs, bypassing safety measures.