LLM Model Serving on Autopilot

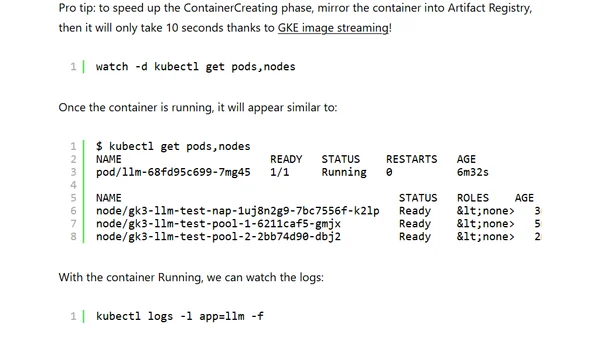

This technical tutorial explains how to deploy and serve a large language model (LLM) like Falcon-40b on Google Kubernetes Engine (GKE) in Autopilot mode. It details the configuration changes needed for Autopilot's pod-based model, such as using an ephemeral volume, and covers cluster setup, GPU selection (NVIDIA L4), and deployment YAML specifics to run a self-hosted LLM API for business applications.
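
To make the summary more concrete, here is a minimal sketch of what such a Deployment might look like, built as a plain Python dict and printed as JSON (which `kubectl apply -f -` accepts). It is not the tutorial's actual manifest. The function name, deployment name, container image, mount path, GPU count, and volume size are all hypothetical placeholders. The parts that reflect documented Kubernetes and GKE conventions are the `cloud.google.com/gke-accelerator: nvidia-l4` node selector, the `nvidia.com/gpu` resource limit, and the generic ephemeral volume declared through `volumeClaimTemplate`.

```python
import json


def build_llm_deployment(
    name="falcon-40b",                          # hypothetical deployment name
    image="example.invalid/llm-server:latest",  # placeholder image, not a real one
    gpu_count=2,                                # assumed GPU count, adjust to the model
    scratch_gi=100,                             # assumed scratch size in GiB
):
    """Return a Kubernetes Deployment manifest as a plain dict."""
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name},
        "spec": {
            "replicas": 1,
            "selector": {"matchLabels": {"app": name}},
            "template": {
                "metadata": {"labels": {"app": name}},
                "spec": {
                    # On Autopilot, the GPU type is requested per pod
                    # through a node selector rather than a node pool.
                    "nodeSelector": {
                        "cloud.google.com/gke-accelerator": "nvidia-l4"
                    },
                    "containers": [
                        {
                            "name": "server",
                            "image": image,
                            "resources": {
                                "limits": {"nvidia.com/gpu": str(gpu_count)}
                            },
                            "volumeMounts": [
                                # Hypothetical mount path for model weights.
                                {"name": "model-cache", "mountPath": "/data"}
                            ],
                        }
                    ],
                    "volumes": [
                        {
                            "name": "model-cache",
                            # Generic ephemeral volume: the claim is created
                            # with the pod and deleted along with it.
                            "ephemeral": {
                                "volumeClaimTemplate": {
                                    "spec": {
                                        "accessModes": ["ReadWriteOnce"],
                                        "resources": {
                                            "requests": {
                                                "storage": f"{scratch_gi}Gi"
                                            }
                                        },
                                    }
                                }
                            },
                        }
                    ],
                },
            },
        },
    }


if __name__ == "__main__":
    print(json.dumps(build_llm_deployment(), indent=2))
```

Building the manifest in code rather than hand-editing YAML makes it easy to vary the GPU count or scratch size per model. The original tutorial remains the reference for the actual values it uses.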
