TIL: Masked Language Models Are Surprisingly Capable Zero-Shot Learners

Explores using a masked language model's head for zero-shot tasks, achieving strong results without task-specific heads.

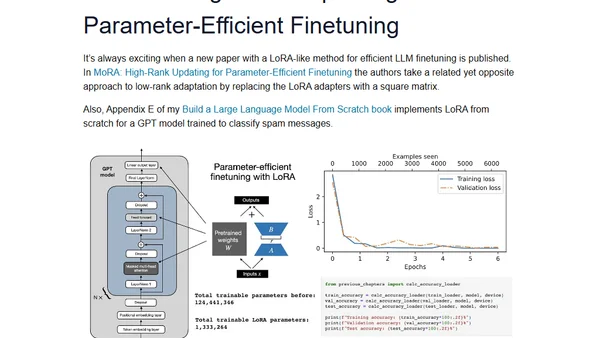

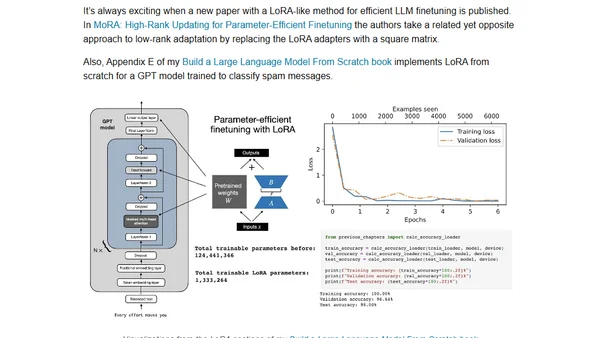

Analyzes new research on instruction masking and LoRA finetuning techniques for improving large language models (LLMs), with practical insights for developers.

A developer compares 8 LLMs on a custom retrieval task using medical transcripts, analyzing performance on simple to complex questions.

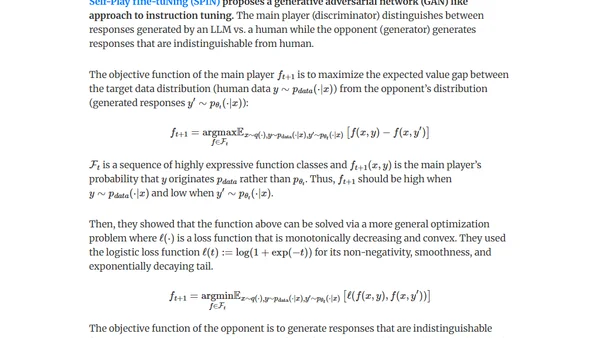

Explores methods for generating synthetic data (distillation and self-improvement) for LLM pretraining, instruction-tuning, and preference-tuning.

Explores dataset-centric strategies for fine-tuning LLMs, focusing on instruction datasets to improve model performance without changing the model architecture.

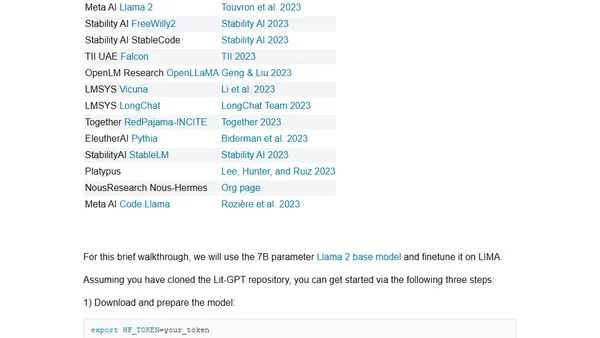

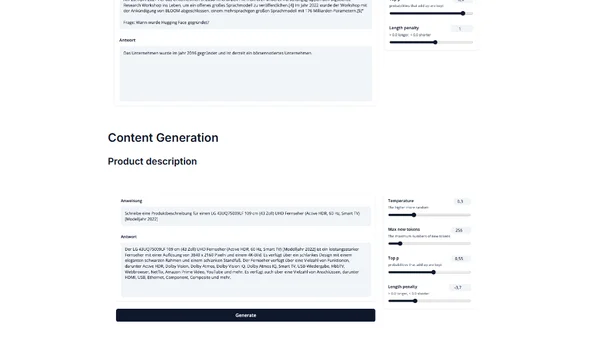

A technical guide to instruction-tuning Meta's Llama 2 model to generate instructions from inputs, enabling personalized LLM applications.

Introduces IGEL, an instruction-tuned German large language model based on BLOOM, for NLP tasks like translation and QA.