DeepSeek V4 - almost on the frontier, a fraction of the price

DeepSeek releases V4 Pro and Flash AI models, offering frontier-level performance at significantly lower costs.

DeepSeek V4 preview models offer frontier-level performance at a fraction of the cost, with up to 1M token context and open weights.

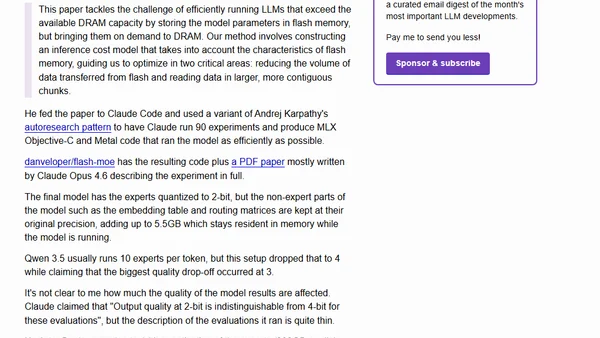

Explores using Apple's 'LLM in a Flash' research to run a massive 397B parameter AI model locally on a MacBook by streaming weights from SSD.

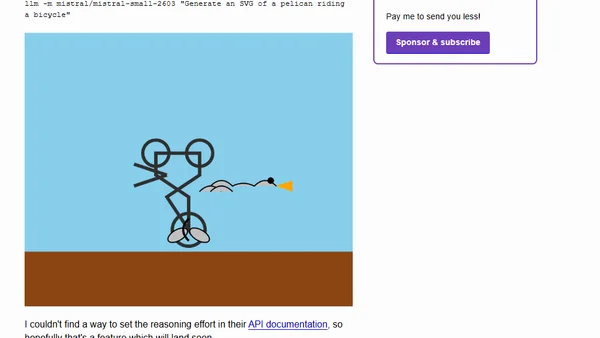

Mistral AI releases Mistral Small 4, a new 119B parameter open model combining reasoning, multimodal, and coding capabilities.

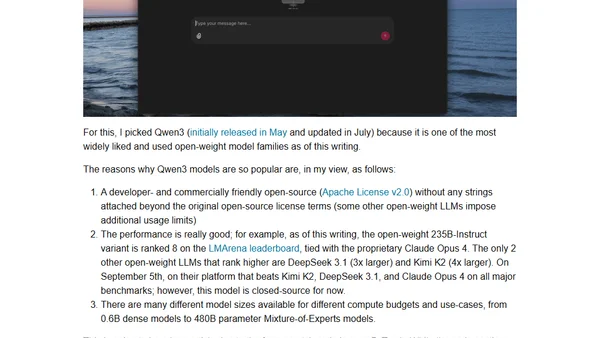

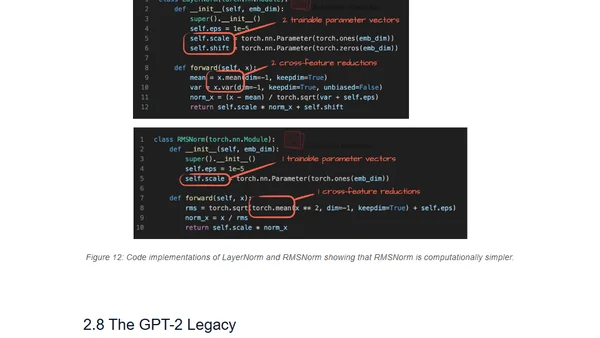

A hands-on tutorial implementing the Qwen3 large language model architecture from scratch using pure PyTorch, explaining its core components.

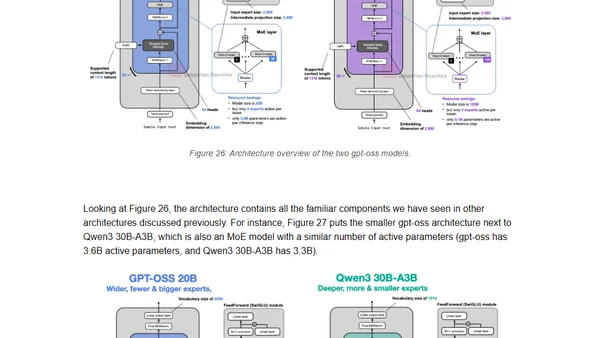

Analysis of OpenAI's new gpt-oss models, comparing architectural improvements from GPT-2 and examining optimizations like MXFP4 and Mixture-of-Experts.

A detailed comparison of architectural developments in major large language models (LLMs) released in 2024-2025, focusing on structural changes beyond benchmarks.

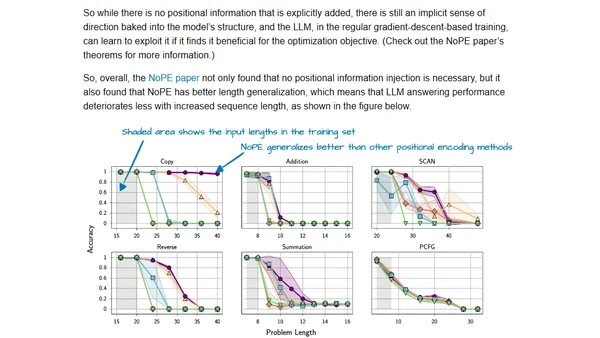

A curated list of 12 influential LLM research papers from 2024, one for each month, covering topics like Mixture of Experts, LoRA, and scaling laws.

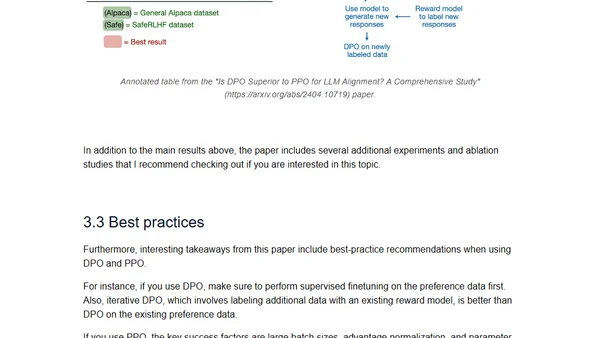

A review and comparison of the latest open LLMs (Mixtral, Llama 3, Phi-3, OpenELM) and a study on DPO vs. PPO for LLM alignment.

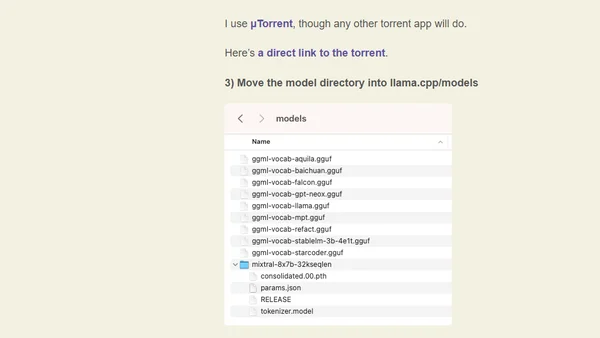

A technical guide on running the Mixtral 8x7B Mixture of Experts AI model locally on a MacBook, including setup steps and performance notes.

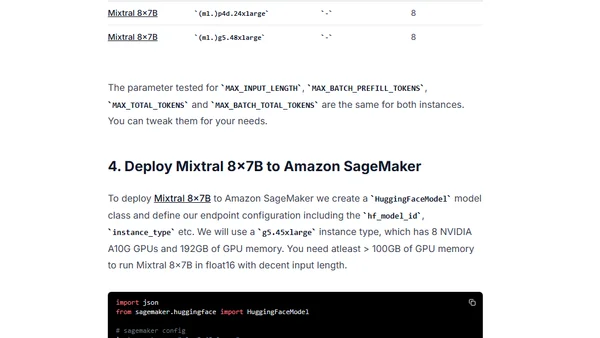

A technical guide on deploying the Mixtral 8x7B open-source LLM from Mistral AI to Amazon SageMaker using the Hugging Face LLM DLC.

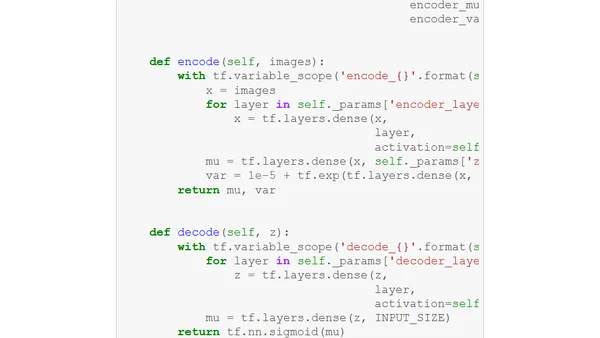

Explores an unsupervised approach combining Mixture of Experts (MoE) with Variational Autoencoders (VAE) for conditional data generation without labels.