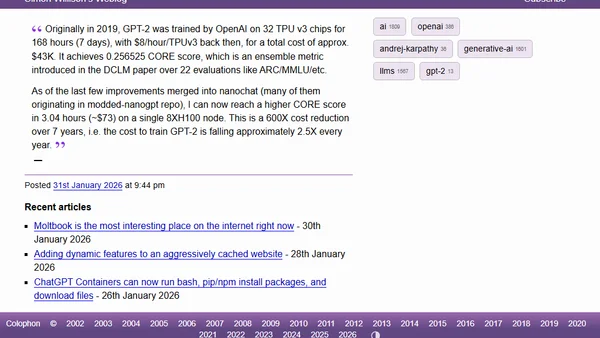

Quoting Andrej Karpathy

Andrej Karpathy notes a 600x reduction in the cost of training a GPT-2-level model over 7 years, highlighting rapid efficiency gains in AI.

A review of key paradigm shifts in Large Language Models (LLMs) in 2025, focusing on RLVR training and new conceptual models of AI intelligence.

![The day piracy changed [blog]](https://alldevblogs.blob.core.windows.net/thumbs/article-5db2ab17df73-full-25f3e6da.webp)

The author argues that traditional piracy is dead: corporations such as Meta have redefined it by training AI models on scraped, pirated content without consequence.

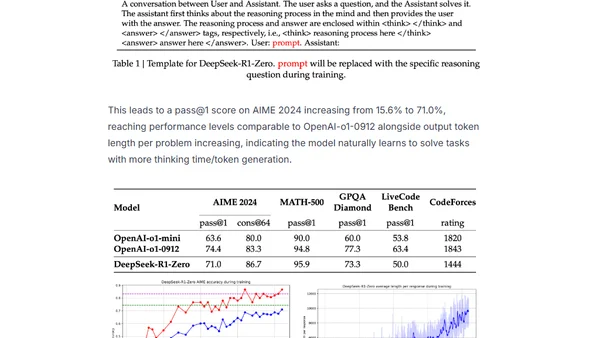

Explains the training of DeepSeek-R1, focusing on the Group Relative Policy Optimization (GRPO) reinforcement learning method.

Analyzes the limitations of AI chatbots like ChatGPT in providing accurate technical answers and discusses the need for curated data and human experts.