The State Of LLMs 2025: Progress, Problems, and Predictions

A 2025 year-in-review analysis of large language models (LLMs), covering key developments in reasoning, architecture, costs, and predictions for 2026.

A 2025 year-in-review of Large Language Models, covering major developments in reasoning, architecture, costs, and predictions for 2026.

A technical analysis of the DeepSeek model series, from V3 to the latest V3.2, covering architecture, performance, and release timeline.

A technical analysis of DeepSeek V3.2's architecture, sparse attention, and reinforcement learning updates, comparing it to other flagship AI models.

Analysis of DeepSeek V3.2's architecture, sparse attention mechanism, and RL updates compared to its predecessor and proprietary models.

DeepSeek-Math-V2 is an open-source, 685B-parameter AI model that achieves gold-medal-level performance on mathematical olympiad problems.

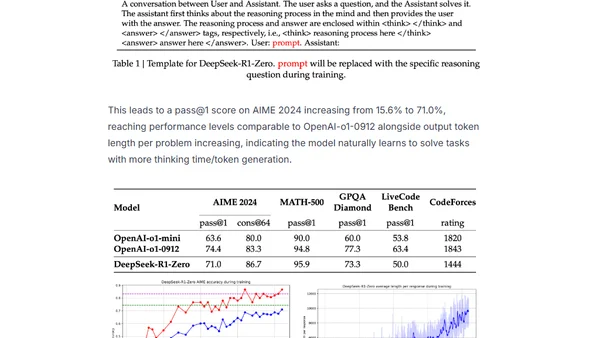

Explores how advanced AI models use chain-of-thought reasoning to break complex problems into simpler steps, improving accuracy and performance.

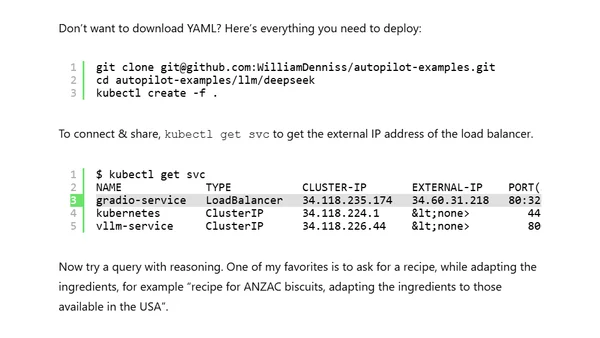

A technical guide on deploying DeepSeek's open reasoning AI models on Google Kubernetes Engine (GKE) using vLLM and a Gradio interface.

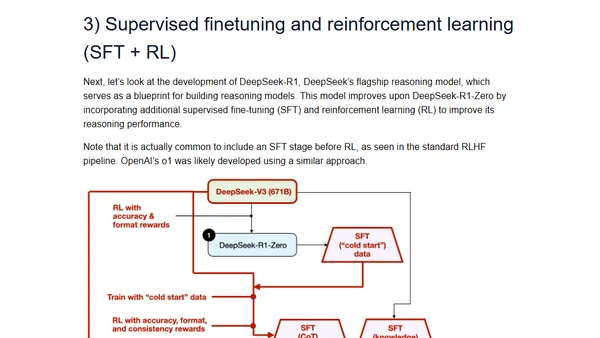

Explores four main approaches to building and enhancing reasoning capabilities in Large Language Models (LLMs) for complex tasks.

A tutorial on installing the DeepSeek AI model locally on a Linux machine using the Ollama platform.

Explains the training of DeepSeek-R1, focusing on the Group Relative Policy Optimization (GRPO) reinforcement learning method.