Microsoft's 2026 Global ML Building Footprints

Analysis of Microsoft's 2026 Global ML Building Footprints dataset, including technical setup and data exploration using DuckDB and QGIS.

A beginner-friendly introduction to using PySpark for big data processing with Apache Spark, covering the fundamentals.

Announces 9 new free and paid books added to the Big Book of R collection, covering data science, visualization, and package development.

A comprehensive 2025 guide to Apache Iceberg, covering its architecture, ecosystem, and practical use for data lakehouse management.

Explains the hierarchical structure of Parquet files, detailing how row groups, column chunks, and pages are organized to optimize storage and query performance.
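
A simplified model of that hierarchy helps show why it matters for queries. The Python sketch below is hypothetical: the class and function names are illustrative and are not the Parquet format's Thrift metadata or any library's API. It assumes each column chunk exposes min/max statistics (Parquet writers can record these per column chunk) and shows how a reader can skip whole row groups that cannot match a range predicate.

```python
from dataclasses import dataclass


@dataclass
class Page:
    values: list  # an encoded data page, modeled here as a plain list


@dataclass
class ColumnChunk:
    pages: list[Page]

    def stats(self):
        # Real writers store min/max in the file metadata; this sketch
        # computes them on the fly for simplicity.
        all_values = [v for page in self.pages for v in page.values]
        return min(all_values), max(all_values)


@dataclass
class RowGroup:
    columns: dict[str, ColumnChunk]


def prune_row_groups(row_groups, column, lo, hi):
    """Return indices of row groups whose [min, max] for `column` overlaps [lo, hi]."""
    keep = []
    for i, rg in enumerate(row_groups):
        cmin, cmax = rg.columns[column].stats()
        if cmax >= lo and cmin <= hi:
            keep.append(i)
    return keep


file_row_groups = [
    RowGroup({"temp": ColumnChunk([Page([1, 5]), Page([7])])}),
    RowGroup({"temp": ColumnChunk([Page([20, 25])])}),
    RowGroup({"temp": ColumnChunk([Page([8, 12])])}),
]
print(prune_row_groups(file_row_groups, "temp", 10, 22))  # [1, 2]
```

Only row groups 1 and 2 would need their pages read for `10 <= temp <= 22`; row group 0 is skipped from metadata alone.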

Final guide in a series covering performance tuning and best practices for optimizing Apache Parquet files in big data workflows.

Explores why Parquet is the ideal columnar file format for optimizing storage and query performance in modern data lake and lakehouse architectures.
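
Part of the storage benefit comes from keeping a column's values next to each other, so low-cardinality columns compress well. Parquet uses dictionary encoding and a run-length/bit-packing hybrid; the Python sketch below is a deliberately simplified illustration of those two ideas (the real encoding works on bit-packed integers, and the function names here are hypothetical).

```python
def dictionary_encode(column):
    """Replace each value with a small integer index into a list of distinct values."""
    dictionary = []
    index_of = {}
    indices = []
    for value in column:
        if value not in index_of:
            index_of[value] = len(dictionary)
            dictionary.append(value)
        indices.append(index_of[value])
    return dictionary, indices


def run_length_encode(indices):
    """Collapse consecutive repeats into (value, run_length) pairs."""
    runs = []
    for i in indices:
        if runs and runs[-1][0] == i:
            runs[-1][1] += 1
        else:
            runs.append([i, 1])
    return [tuple(r) for r in runs]


country = ["US", "US", "US", "DE", "DE", "US", "US"]
dictionary, indices = dictionary_encode(country)
print(dictionary)                  # ['US', 'DE']
print(run_length_encode(indices))  # [(0, 3), (1, 2), (0, 2)]
```

Seven strings become a two-entry dictionary plus three runs; a row-oriented layout would interleave these values with other columns and lose most of that repetition.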

An introduction to Apache Parquet, a columnar storage file format for efficient data processing and analytics.

An introduction to Apache Iceberg, a table format for data lakehouses, explaining its architecture and providing learning resources.

Interview with Suresh Srinivas on his career in big data, founding Hortonworks, scaling Uber's data platform, and leading the OpenMetadata project.

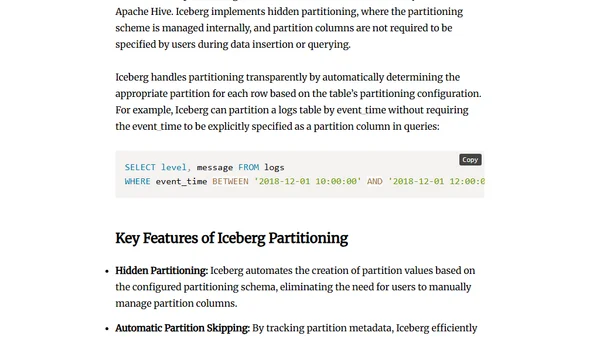

Compares partitioning techniques in Apache Hive and Apache Iceberg, highlighting Iceberg's advantages for query performance and data management.
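
A key difference is that Iceberg's hidden partitioning derives partition values from a source column through transforms such as `day(ts)`, whereas a Hive-style table typically needs an explicit partition column (for example a `dt` string) that writers must populate and queries must filter on. The Python sketch below illustrates only the Iceberg side under simplifying assumptions; the function names are hypothetical, and `day_transform` mirrors the days-since-epoch value of Iceberg's `day` transform.

```python
from datetime import date, datetime, timezone

EPOCH = date(1970, 1, 1)


def day_transform(ts: datetime) -> int:
    """Days since 1970-01-01; aware datetimes are normalized to UTC first."""
    if ts.tzinfo is not None:
        ts = ts.astimezone(timezone.utc)
    return (ts.date() - EPOCH).days


def partition_rows(rows):
    """Group rows by day(ts); the writer derives the value, users never supply it."""
    partitions = {}
    for row in rows:
        partitions.setdefault(day_transform(row["ts"]), []).append(row)
    return partitions


def prune_partitions(partitions, start: datetime, end: datetime):
    """Turn a filter on the source column `ts` into the day partitions to scan."""
    lo, hi = day_transform(start), day_transform(end)
    return sorted(p for p in partitions if lo <= p <= hi)


utc = timezone.utc
rows = [
    {"ts": datetime(2024, 1, 1, 9, 0, tzinfo=utc), "v": 1},
    {"ts": datetime(2024, 1, 2, 23, 0, tzinfo=utc), "v": 2},
    {"ts": datetime(2024, 1, 5, tzinfo=utc), "v": 3},
]
parts = partition_rows(rows)
print(prune_partitions(parts, datetime(2024, 1, 1, tzinfo=utc),
                       datetime(2024, 1, 3, tzinfo=utc)))  # [19723, 19724]
```

The query filters only on `ts`, yet the scan is limited to two day partitions; with a Hive-style `dt` column, the same pruning would depend on the query also filtering on `dt`.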

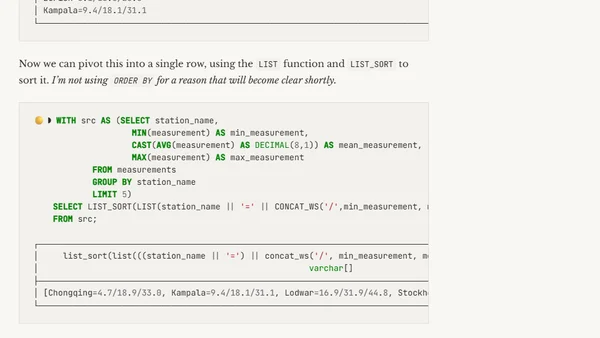

A technical guide to solving the One Billion Row Challenge (1BRC) using SQL and DuckDB, including data loading and aggregation.

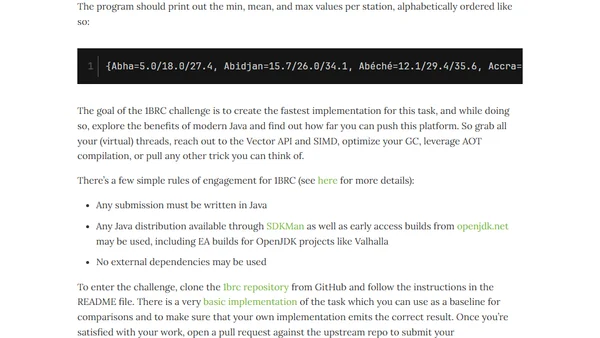

A Java programming challenge to process one billion rows of temperature data, focusing on performance optimization and modern Java features.
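
Both 1BRC entries above compute the same aggregation: for input lines of the form `station;temperature`, report the minimum, mean, and maximum temperature per station, sorted by station name. The single-threaded Python sketch below shows only that logic under these assumptions; it is not tuned for speed, and the function name and sample data are illustrative.

```python
def aggregate(lines):
    """Return {station: (min, mean, max)} sorted by station name."""
    stats = {}  # station -> [min, max, total, count]
    for line in lines:
        station, _, temp_text = line.strip().partition(";")
        if not station:
            continue  # skip blank lines
        temp = float(temp_text)
        s = stats.get(station)
        if s is None:
            stats[station] = [temp, temp, temp, 1]
        else:
            s[0] = min(s[0], temp)
            s[1] = max(s[1], temp)
            s[2] += temp
            s[3] += 1
    # Means are rounded to one decimal place.
    return {
        station: (mn, round(total / count, 1), mx)
        for station, (mn, mx, total, count) in sorted(stats.items())
    }


sample = ["Hamburg;12.0", "Bulawayo;8.9", "Hamburg;34.2", "Palembang;38.8", "Bulawayo;-1.3"]
print(aggregate(sample))
# {'Bulawayo': (-1.3, 3.8, 8.9), 'Hamburg': (12.0, 23.1, 34.2), 'Palembang': (38.8, 38.8, 38.8)}
```

Keeping a running min, max, sum, and count per station means memory grows with the number of stations, not the number of rows, which is what makes a billion-row input tractable in a single pass.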

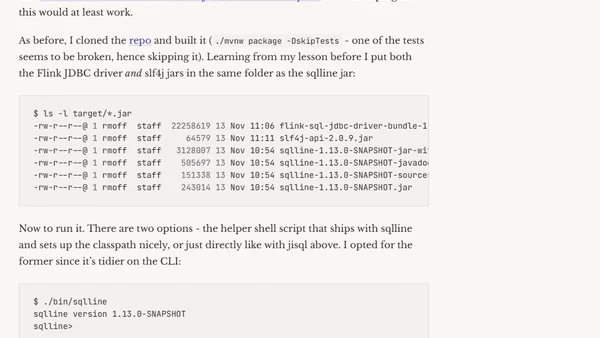

Explores the two JDBC driver options for connecting to Apache Flink: the new Flink JDBC driver and the Hive JDBC driver via the SQL Gateway.

An introductory overview of Apache Flink, explaining its core concepts as a distributed stream processing framework, its history, and primary use cases.

A developer's personal journey and structured plan for learning Apache Flink, a stream processing framework, starting from the basics.

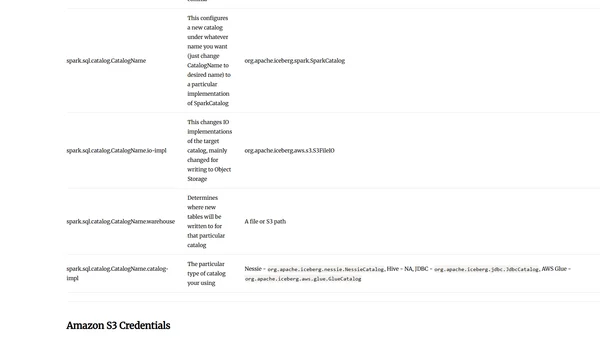

A guide to configuring Apache Spark for use with the Apache Iceberg table format, covering packages, flags, and programmatic setup.

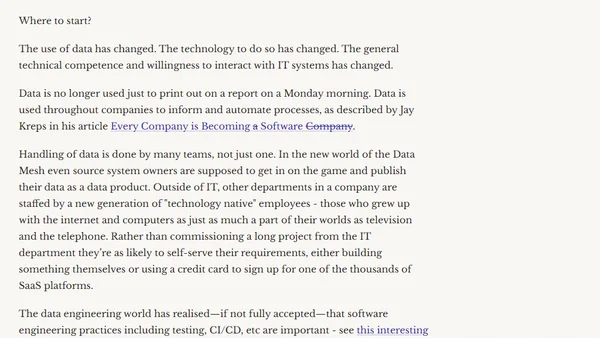

A data engineer explores the evolution of the data ecosystem, comparing past practices with modern tools and trends in 2022.

Argues that raw data is overvalued unless it is given proper context and converted into meaningful information and knowledge.

Explains the APPROX_COUNT_DISTINCT function for faster, memory-efficient distinct counts in SQL, comparing it to exact COUNT(DISTINCT).
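
Engines differ in how they implement `APPROX_COUNT_DISTINCT`, but many build it on HyperLogLog-style sketches, which trade a small, bounded error for a fixed amount of memory. The Python sketch below is a minimal textbook HyperLogLog, not any engine's actual implementation; the function names, the SHA-1-based hash, and the default precision `p=12` (4,096 registers, roughly 1.6% standard error) are assumptions chosen for illustration.

```python
import hashlib
import math


def _hash64(value: str) -> int:
    """Deterministic 64-bit hash taken from the first 8 bytes of SHA-1."""
    return int.from_bytes(hashlib.sha1(value.encode("utf-8")).digest()[:8], "big")


def approx_count_distinct(values, p=12):
    m = 1 << p                      # number of registers
    registers = [0] * m
    width = 64 - p                  # bits left after choosing a register
    for v in values:
        h = _hash64(str(v))
        idx = h >> width            # top p bits pick the register
        rest = h & ((1 << width) - 1)
        rank = width - rest.bit_length() + 1  # position of the leftmost 1-bit
        registers[idx] = max(registers[idx], rank)
    alpha = 0.7213 / (1 + 1.079 / m)
    estimate = alpha * m * m / sum(2.0 ** -r for r in registers)
    zeros = registers.count(0)
    if estimate <= 2.5 * m and zeros:
        estimate = m * math.log(m / zeros)    # linear counting for small inputs
    return round(estimate)


values = [f"user-{i}" for i in range(20_000)]
print(approx_count_distinct(values))           # close to 20000, not exact
print(approx_count_distinct(values + values))  # duplicates leave the estimate unchanged
```

Because each register keeps only a maximum, repeated values cannot change the result, and memory stays at 4,096 small integers no matter how many rows are scanned; an exact `COUNT(DISTINCT)` must instead remember every distinct value it has seen.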