Quoting Andrej Karpathy

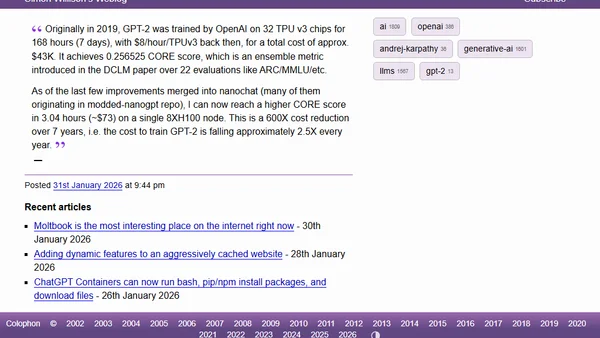

Andrej Karpathy notes a 600x cost reduction in training a GPT-2 level model over 7 years, highlighting rapid efficiency gains in AI.

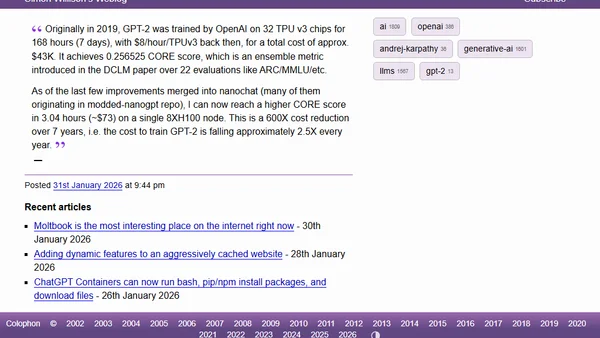

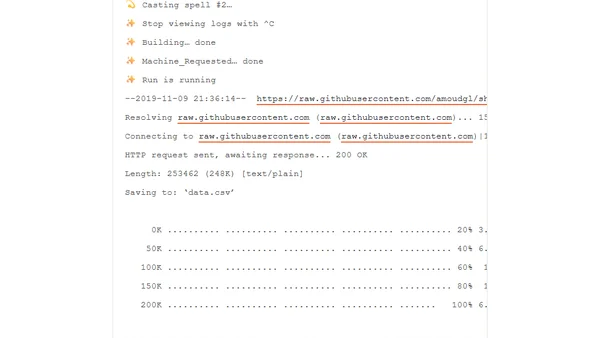

A 3-hour coding workshop teaching how to implement, train, and use Large Language Models (LLMs) from scratch with practical examples.

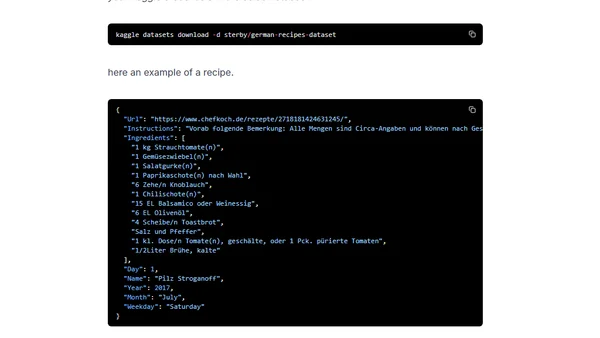

A tutorial on fine-tuning a German GPT-2 language model for text generation, using Hugging Face's Transformers library and a dataset of recipes.

A tutorial on using Hugging Face's API to access and fine-tune OpenAI's GPT-2 model for text generation.