All Roads Lead to Robotics

A robotics AI lead reflects on the field's future, discussing scaling robot autonomy with neural networks and parallels to large language models.

Announcing libactivation, a new Python package on PyPI providing activation functions and their derivatives for machine learning and neural networks.

Strategies for improving LLM performance through dataset-centric fine-tuning, focusing on instruction datasets rather than model architecture changes.

Explores dataset-centric strategies for fine-tuning LLMs, focusing on instruction datasets to improve model performance without altering architecture.

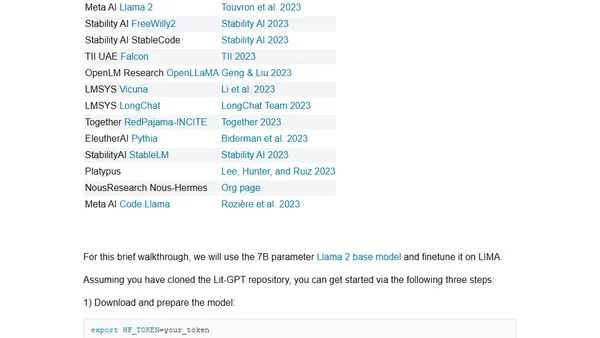

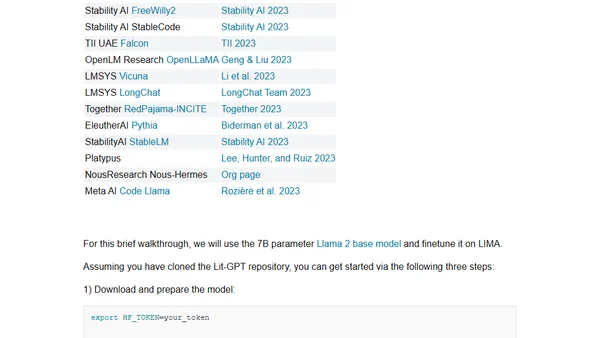

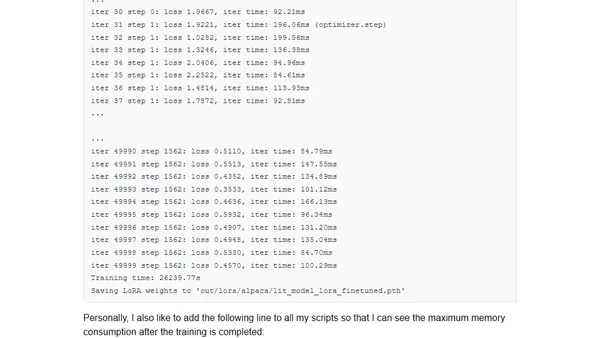

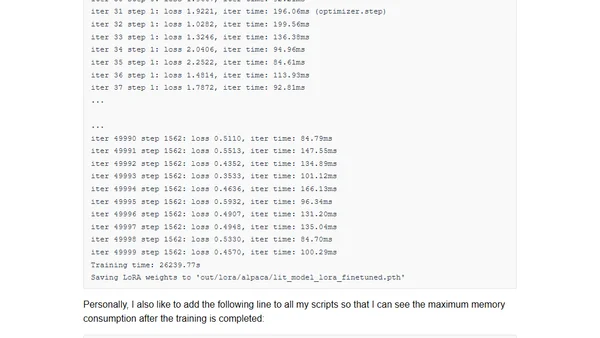

A guide to participating in the NeurIPS 2023 LLM Efficiency Challenge, focusing on efficient fine-tuning of large language models on a single GPU.

A guide to participating in the NeurIPS 2023 LLM Efficiency Challenge, covering setup, rules, and strategies for efficient LLM fine-tuning on limited hardware.

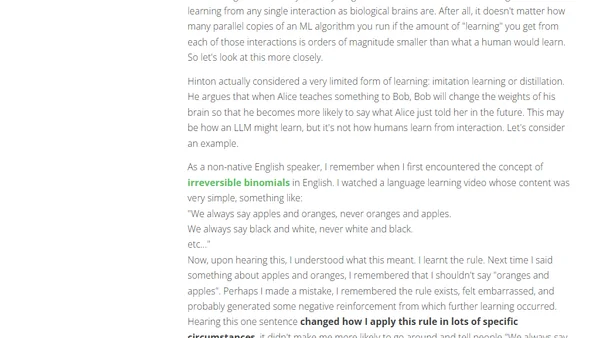

Analyzes Geoffrey Hinton's technical argument comparing biological and digital intelligence, concluding digital AI will surpass human capabilities.

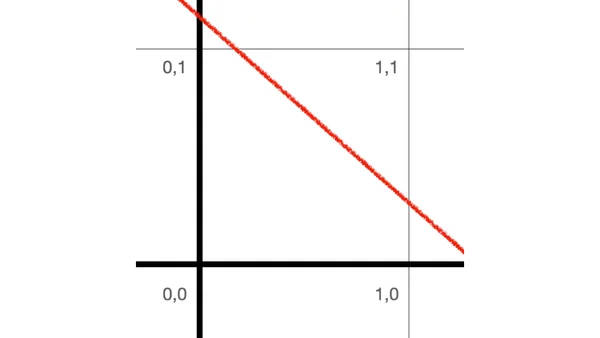

An introduction to artificial neural networks, explaining the perceptron as the simplest building block and its ability to learn basic logical functions.

Introducing Linear Diffusion, a novel diffusion model built entirely from linear components for generating simple images like MNIST digits.

Argues against the 'lossy compression' analogy for LLMs like ChatGPT, proposing instead that they are simulators creating temporary simulacra.

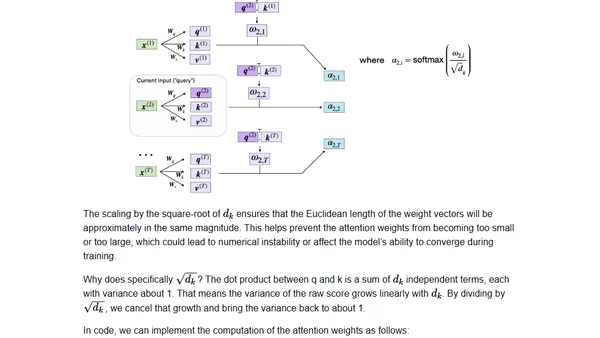

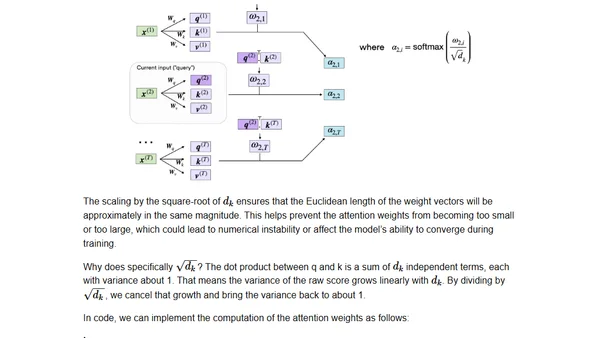

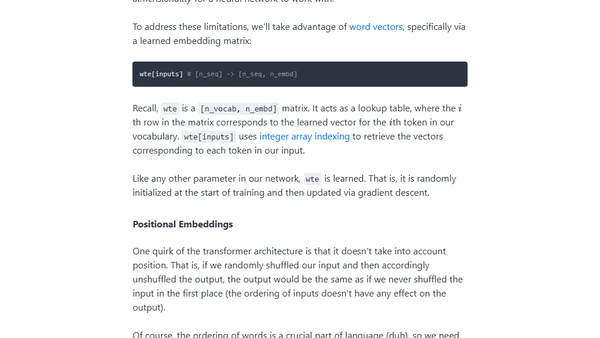

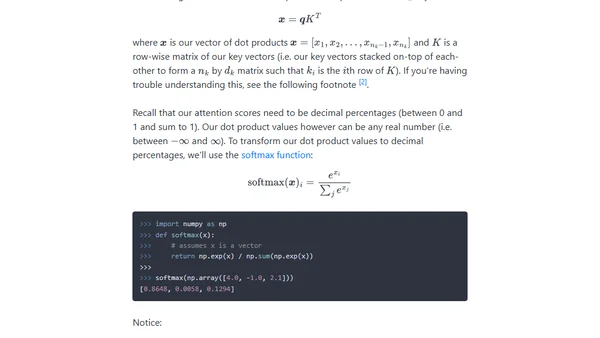

A technical guide to coding the self-attention mechanism from scratch, as used in transformers and large language models.

Argues that AI image generation won't replace human artists, using information theory to explain their unique creative value.

A technical guide to implementing a GPT model from scratch using only 60 lines of NumPy code, including loading pre-trained GPT-2 weights.

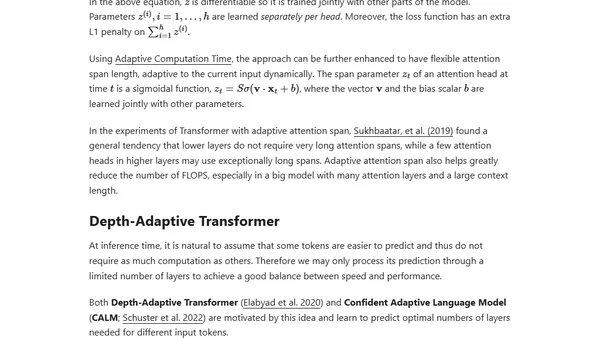

An updated, comprehensive overview of the Transformer architecture and its many recent improvements, including detailed notation and attention mechanisms.

A curated list of the top 10 open-source machine learning and AI projects released or updated in 2022, including PyTorch 2.0 and scikit-learn 1.2.

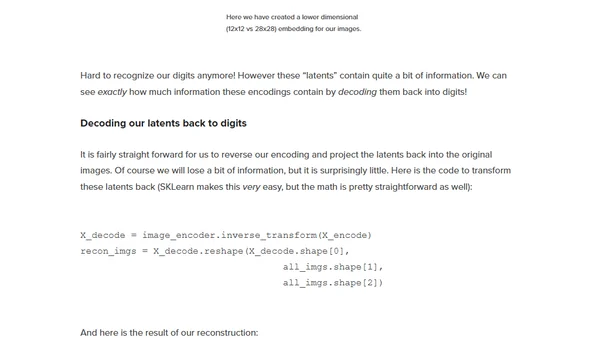

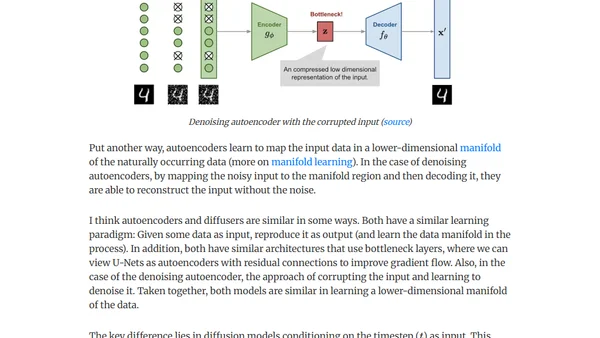

Compares autoencoders and diffusers, explaining their architectures, learning paradigms, and key differences in deep learning.

A technical explanation of the attention mechanism in transformers, building intuition from key-value lookups to the scaled dot product equation.

Author announces the launch of 'Ahead of AI', a monthly newsletter covering AI trends, educational content, and personal updates on machine learning projects.

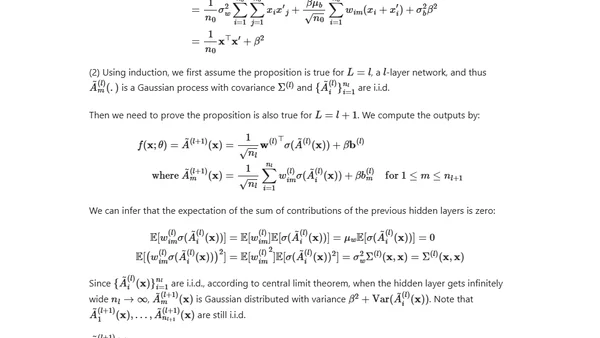

A deep dive into the Neural Tangent Kernel (NTK) theory, explaining the math behind why wide neural networks converge during gradient descent training.