The NeurIPS 2023 LLM Efficiency Challenge Starter Guide

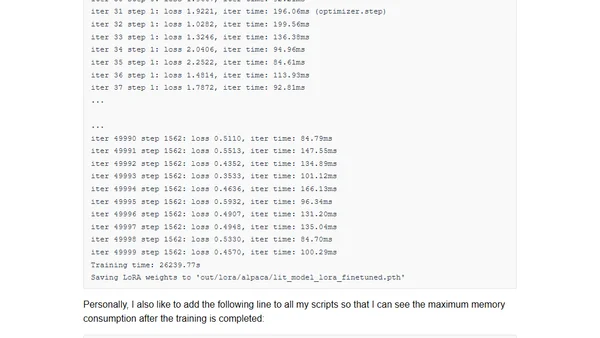

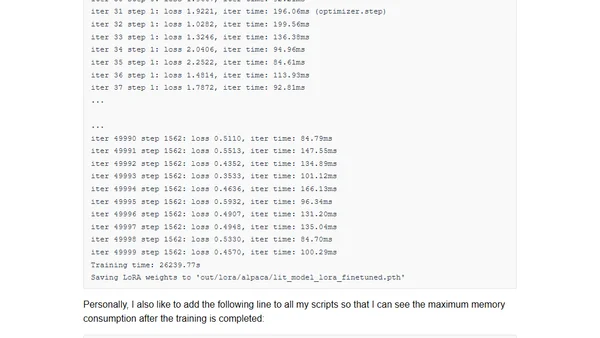

A guide to participating in the NeurIPS 2023 LLM Efficiency Challenge, covering setup, rules, and strategies for efficient LLM fine-tuning on limited hardware.

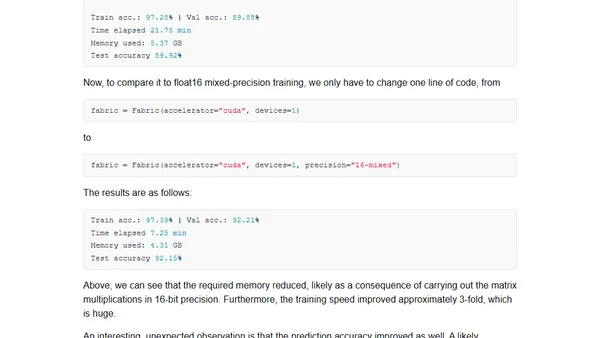

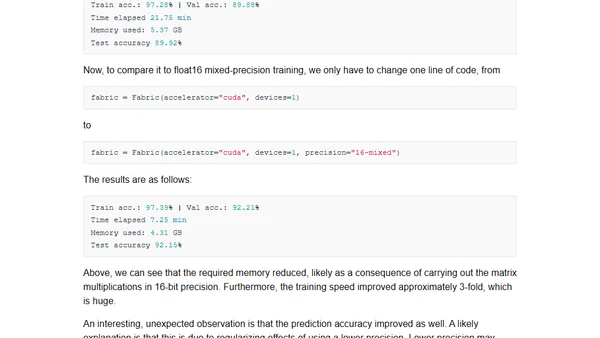

Explores how mixed-precision techniques can speed up large language model training and inference by up to 3x and reduce memory use, without losing accuracy.

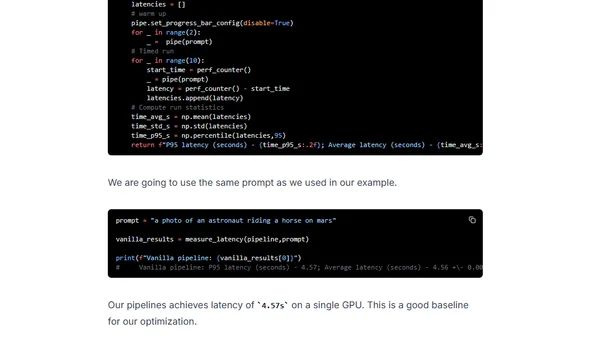

Learn to optimize Stable Diffusion for faster GPU inference using DeepSpeed-Inference and Hugging Face Diffusers.

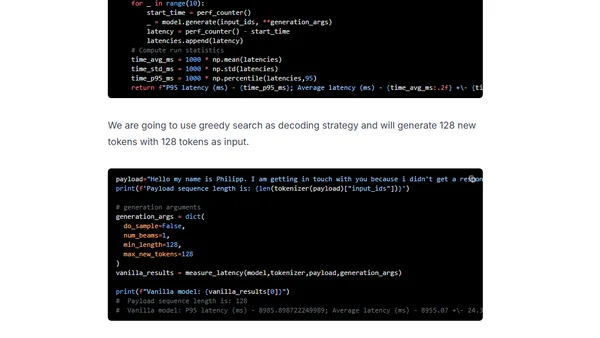

Learn to optimize GPT-J inference using DeepSpeed-Inference and Hugging Face Transformers for faster GPU performance.

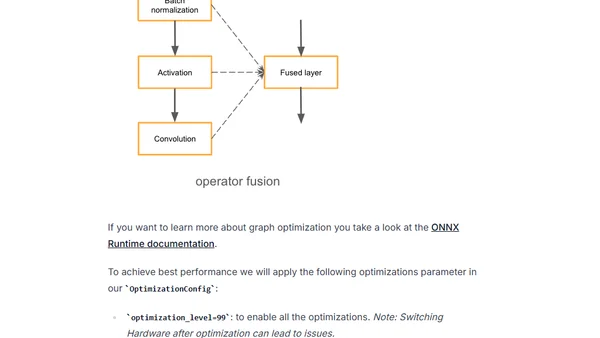

Learn to optimize Hugging Face Transformers models for GPU inference using Optimum and ONNX Runtime to reduce latency.

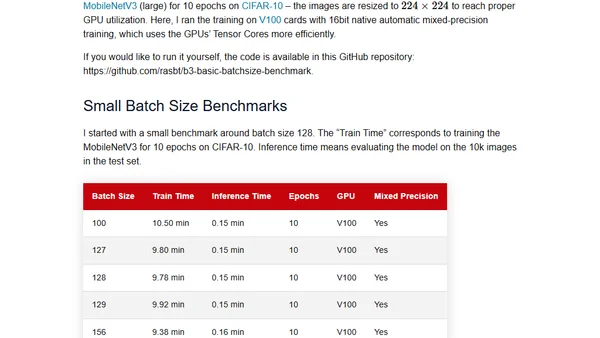

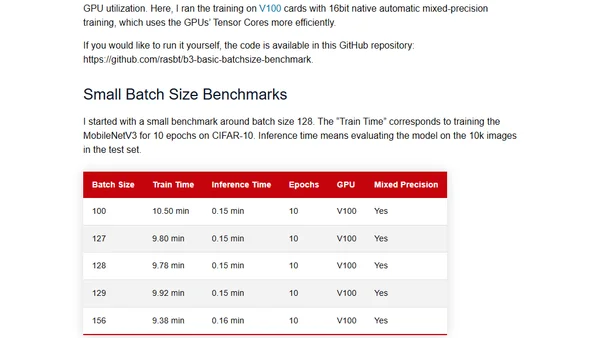

Examines the common practice of using powers of 2 for neural network batch sizes, questioning its necessity with theoretical arguments and practical benchmarks.