Some Math behind Neural Tangent Kernel

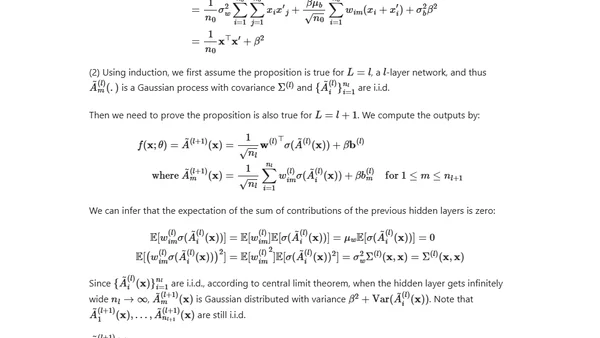

This technical article explores the Neural Tangent Kernel (NTK), a theoretical framework for understanding the training dynamics of over-parameterized neural networks. It gives a math-intensive account of how the NTK explains why wide neural networks consistently converge to a global minimum under gradient descent, and it reviews prerequisite concepts such as Jacobian matrices and differential equations.
