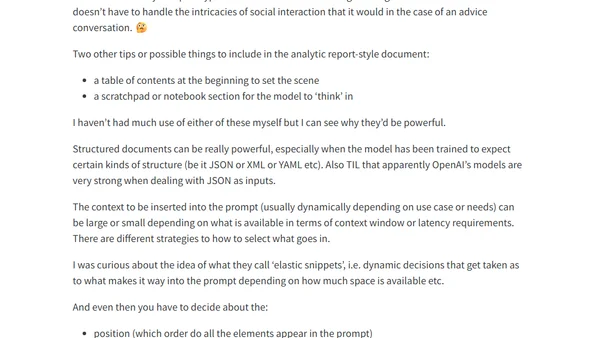

Assembling the Prompt: Notes on ‘Prompt Engineering for LLMs’ ch 6

A summary of Chapter 6 from 'Prompt Engineering for LLMs', covering prompt structure, document templates, and strategies for effective context inclusion.

A developer builds an AI-powered reading companion called Dewey, detailing its features, design, and technical implementation.

A developer revives his old AI startup's brainstorming tool by building a GitHub Copilot Extension, using VS Code's speech features and LLMs.

A curated list of notable LLM and AI research papers published in 2024, providing a resource for those interested in the latest developments.

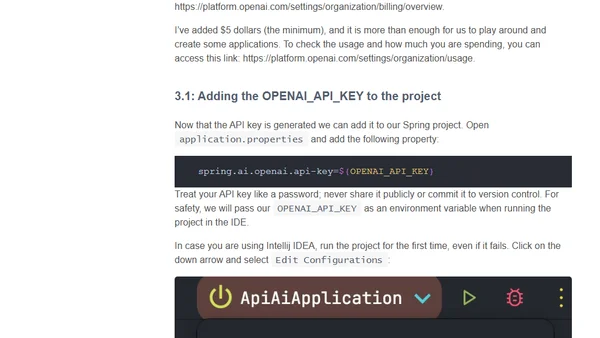

A tutorial on building a simple AI-powered chat client in Java using the Spring AI framework, covering setup, configuration, and provider abstraction.

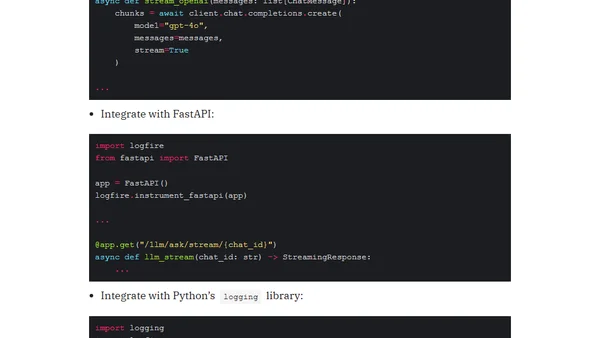

Introducing Logfire, Pydantic's new observability tool for Python, with easy integration for OpenAI LLM calls, FastAPI, and logging.

Explores using Azure AI Inference Service to simplify LLM integration, focusing on the Python SDK and GitHub Marketplace for experimentation.

Argues that building a good search engine is more critical for effective RAG than just using a vector database, as poor retrieval misleads AI.

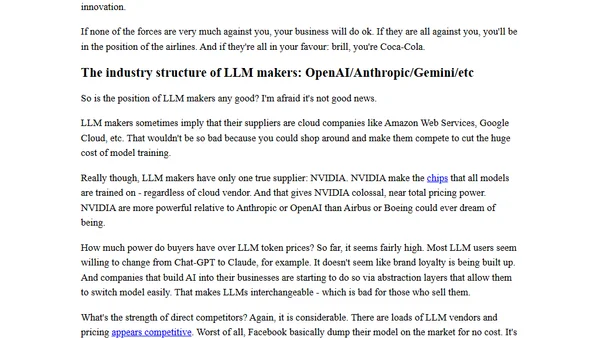

Analyzes why building Large Language Models (LLMs) may be a poor business, comparing the AI industry's structure to historically unprofitable sectors like airlines.

Explores a method using a 'Judging AI' (like o1-preview) to evaluate the performance of other AI models on tasks, relative to human capability.

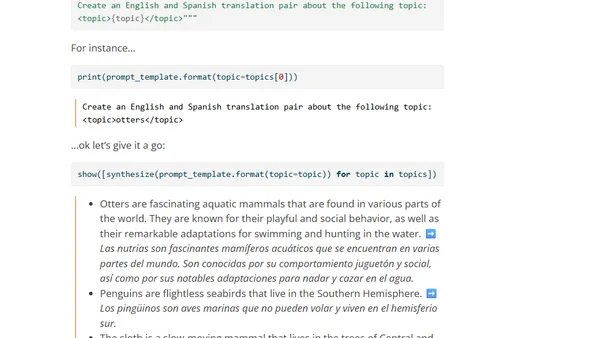

Explores the use of LLMs to generate synthetic data for training AI models, discussing challenges, an experiment with coding data, and a new library.

The article explores how the writing process of AI models can inspire humans to overcome writer's block by adopting a less perfectionist approach.

Explores the philosophical argument that AI, particularly LLMs, possesses a form of understanding and models reality, challenging the notion that LLMs are mere token predictors.

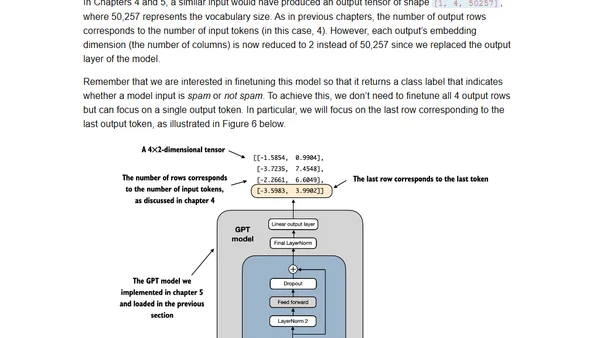

A guide to transforming pretrained LLMs into text classifiers, with insights from the author's new book on building LLMs from scratch.

Building a multi-service document extraction app using LLMs, Azure services, and Diagrid Catalyst for cloud-native architecture.

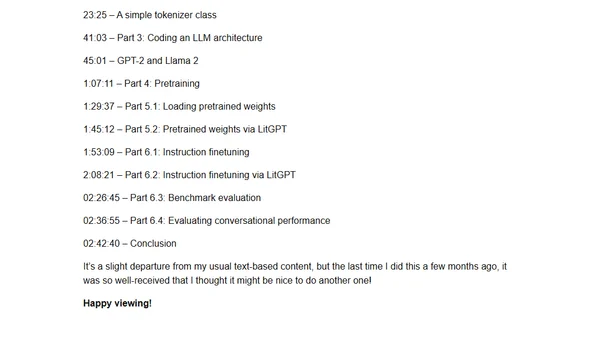

A 3-hour coding workshop teaching how to implement, train, and use Large Language Models (LLMs) from scratch with practical examples.

A 3-hour coding workshop video covering the implementation, training, and use of Large Language Models (LLMs) from scratch.

A philosophical and technical exploration of how Large Language Models (LLMs) transform 'next token prediction' into meaningful answer generation.

A hands-on review of K8sGPT, an AI-powered CLI tool for analyzing and troubleshooting Kubernetes clusters, including setup with local LLMs.

Analyzes the latest pre-training and post-training methodologies used in state-of-the-art LLMs like Qwen 2, Apple's models, Gemma 2, and Llama 3.1.