Why I Believe in SOTA Models Over Custom Ones

Argues for using general SOTA AI models over custom, specialized ones, predicting cheaper, open-source general models will dominate.

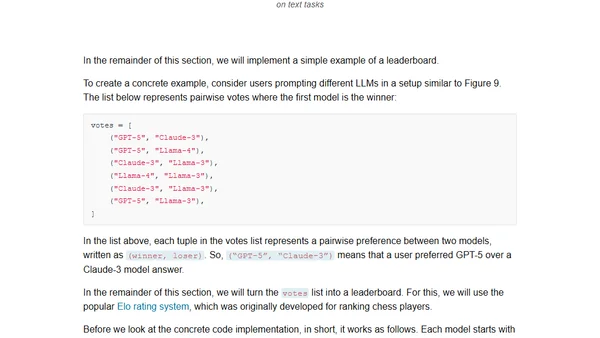

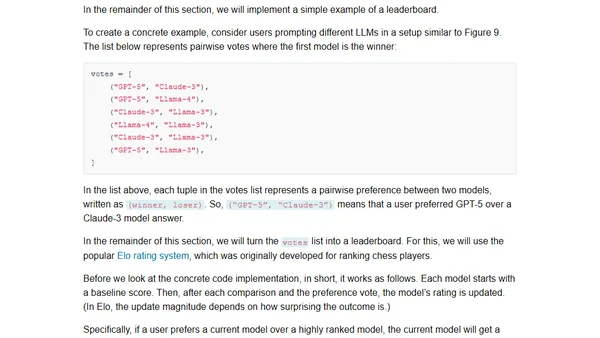

A guide to the four main methods for evaluating Large Language Models (LLMs), with code examples and practical details for implementing each approach from scratch.

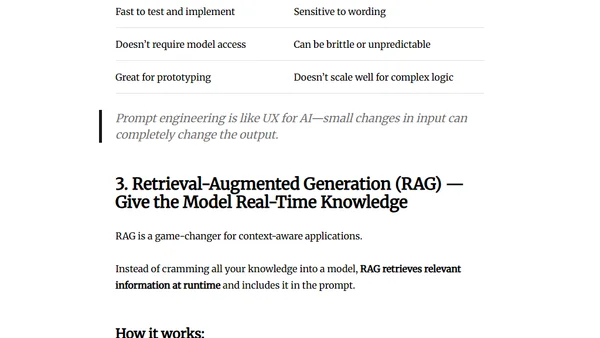

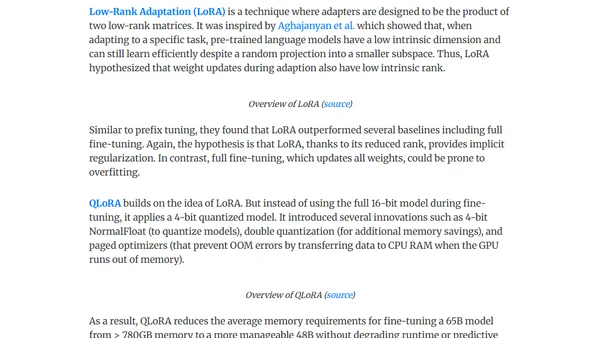

Explores three key methods to enhance LLM performance: fine-tuning, prompt engineering, and RAG, detailing their use cases and trade-offs.

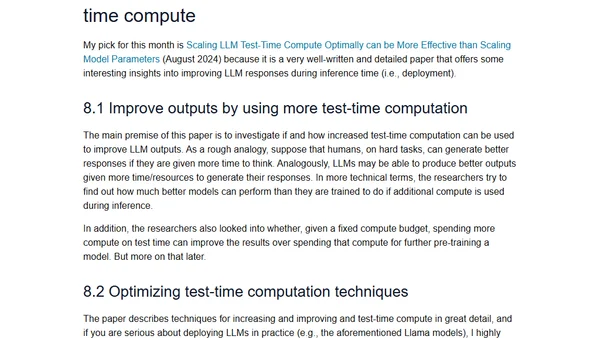

A curated list of 12 influential LLM research papers from 2024, highlighting key advancements in AI and machine learning.

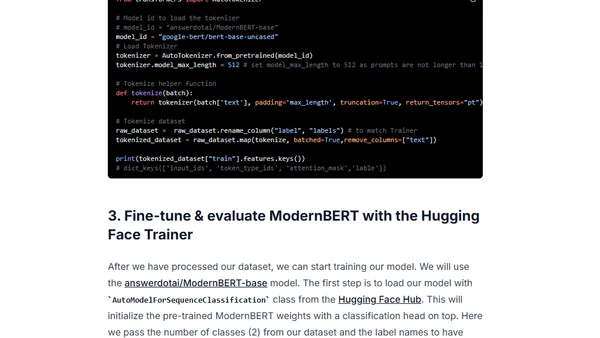

A tutorial on fine-tuning the ModernBERT model for classification tasks to build an efficient LLM router, covering setup, training, and evaluation.

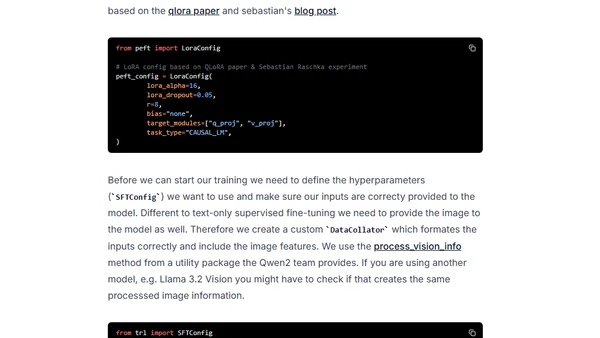

A technical guide on fine-tuning Vision-Language Models (VLMs) using Hugging Face's TRL library for custom applications like image-to-text generation.

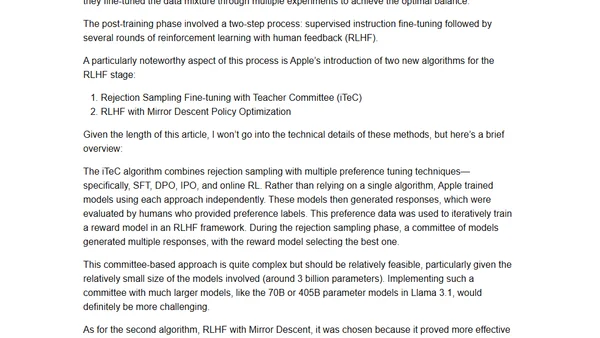

Analyzes the latest pre-training and post-training methodologies used in state-of-the-art LLMs like Qwen 2, Apple's models, Gemma 2, and Llama 3.1.

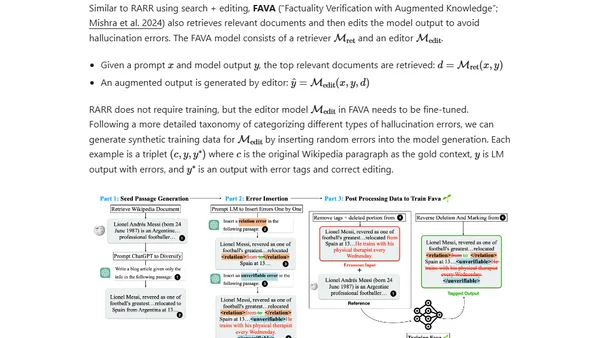

Explores the causes and types of hallucinations in large language models, focusing on extrinsic hallucinations and how training data affects factual accuracy.

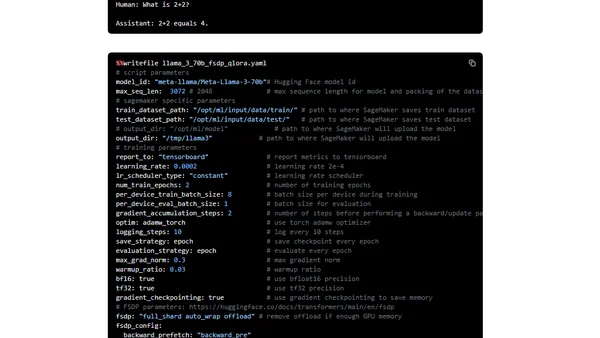

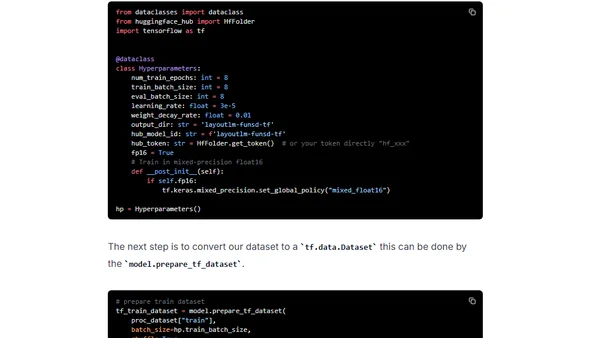

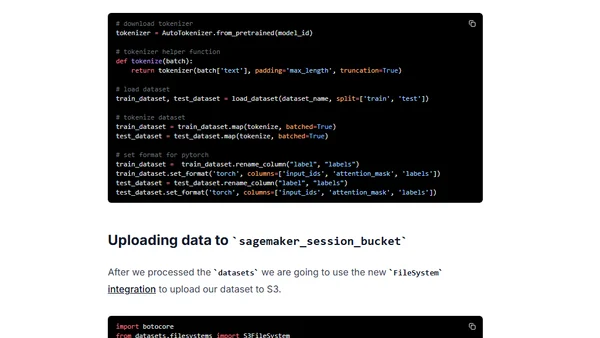

A technical guide on fine-tuning the Llama 3 LLM using PyTorch FSDP and Q-LoRA on Amazon SageMaker for efficient training.

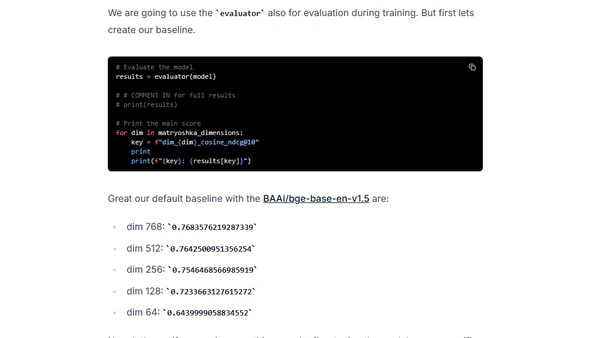

A guide to fine-tuning embedding models for RAG applications using Sentence Transformers 3, featuring Matryoshka Representation Learning for efficiency.

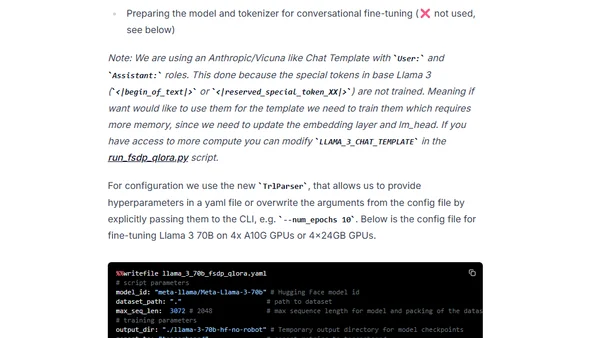

A technical guide on fine-tuning the Llama 3 70B model using PyTorch FSDP and Q-LoRA for efficient training on limited GPU hardware.

A guide to selecting the right LLM architectural patterns (like RAG, fine-tuning, caching) to solve common production challenges such as performance metrics and data constraints.

A practical guide outlining seven key patterns for integrating Large Language Models (LLMs) into robust, production-ready systems and products.

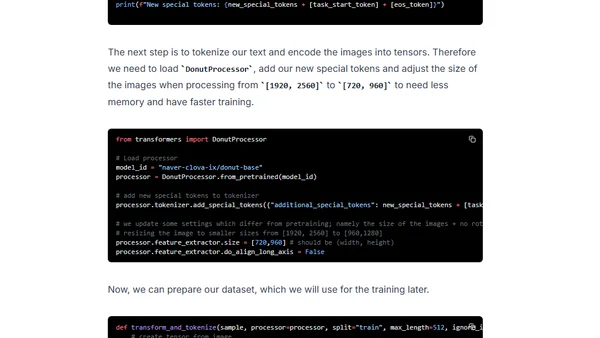

Tutorial on fine-tuning and deploying the Donut model for OCR-free document understanding using Hugging Face and Amazon SageMaker.

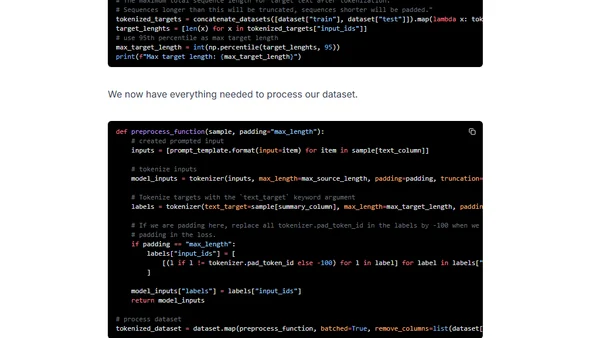

Guide to fine-tuning the large FLAN-T5 XXL model using Amazon SageMaker managed training and DeepSpeed for optimization.

A tutorial on fine-tuning Microsoft's LayoutLM model for document understanding using TensorFlow, Keras, and the FUNSD dataset.

Guide to fine-tuning a Hugging Face BERT model for text classification using Amazon SageMaker and the new Training Compiler to accelerate training.

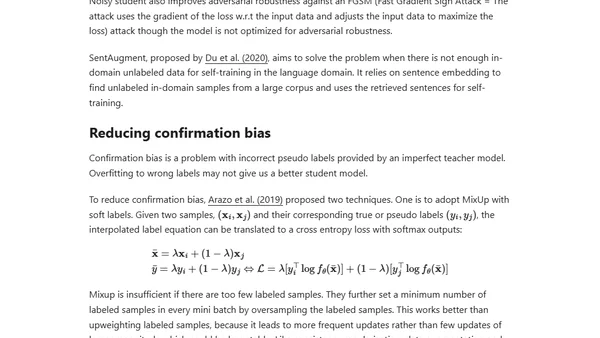

Explores semi-supervised learning techniques for training models when labeled data is scarce, focusing on combining labeled and unlabeled data.

A tutorial on using Hugging Face's API to access and fine-tune OpenAI's GPT-2 model for text generation.