New LLM Pre-training and Post-training Paradigms

Analyzes the latest pre-training and post-training methodologies used in state-of-the-art LLMs like Qwen 2, Apple's models, Gemma 2, and Llama 3.1.

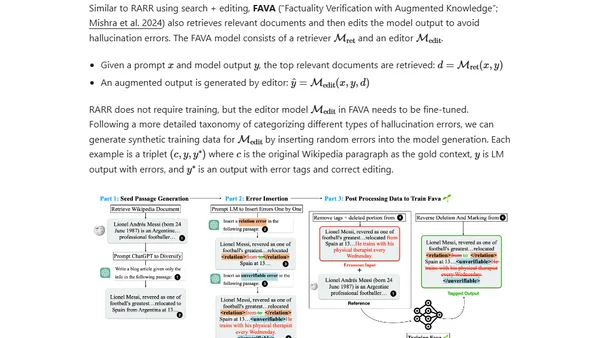

Explores the causes and types of hallucinations in large language models, focusing on extrinsic hallucinations and how training data affects factual accuracy.

Explores semi-supervised learning techniques for training models when labeled data is scarce, focusing on combining labeled and unlabeled data.