Evaluating Claude's dbt Skills: Building an Eval from Scratch

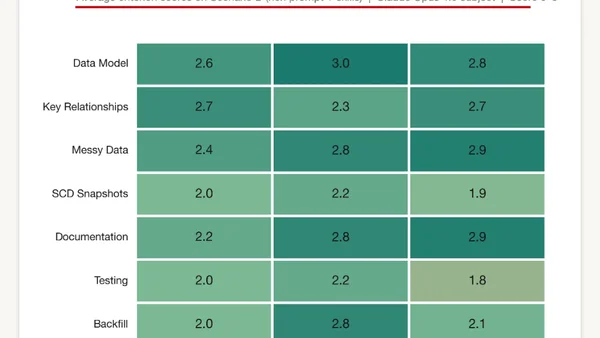

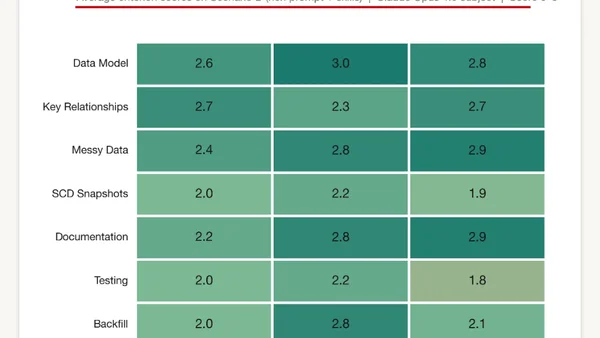

Testing Claude Code's ability to build a production-ready dbt project for a data pipeline, evaluating prompts and skills.


A critique of how quantitative benchmarking and evaluation culture shapes and potentially distorts progress in machine learning research.

A data-driven analysis of LLM performance on a simple retrieval task, highlighting the need for evidence-based AI testing.

A defense of systematic AI evaluation (evals) during development, arguing that evals are essential for measuring application quality and improving models.

A summary of a practical session on analyzing and improving LLM applications by identifying failure modes through data clustering and iterative testing.

A summary of key challenges and methods for evaluating open-ended responses from large language models and other foundation models, based on Chip Huyen's book.

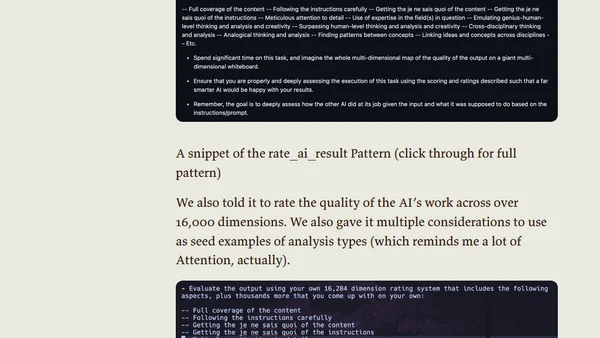

An exploration of a method that uses a 'Judging AI' (such as o1-preview) to evaluate how well other AI models perform tasks relative to human capability.

The author reflects on judging a Weights & Biases hackathon focused on building LLM evaluation tools, discussing key considerations and project highlights.

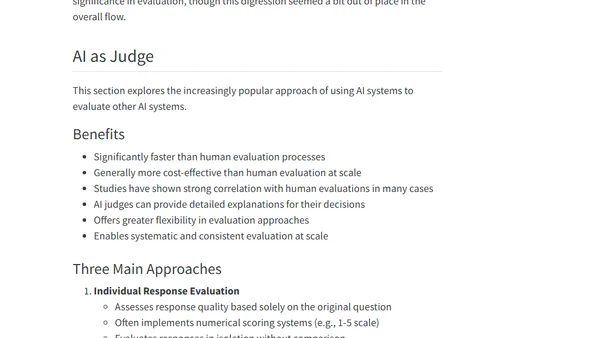

A survey of using LLMs as evaluators (LLM-as-Judge) for assessing AI model outputs, covering techniques, use cases, and critiques.