DeepSeek V4 - almost on the frontier, a fraction of the price

DeepSeek V4 preview models offer frontier-level performance at a fraction of the cost, with up to 1M token context and open weights.

A tutorial on building a chatbot UI with Streamlit to interact with a language model inference service deployed on Azure Kubernetes Service (AKS) using KAITO.

A technical guide to installing KAITO v0.8.x on Azure Kubernetes Service to run the Phi-4 language model for AI inference.

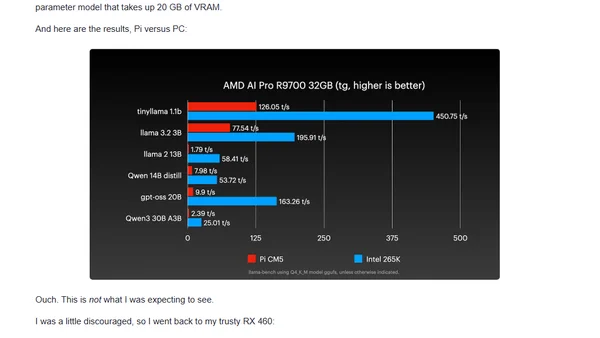

Tests GPU performance on a Raspberry Pi 5 against a desktop PC for transcoding, AI, and multi-GPU tasks, with surprisingly efficient results.

Google's report details the measured energy, emissions, and water consumption of a single Gemini AI text prompt in production.

A guide to deploying and comparing open-weight LLM families (DeepSeek, Falcon, Llama, etc.) using the KAITO operator on Azure Kubernetes Service (AKS).

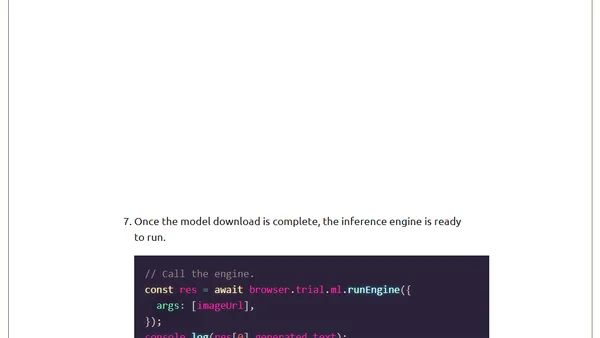

Mozilla's experiment enabling AI model inference directly in Firefox web extensions using Transformers.js and ONNX, with a practical example.

Explores using Azure AI Inference Service to simplify LLM integration, focusing on the Python SDK and GitHub Marketplace for experimentation.

A developer's hands-on test of NVIDIA's Nemotron LLM for coding tasks, detailing setup on a cloud GPU server and initial impressions.