Efficiently fine-tune Llama 3 with PyTorch FSDP and Q-Lora

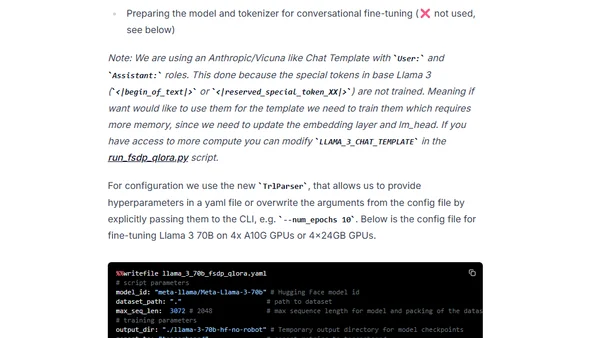

This article provides a step-by-step tutorial for efficiently fine-tuning large language models such as Meta's Llama 3 70B. It explains how to combine PyTorch FSDP (Fully Sharded Data Parallel) and Q-Lora with Hugging Face's TRL and PEFT libraries to reduce memory requirements and enable training on consumer-grade GPUs. The guide covers environment setup, dataset preparation, and the fine-tuning process.
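To give a rough sense of why this combination lowers memory requirements, here is a back-of-the-envelope sketch of the memory needed for the model weights alone. It is an illustration under stated assumptions, not a figure from the article: the helper names are hypothetical, the count of four GPUs is assumed, and the estimate ignores activations, LoRA adapter weights, optimizer state, and quantization overhead, all of which add to the real footprint.

```python
# Rough estimate of weight memory only; real training needs more than this.

def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Memory for the raw weights in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


def per_gpu_gb(total_gb: float, num_gpus: int) -> float:
    """Even split of the weights across GPUs, as full sharding aims for."""
    return total_gb / num_gpus


NUM_PARAMS = 70e9   # Llama 3 70B
NUM_GPUS = 4        # assumption for illustration only

bf16_gb = weight_memory_gb(NUM_PARAMS, 16)      # 16-bit weights
four_bit_gb = weight_memory_gb(NUM_PARAMS, 4)   # 4-bit quantized weights
sharded_gb = per_gpu_gb(four_bit_gb, NUM_GPUS)  # 4-bit weights, sharded

print(f"16-bit weights:        {bf16_gb:.2f} GB")
print(f"4-bit weights:         {four_bit_gb:.2f} GB")
print(f"4-bit, per GPU (of {NUM_GPUS}): {sharded_gb:.2f} GB")
```

Quantizing the weights to 4 bits cuts the weight footprint to a quarter of its 16-bit size, and sharding divides what remains across the available devices. The two savings multiply, which is the intuition behind pairing Q-Lora with FSDP.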
