Hugging Face Transformers BERT fine-tuning using Amazon SageMaker and Training Compiler

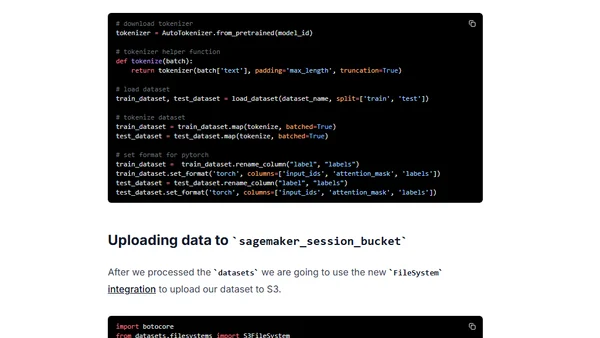

This technical tutorial demonstrates how to fine-tune a pre-trained Hugging Face BERT model for multi-class text classification on the emotion dataset. It uses Amazon SageMaker together with the newly announced SageMaker Training Compiler, which optimizes deep learning models to accelerate training on GPU instances and can thereby reduce training costs. The article covers setup instructions, preprocessing with the `datasets` library, and environment configuration.
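As a rough illustration of the environment configuration step, the sketch below assembles the arguments for a SageMaker Hugging Face training job with Training Compiler enabled. It is a minimal sketch under stated assumptions, not the tutorial's actual code. The script name `train.py`, the `./scripts` directory, the hyperparameter keys, the `bert-base-uncased` checkpoint, and the `ml.p3.2xlarge` instance type are all hypothetical placeholders. Framework versions are deliberately left to the caller, since the supported combinations depend on the SageMaker SDK release.

```python
def build_estimator_kwargs(role, instance_type="ml.p3.2xlarge",
                           epochs=3, train_batch_size=32,
                           model_id="bert-base-uncased"):
    """Collect estimator arguments in a plain dict (no AWS calls made here)."""
    # Hyperparameter names are hypothetical: they must match whatever
    # arguments the training script (assumed here to be train.py) parses.
    hyperparameters = {
        "epochs": epochs,
        "train_batch_size": train_batch_size,
        "model_id": model_id,
    }
    return {
        "entry_point": "train.py",   # assumed script name
        "source_dir": "./scripts",   # assumed script location
        "instance_type": instance_type,
        "instance_count": 1,
        "role": role,
        "hyperparameters": hyperparameters,
    }


def create_estimator(role, transformers_version, pytorch_version, py_version):
    """Build a HuggingFace estimator with Training Compiler switched on.

    Requires the sagemaker SDK and AWS credentials, so the import is kept
    local and the config helper above stays usable without either.
    """
    from sagemaker.huggingface import HuggingFace, TrainingCompilerConfig

    return HuggingFace(
        compiler_config=TrainingCompilerConfig(),
        transformers_version=transformers_version,
        pytorch_version=pytorch_version,
        py_version=py_version,
        **build_estimator_kwargs(role),
    )
```

Calling `fit()` on the returned estimator with S3 input paths would then start the training job. Keeping the argument dict separate from the SDK call makes the configuration easy to inspect or log before any AWS resources are created.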
