Claude Token Counter, now with model comparisons

Upgraded Claude Token Counter with model comparison, showing token inflation in Opus 4.7 vs 4.6.

Upgrade to Claude Token Counter adds model comparison, revealing token inflation and cost impacts for Opus 4.7.

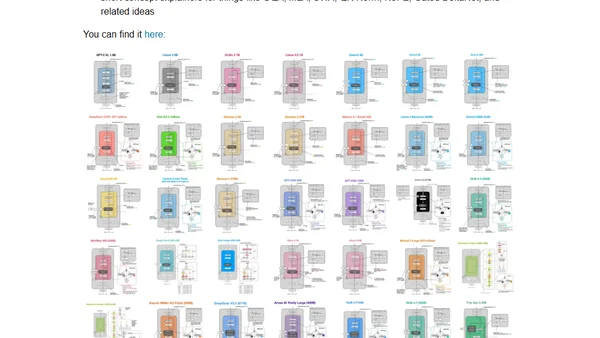

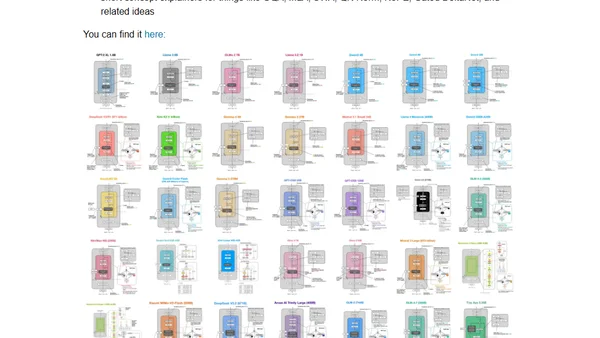

A gallery showcasing and comparing architecture diagrams and technical details of recent open-weight Large Language Models (LLMs).

Analysis of Claude Opus 4.5 LLM release and the growing difficulty in evaluating incremental improvements between AI models.

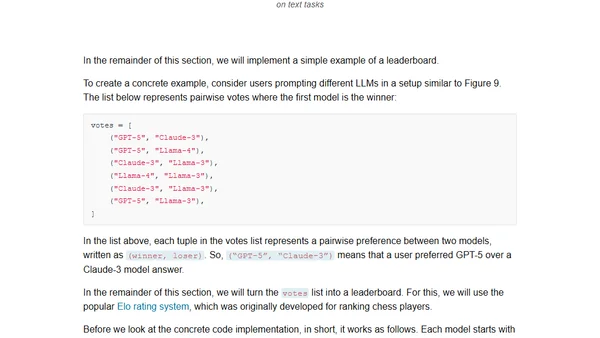

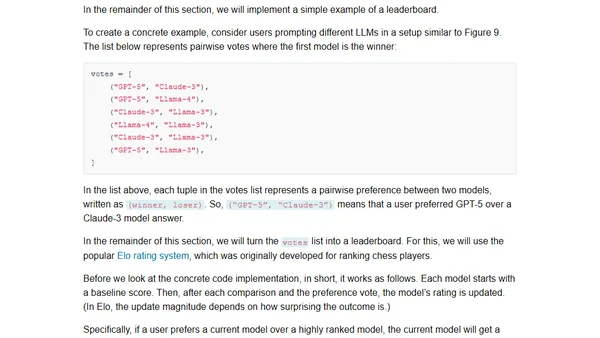

A guide to the four main methods for evaluating Large Language Models, including code examples and practical implementation details.

Explores four main methods for evaluating Large Language Models (LLMs), including code examples for implementing each approach from scratch.

Explains why AIC comparisons between discrete and continuous statistical models are invalid, using examples with binomial and Normal distributions.

A hands-on review of Google's updated Gemini Deep Research tool with the 2.5 Pro model, covering its features, usability, and areas for improvement.

A detailed comparison of Anthropic's Claude 3 and the newer Claude 3.5 Sonnet AI models, covering performance, capabilities, and benchmarks.

A developer compares 8 LLMs on a custom retrieval task using medical transcripts, analyzing performance on simple to complex questions.