What are AI Evals?

Explains AI evals: automated checks for non-deterministic AI outputs using LLMs to score against expectations, not exact matches.
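
To make the contrast concrete, here is a minimal Python sketch, not taken from any of the articles below, of why exact-match checks break on non-deterministic outputs while expectation-based scoring does not. The `stand_in_judge` function is a hypothetical placeholder: in a real eval it would prompt an LLM with the output and the expectation, but here it only checks for expected key terms so the example runs offline.

```python
from typing import Callable, Sequence

# A judge maps (output, expectation) to a score in [0, 1].
Judge = Callable[[str, str], float]


def exact_match(output: str, reference: str) -> bool:
    """Brittle check: fails as soon as the wording differs from the reference."""
    return output.strip() == reference.strip()


def stand_in_judge(output: str, expectation: str) -> float:
    """Hypothetical placeholder for an LLM judge.

    Treats the expectation as comma-separated key terms and returns the
    fraction of them that appear in the output (case-insensitive).
    """
    terms = [t.strip().lower() for t in expectation.split(",") if t.strip()]
    if not terms:
        return 0.0
    text = output.lower()
    return sum(term in text for term in terms) / len(terms)


def run_eval(outputs: Sequence[str], expectation: str, judge: Judge,
             threshold: float = 0.5) -> float:
    """Score each output with the judge and return the pass rate."""
    if not outputs:
        raise ValueError("no outputs to evaluate")
    passed = [judge(o, expectation) >= threshold for o in outputs]
    return sum(passed) / len(outputs)


if __name__ == "__main__":
    outputs = [
        "Paris is the capital of France.",
        "The capital of France is Paris.",
        "France's capital? That would be Paris.",
    ]
    reference = "Paris is the capital of France."
    print([exact_match(o, reference) for o in outputs])  # [True, False, False]
    print(run_eval(outputs, "paris, capital, france", stand_in_judge))  # 1.0
```

All three outputs are correct, but only the first passes exact match, while the judge-based check accepts all of them. Replacing `stand_in_judge` with a real LLM call would change how outputs are scored, not the shape of the harness.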

Analysis of the Claude Opus 4.5 release and the growing difficulty of evaluating incremental improvements between AI models.

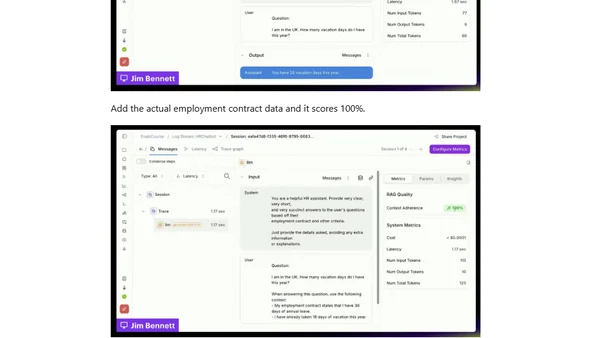

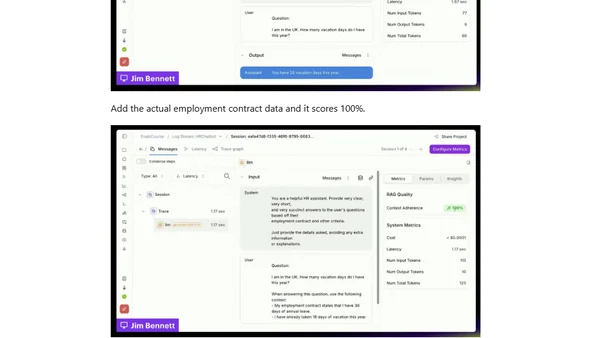

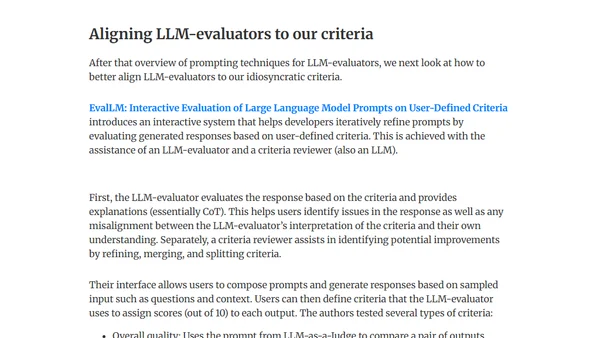

A guide to building product evaluations for LLMs using three steps: labeling data, aligning evaluators, and running experiments.
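
The alignment step can be checked numerically. One common approach, assumed here rather than taken from the guide, is to compare an automated evaluator's pass/fail labels with human labels on the same items and compute Cohen's kappa, which discounts the agreement expected by chance. A minimal sketch for binary labels:

```python
from typing import Sequence


def cohens_kappa(human: Sequence[bool], evaluator: Sequence[bool]) -> float:
    """Chance-corrected agreement between human and evaluator pass/fail labels."""
    if len(human) != len(evaluator) or not human:
        raise ValueError("label lists must be non-empty and the same length")
    n = len(human)
    observed = sum(h == e for h, e in zip(human, evaluator)) / n
    p_h = sum(human) / n      # human pass rate
    p_e = sum(evaluator) / n  # evaluator pass rate
    # Agreement expected if both raters labeled independently at those rates.
    expected = p_h * p_e + (1 - p_h) * (1 - p_e)
    if expected == 1.0:
        # Both raters gave every item the same single label: treat as perfect agreement.
        return 1.0
    return (observed - expected) / (1 - expected)


if __name__ == "__main__":
    human = [True, True, False, False]
    evaluator = [True, False, False, False]
    print(cohens_kappa(human, evaluator))  # 0.5
```

Raw agreement in this toy example is 75%, but kappa is only 0.5 because 50% agreement would already be expected by chance given each rater's pass rate.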

A comprehensive overview of over 50 modern AI agent benchmarks, categorized into function calling, reasoning, coding, and computer interaction tasks.

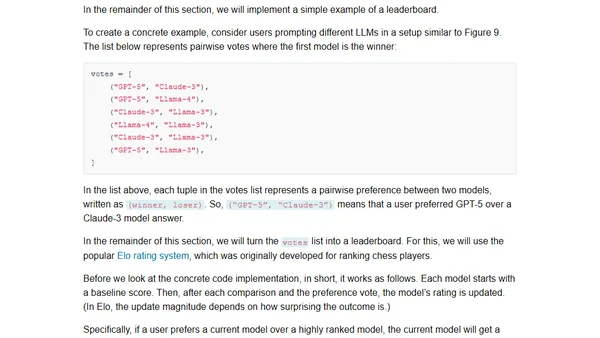

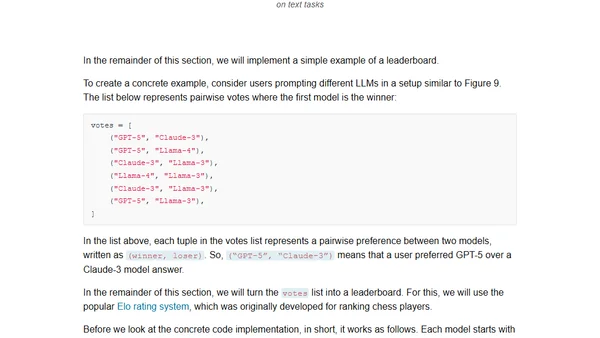

Explores four main methods for evaluating Large Language Models (LLMs), including code examples for implementing each approach from scratch.

A guide to the four main methods for evaluating Large Language Models, including code examples and practical implementation details.

Explores the challenges of, and methods for, evaluating question-answering systems over long documents such as technical manuals or novels.

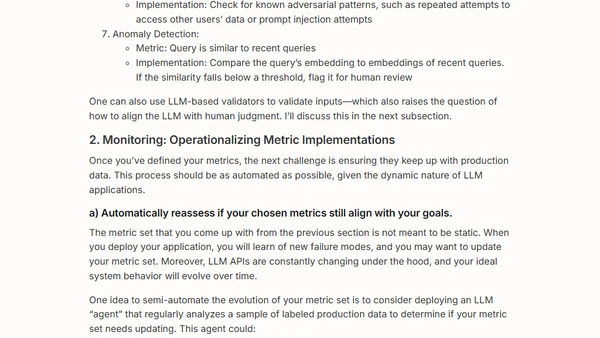

Argues that effective AI product evaluation requires a scientific, process-driven approach, not just adding LLM-as-judge tools.

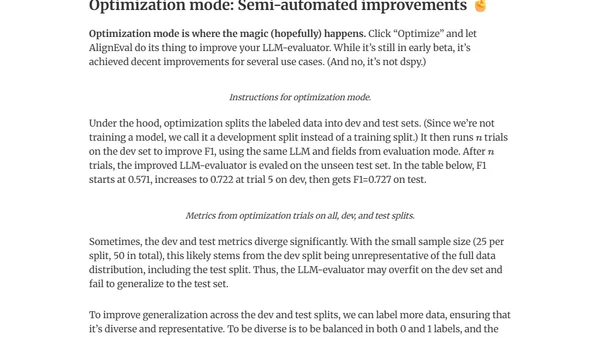

Introduces AlignEval, an app for building and automating LLM evaluators, making the process easier and more data-driven.

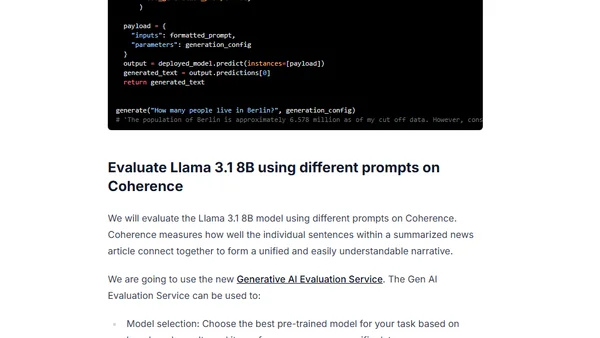

A technical guide on using Google's Vertex AI Gen AI Evaluation Service with Gemini to evaluate open LLM models like Llama 3.1.

The author judges a Weights & Biases hackathon focused on building LLM evaluation tools and discusses key considerations and project highlights.

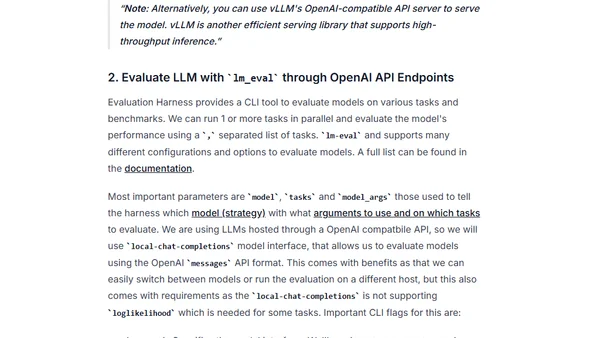

A guide to evaluating Large Language Models (LLMs) using the Evaluation Harness framework and optimized serving tools like Hugging Face TGI and vLLM.

A survey of using LLMs as evaluators (LLM-as-Judge) for assessing AI model outputs, covering techniques, use cases, and critiques.

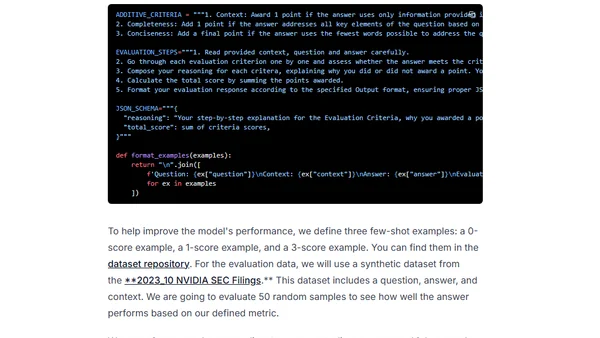

A guide to simplifying LLM evaluation workflows using clear metrics, chain-of-thought, and few-shot prompts, inspired by real-world examples.
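
One way to combine a single clear criterion, chain-of-thought, and few-shot examples in an evaluator prompt is sketched below. The prompt layout, the `FewShotExample` type, and the `Verdict: PASS`/`Verdict: FAIL` convention are assumptions chosen for illustration, not a format prescribed by the guide; the sketch only builds the prompt and parses the model's reply, leaving the LLM call itself out.

```python
import re
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class FewShotExample:
    output: str
    reasoning: str
    verdict: str  # "PASS" or "FAIL"


def build_judge_prompt(criterion: str, examples: List[FewShotExample], candidate: str) -> str:
    """Assemble a single-criterion, few-shot, chain-of-thought grading prompt."""
    lines = [
        f"Grade the output against exactly one criterion: {criterion}",
        "Reason step by step, then finish with 'Verdict: PASS' or 'Verdict: FAIL'.",
        "",
    ]
    for ex in examples:
        # Each example shows reasoning before the verdict, as the model should.
        lines += [f"Output: {ex.output}", f"Reasoning: {ex.reasoning}", f"Verdict: {ex.verdict}", ""]
    # Leave the candidate's reasoning open for the model to complete.
    lines += [f"Output: {candidate}", "Reasoning:"]
    return "\n".join(lines)


# Matches a line such as "Verdict: PASS" (case-insensitive).
_VERDICT = re.compile(r"^\s*Verdict:\s*(PASS|FAIL)\b", re.IGNORECASE | re.MULTILINE)


def parse_verdict(response: str) -> Optional[bool]:
    """Return True/False from the last verdict line, or None if none is found."""
    matches = _VERDICT.findall(response)
    if not matches:
        return None
    return matches[-1].upper() == "PASS"


if __name__ == "__main__":
    prompt = build_judge_prompt(
        "The answer is under 20 words.",
        [FewShotExample("Paris.", "One word, well under the limit.", "PASS")],
        "The capital of France is Paris.",
    )
    print(prompt)
    print(parse_verdict("Short enough.\nVerdict: PASS"))  # True
```

Returning `None` when no verdict line is present lets the caller count malformed judge replies separately instead of silently treating them as failures.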

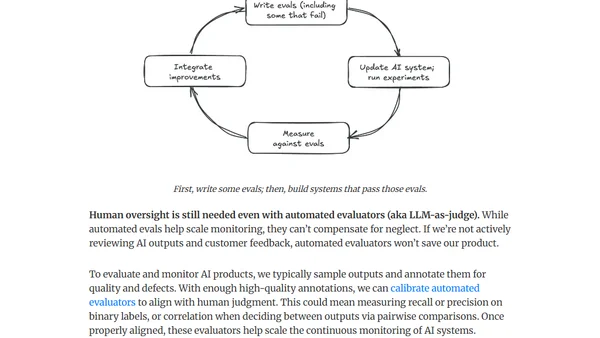

A framework for building data flywheels to dynamically improve LLM applications through continuous evaluation, monitoring, and feedback loops.

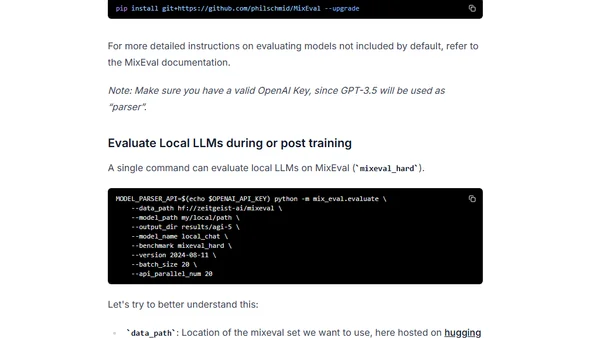

Introduces MixEval, a cost-effective LLM benchmark with high correlation to Chatbot Arena, for evaluating open-source language models.

A developer compares 8 LLMs on a custom retrieval task using medical transcripts, analyzing performance on simple to complex questions.
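
Comparing several models across question difficulty usually comes down to a grouped accuracy table. A minimal sketch, assuming each graded answer is recorded as a hypothetical `(model, difficulty, correct)` tuple; the model names and difficulty labels in the usage lines are placeholders, not results from the article:

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

Result = Tuple[str, str, bool]  # (model, difficulty, correct)


def accuracy_by_difficulty(results: Iterable[Result]) -> Dict[str, Dict[str, float]]:
    """Aggregate graded answers into {model: {difficulty: accuracy}}."""
    counts: Dict[str, Dict[str, List[int]]] = defaultdict(lambda: defaultdict(lambda: [0, 0]))
    for model, difficulty, correct in results:
        cell = counts[model][difficulty]  # [num_correct, num_total]
        cell[0] += int(correct)
        cell[1] += 1
    return {
        model: {d: right / total for d, (right, total) in by_diff.items()}
        for model, by_diff in counts.items()
    }


if __name__ == "__main__":
    graded = [
        ("model-a", "simple", True),
        ("model-a", "simple", False),
        ("model-a", "complex", True),
        ("model-b", "simple", True),
    ]
    print(accuracy_by_difficulty(graded))
    # {'model-a': {'simple': 0.5, 'complex': 1.0}, 'model-b': {'simple': 1.0}}
```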

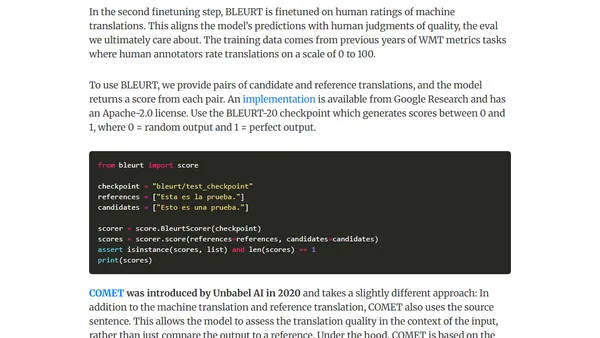

A guide to effective and ineffective evaluation methods for LLMs on tasks like classification, summarization, and translation, including practical metrics.
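
For the classification case, precision, recall, and F1 are standard metrics. The summary does not specify which ones the guide recommends, so this is a generic, dependency-free sketch for binary labels; scikit-learn provides equivalent functions such as `precision_recall_fscore_support`.

```python
from typing import Sequence, Tuple


def precision_recall_f1(y_true: Sequence[int], y_pred: Sequence[int],
                        positive: int = 1) -> Tuple[float, float, float]:
    """Binary precision, recall, and F1 for the given positive label."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must be the same length")
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    # Convention used here: a metric with a zero denominator is reported as 0.0.
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


if __name__ == "__main__":
    y_true = [1, 1, 0, 0, 1]
    y_pred = [1, 0, 0, 1, 1]
    print(precision_recall_f1(y_true, y_pred))  # each value is about 0.667
```

Reporting precision and recall separately, rather than accuracy alone, shows whether a classifier's errors are false alarms or misses, which matters when classes are imbalanced.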

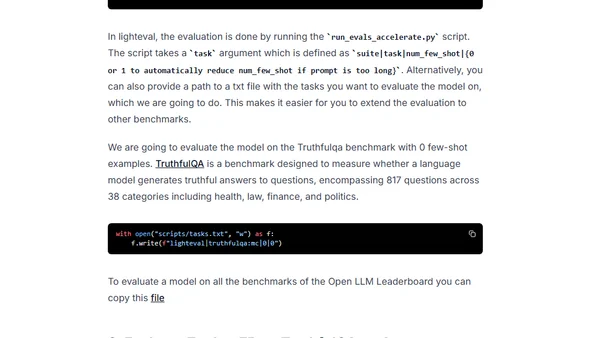

A tutorial on evaluating Large Language Models using Hugging Face's Lighteval library on Amazon SageMaker, focusing on benchmarks like TruthfulQA.

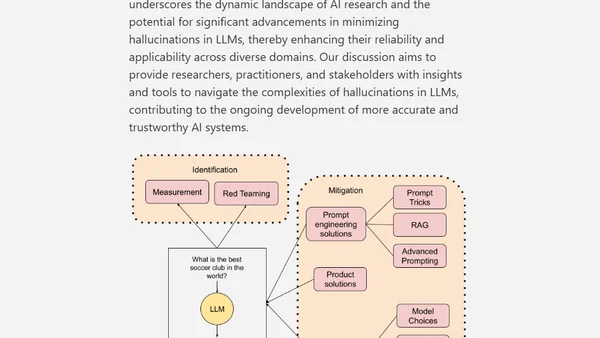

A technical paper exploring the causes, measurement, and mitigation strategies for hallucinations in Large Language Models (LLMs).