Notes on ‘AI Engineering’ (Chip Huyen) chapter 7: Finetuning

A summary of Chip Huyen's chapter on fine-tuning, which argues that fine-tuning is a last resort after prompt engineering and RAG and details its technical and organizational complexities.

A curated list of 12 influential LLM research papers from 2024, highlighting key advancements in AI and machine learning.

A curated list of 12 influential LLM research papers, one for each month of 2024, covering topics like Mixture of Experts, LoRA, and scaling laws.

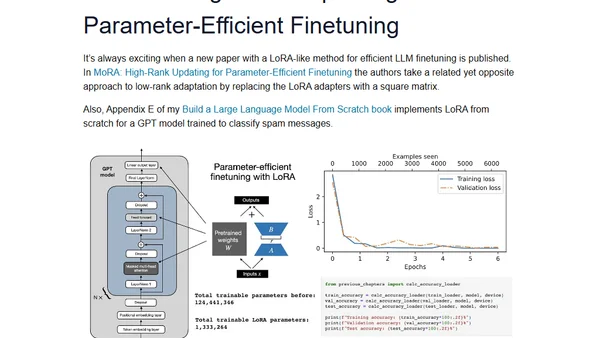

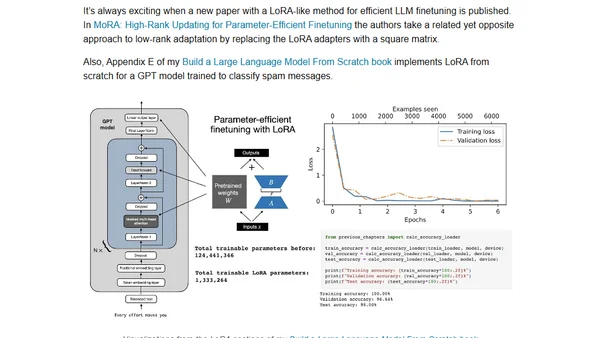

Analysis of new LLM research on instruction masking and LoRA finetuning methods, with practical insights for developers.

Explores new research on instruction masking and LoRA finetuning techniques for improving large language models (LLMs).

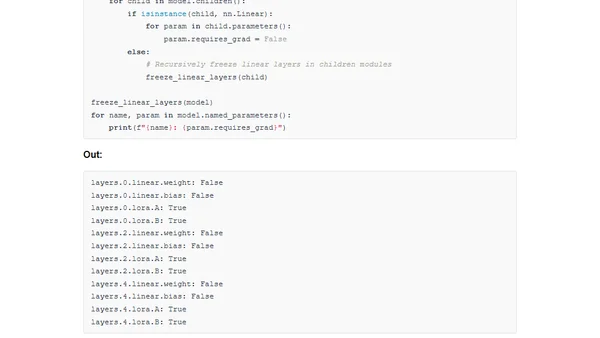

A guide to implementing LoRA and the new DoRA method from scratch in PyTorch for efficient model finetuning.

A technical guide to implementing DoRA, a new low-rank adaptation method for efficient model finetuning, from scratch in PyTorch.

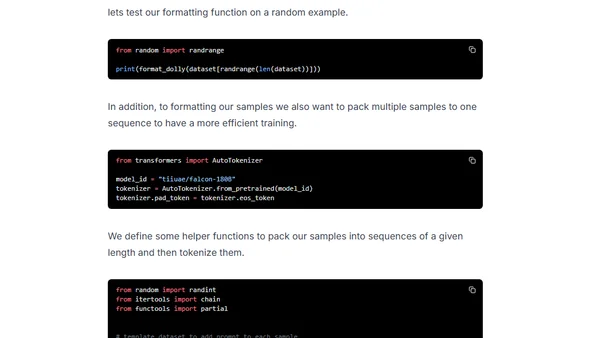

A technical guide on fine-tuning the massive Falcon 180B language model using DeepSpeed ZeRO, LoRA, and Flash Attention for efficient training.

A guide to efficiently finetuning Falcon LLMs using parameter-efficient methods like LoRA and Adapters to reduce compute time and cost.

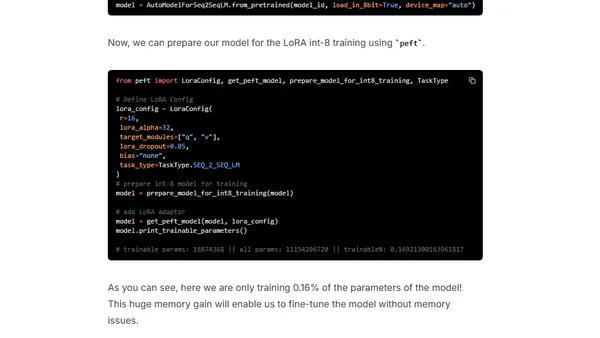

Learn about Low-Rank Adaptation (LoRA), a parameter-efficient method for finetuning large language models with reduced computational costs.

Explains Low-Rank Adaptation (LoRA), a parameter-efficient technique for fine-tuning large language models to reduce computational costs.

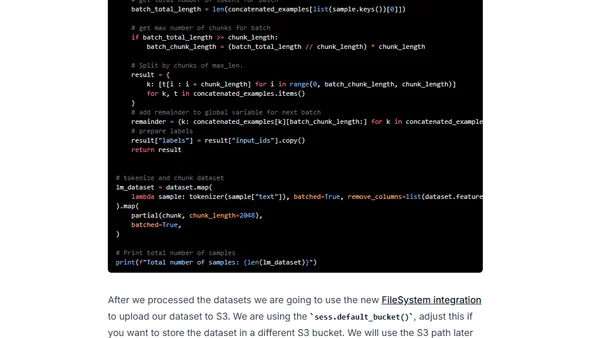

A technical guide on fine-tuning the BLOOMZ language model using PEFT and LoRA techniques, then deploying it on Amazon SageMaker.

A technical guide on fine-tuning the large FLAN-T5 XXL model efficiently using LoRA and Hugging Face libraries on a single GPU.