How Can We Make Robotics More like Generative Modeling?

A roboticist argues for scaling robotics research like generative AI, focusing on data quality and iteration over algorithms for better generalization.

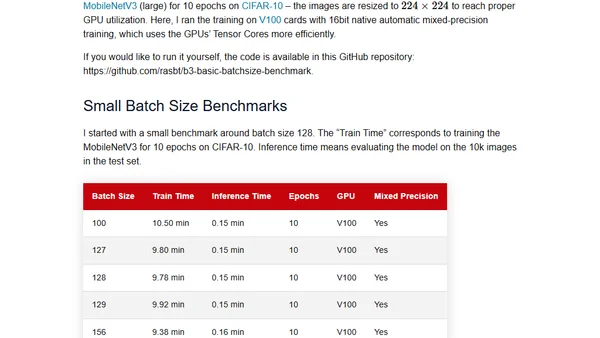

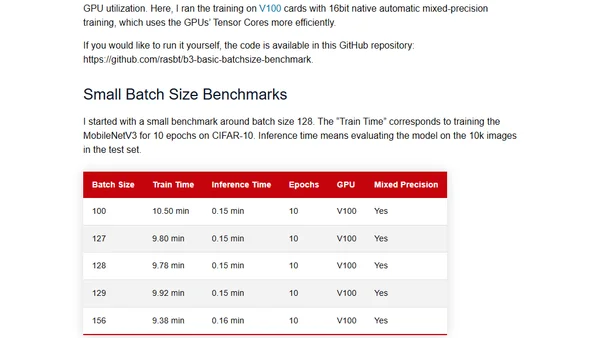

Examines the common practice of using powers of 2 for neural network batch sizes, questioning its necessity with theoretical insights and practical benchmarks.

A guide on converting Hugging Face Transformers models to the ONNX format using the Optimum library for optimized deployment.

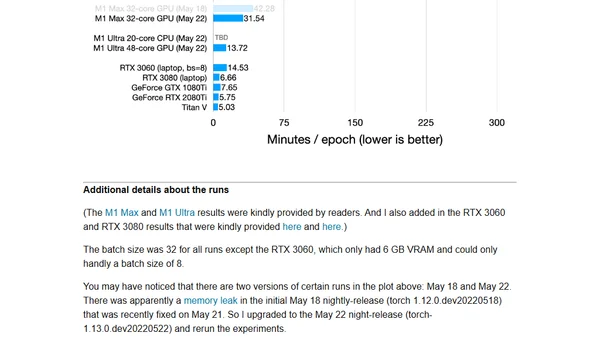

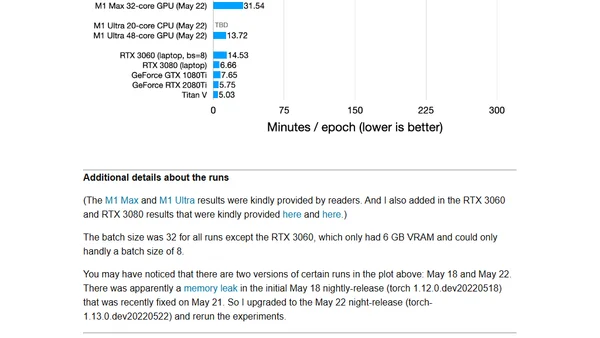

A hands-on review and benchmark of PyTorch's new official GPU support for Apple's M1 chips, covering installation steps and performance on deep learning tasks.

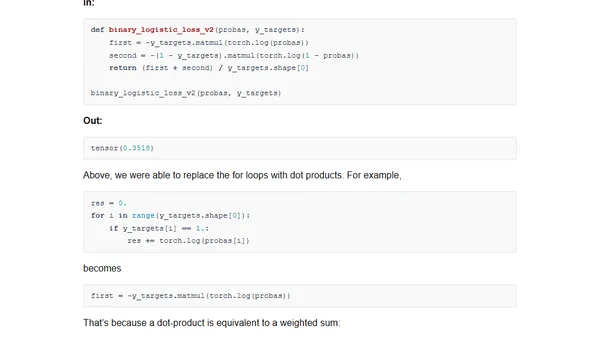

Explains cross-entropy loss in PyTorch for binary and multiclass classification, highlighting common implementation pitfalls and best practices.

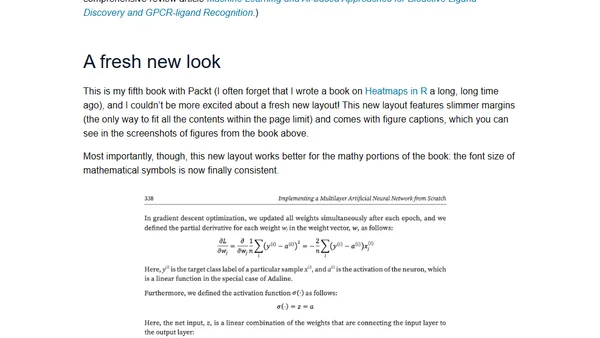

Announces a new machine learning book covering fundamentals with scikit-learn and deep learning with PyTorch, including neural networks from scratch and reinforcement learning.

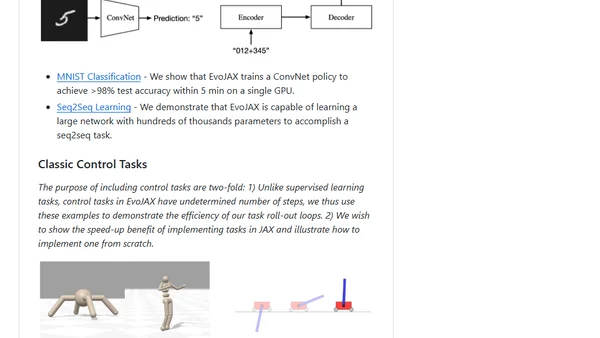

EvoJAX is a hardware-accelerated neuroevolution toolkit built on JAX for running parallel evolution experiments on TPUs/GPUs.

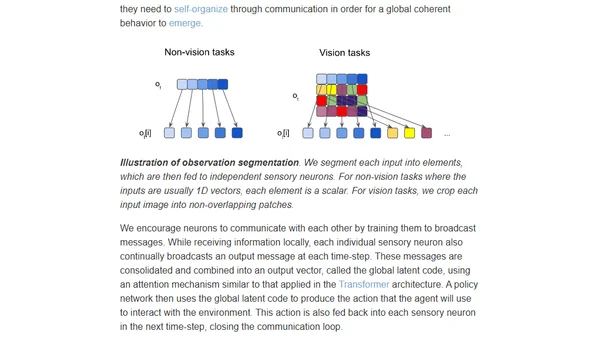

Introduces permutation-invariant neural networks for RL agents, enabling robustness to shuffled, noisy, or incomplete sensory inputs.

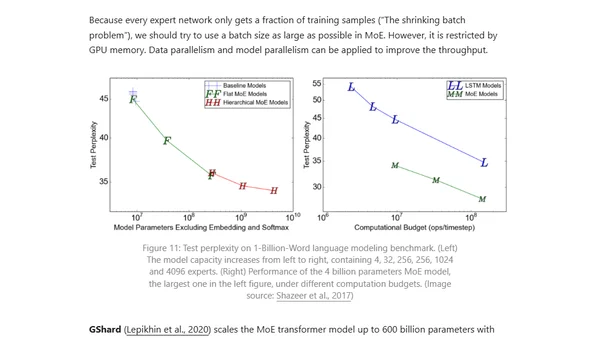

Explores parallelism techniques and memory optimization strategies for training massive neural networks across multiple GPUs.

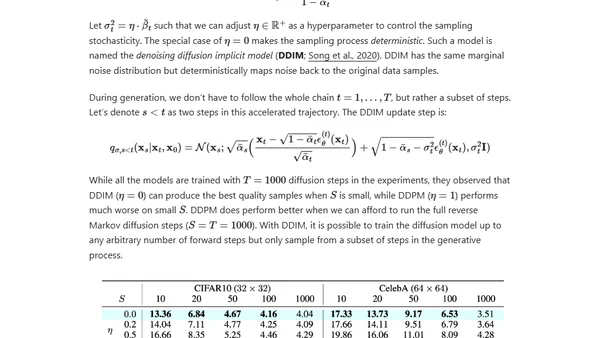

An in-depth technical explanation of diffusion models, a class of generative AI models that create data by reversing a noise-adding process.

A comprehensive deep learning course with PyTorch tutorials, covering fundamentals, neural networks, computer vision, and advanced topics such as CNNs and generative models like GANs.

A detailed review of the book 'Deep Learning with PyTorch', covering its structure, content, and suitability for students, beginners, and practitioners in deep learning.

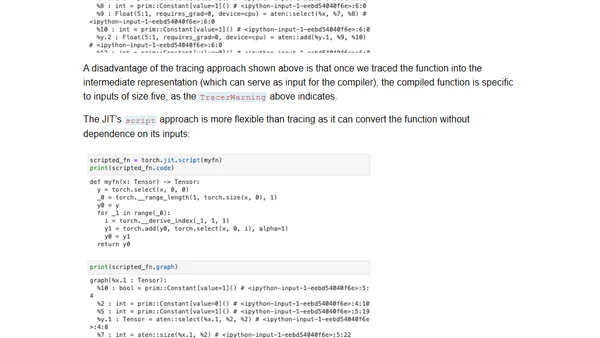

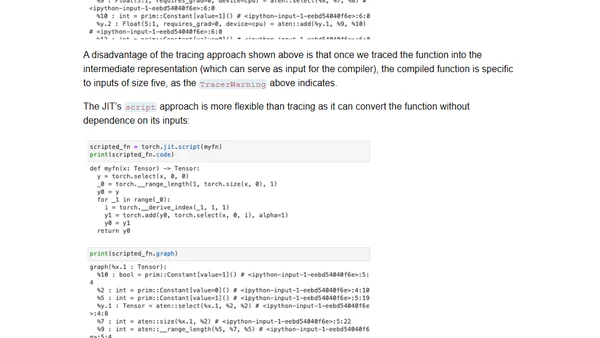

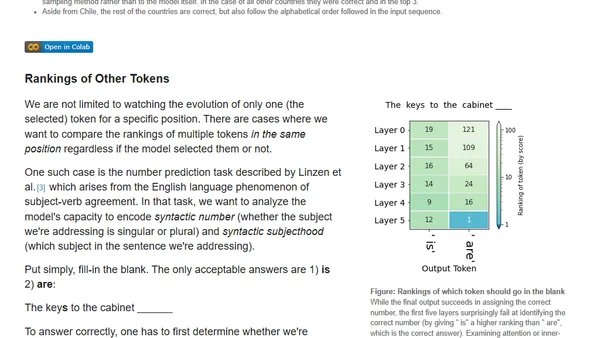

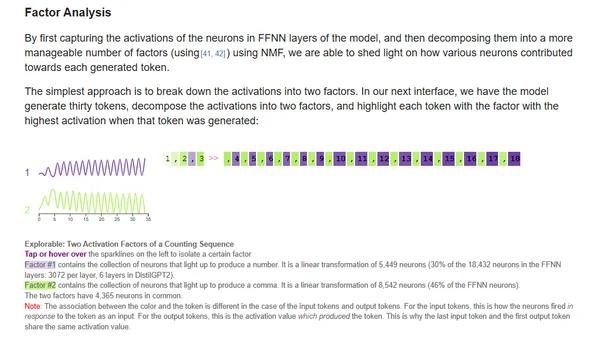

Explores visualizing hidden states in Transformer language models to understand their internal decision-making process during text generation.

Explores interactive methods for interpreting transformer language models, focusing on input saliency and neuron activation analysis.

Explains the Neural Tangent Kernel concept through simple 1D regression examples to illustrate how neural networks evolve during training.