Evaluating Open LLMs with MixEval: The Closest Benchmark to LMSYS Chatbot Arena

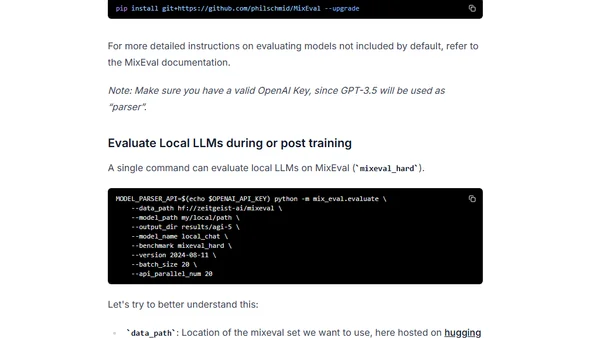

The article discusses MixEval, a benchmark for evaluating open Large Language Models (LLMs) that combines real-world user queries with existing benchmarks. It highlights MixEval's 0.96 ranking correlation with LMSYS Chatbot Arena, its low cost (about $0.6 per run), and features such as dynamic updates and fair grading. It also covers a forked version of MixEval with enhancements for local model evaluation and Hugging Face integration.
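To make the "ranking correlation" figure concrete, here is a minimal sketch of how a rank correlation between two leaderboards can be computed. It assumes a Spearman-style correlation, which the summary does not specify, and the scores below are hypothetical placeholders, not MixEval or Chatbot Arena results. The function names `ranks`, `pearson`, and `spearman` are chosen for illustration only.

```python
def ranks(values):
    """Return 1-based ranks (highest value gets rank 1), averaging tied ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i], reverse=True)
    result = [0.0] * len(values)
    pos = 0
    while pos < len(order):
        end = pos
        # Extend the group while the next value ties with the current one.
        while end + 1 < len(order) and values[order[end + 1]] == values[order[pos]]:
            end += 1
        avg_rank = (pos + end) / 2 + 1
        for k in range(pos, end + 1):
            result[order[k]] = avg_rank
        pos = end + 1
    return result


def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    std_x = sum((a - mean_x) ** 2 for a in x) ** 0.5
    std_y = sum((b - mean_y) ** 2 for b in y) ** 0.5
    return cov / (std_x * std_y)


def spearman(x, y):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    return pearson(ranks(x), ranks(y))


# Hypothetical scores for four models (illustrative only, not real results).
benchmark_scores = [80.0, 75.0, 60.0, 55.0]
arena_ratings = [1250, 1200, 1210, 1100]

print(round(spearman(benchmark_scores, arena_ratings), 2))
```

A value near 1.0 means the two leaderboards order the models almost identically. In this toy data the two middle models swap places, which is why the result falls short of 1.0.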
