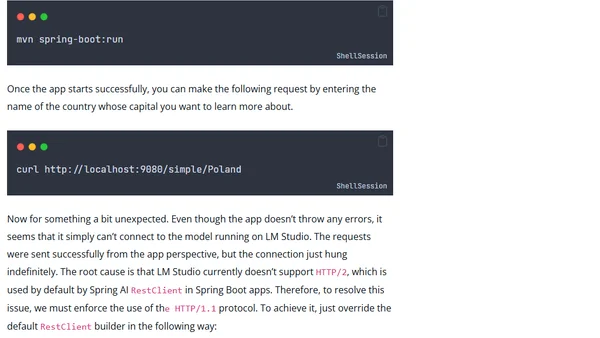

Local AI Models with LM Studio and Spring AI

Guide to running local AI models with LM Studio and integrating them into Java applications using the Spring AI framework.


Explores the mental challenges and best practices for managing multiple AI agent-driven software development projects simultaneously.

A guide to critical, irreversible decisions when setting up Azure Kubernetes Service (AKS) clusters to avoid costly rebuilds.

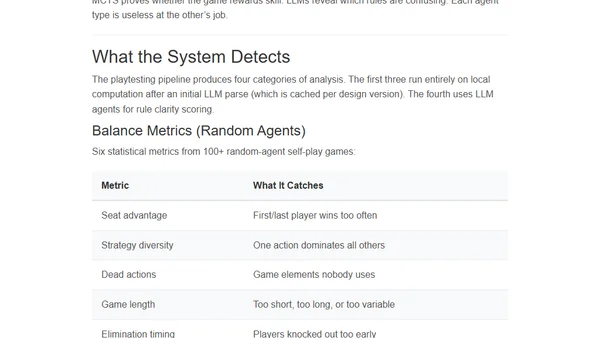

Explores using AI and LLMs to automate board game playtesting, analyzing balance, skill gaps, and rule clarity without human testers.

A daily tech link roundup covering .NET, Windows, web dev, AI, and software engineering news and tutorials.

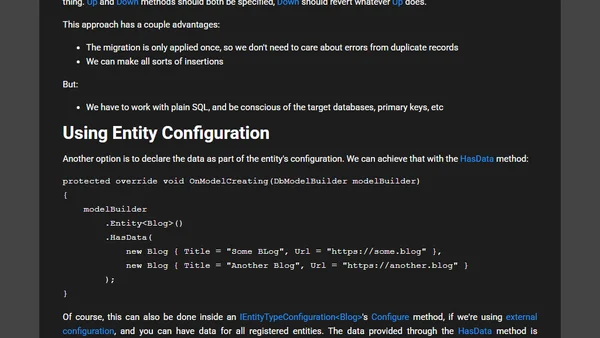

Explores four methods for seeding initial data in EF Core, including explicit insertions, data-only migrations, and entity configuration.

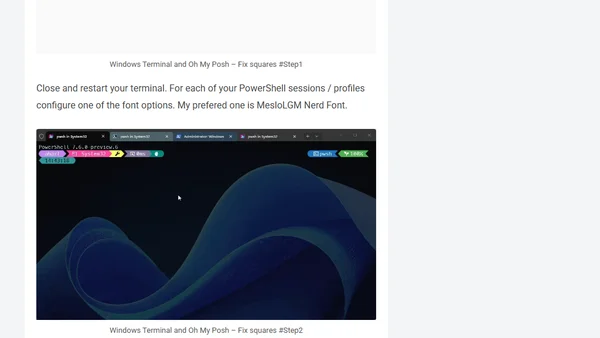

Guide to personalizing Windows Terminal with Oh My Posh for a more functional and informative command-line interface.

Microsoft closes a security loophole in Azure ACS that allowed unauthenticated enumeration of tenant domains, hardening M365 against reconnaissance.

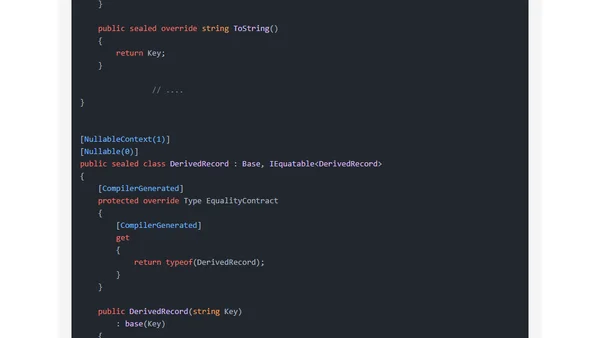

Explores C# record inheritance and ToString behavior, showing how the 'sealed' modifier in C# 10 fixes unexpected output.

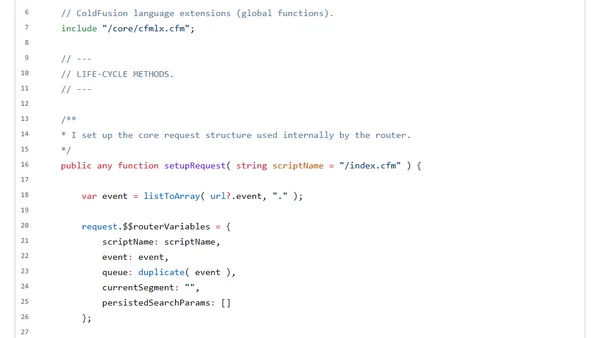

Explores using cached ColdFusion components as scoped proxies for request-specific state, detailing implementation within a DI/IoC context.

Explains data validation options in the Wolverine .NET framework, covering Data Annotations and Fluent Validation integration.

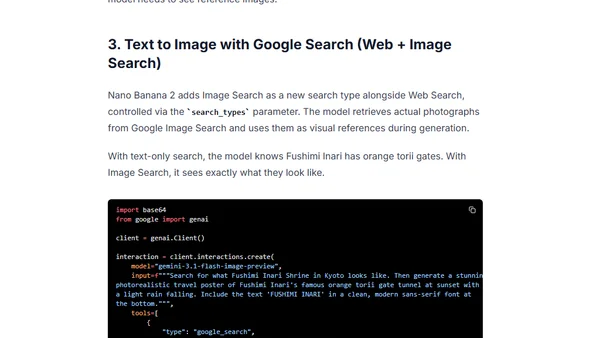

A developer guide for using the Gemini Interactions API with the Nano Banana 2 image generation model to create personalized, search-grounded images.

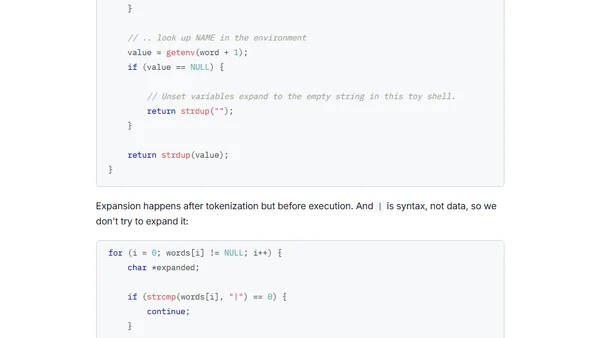

A developer documents the process of building a toy Unix shell from scratch, covering the REPL, command parsing, and execution.

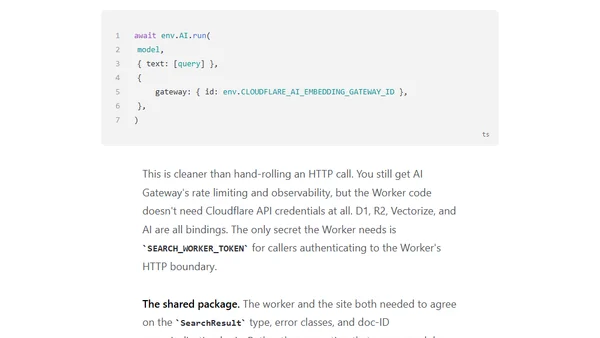

Explores building a hybrid search system combining semantic (meaning-based) and lexical (keyword-based) search for better results on a developer blog.

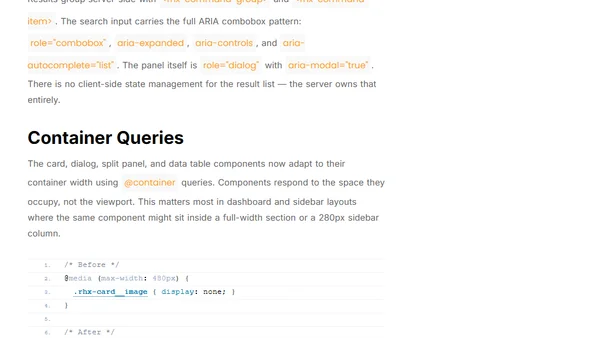

Announcing htmxRazor v1.3.0, a .NET library update featuring a new data table component, accessibility improvements, and modern CSS.

Explores Langford's problem: arranging two copies of each number from 1 to n so that exactly k numbers lie between the two copies of k. Solutions exist only when n ≡ 0 or 3 (mod 4).
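For readers who want to see the condition in action, here is a minimal backtracking sketch. It is not taken from the linked article; the function name `langford` and the largest-number-first search order are assumptions made for illustration.

```python
def langford(n):
    """Return one Langford arrangement of 1..n as a list, or None if none exists."""
    seq = [0] * (2 * n)  # 0 marks an empty slot

    def place(k):
        # All numbers placed: the arrangement is complete.
        if k == 0:
            return True
        # The two copies of k go at positions i and i + k + 1,
        # which leaves exactly k slots between them.
        for i in range(2 * n - k - 1):
            j = i + k + 1
            if seq[i] == 0 and seq[j] == 0:
                seq[i] = seq[j] = k
                if place(k - 1):
                    return True
                seq[i] = seq[j] = 0  # undo and try the next position
        return False

    return seq if place(n) else None


if __name__ == "__main__":
    for n in range(1, 9):
        print(n, langford(n))
```

Running it for small n returns an arrangement only for n = 3, 4, 7 and 8 and `None` otherwise, which matches the n ≡ 0 or 3 (mod 4) condition. Plain backtracking like this is only practical for small n.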

An R function to find palindrome dates across multiple international date formats within a specified range.

Author announces a new blog focused on software architecture, sharing knowledge and practical solutions for building adaptable software.

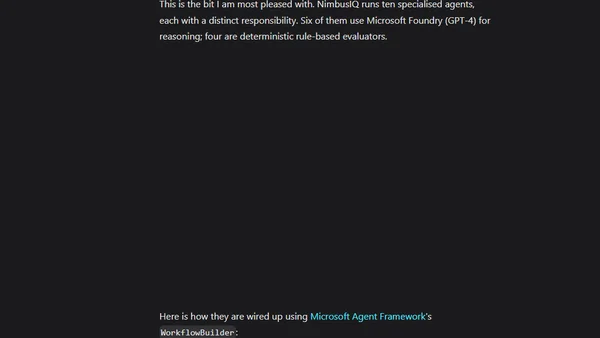

Introducing NimbusIQ, a multi-agent AI platform built on Microsoft Agent Framework to automate Azure drift detection, analysis, and remediation planning.

Explains how to implement SVG favicons that adapt to user light/dark theme preferences, covering browser support and a WebKit bug.