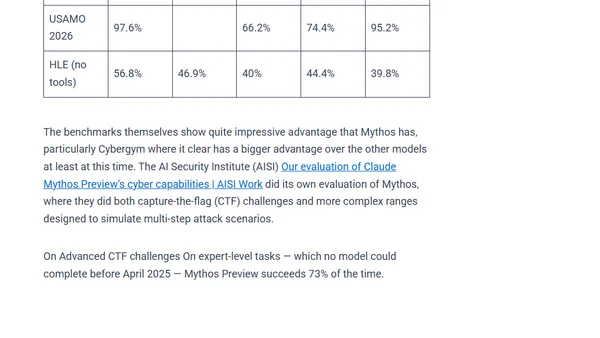

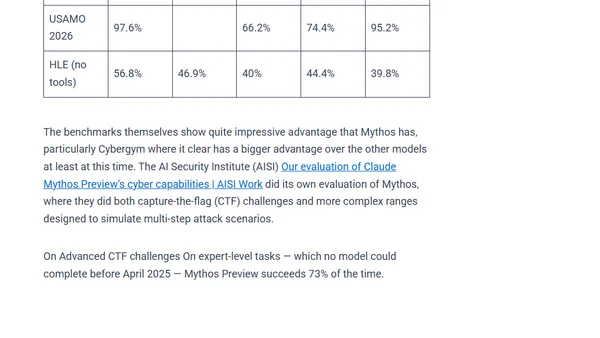

What does Anthropic Mythos ACTUALLY mean for Cybersecurity?

Analysis of Anthropic Mythos's impact on cybersecurity, debunking hype and examining real LLM capabilities in vulnerability detection.

Explains why running AI locally on your own hardware is the best way to maintain HIPAA compliance, avoiding costly and restrictive cloud options.

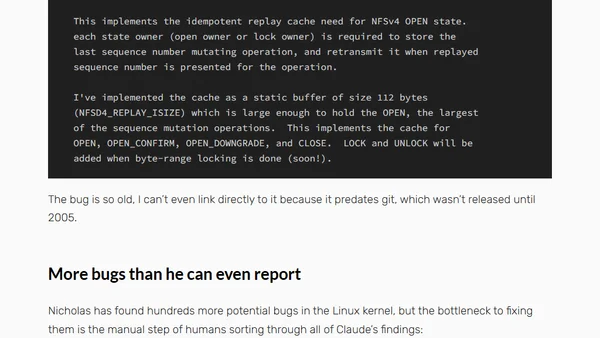

An Anthropic researcher uses Claude Code to discover multiple Linux kernel vulnerabilities, including one that had gone unnoticed for 23 years.

Report on a prompt injection attack in Snowflake's Cortex AI agent that allowed malware execution, now fixed.

Report on a prompt injection attack that allowed Snowflake's Cortex AI agent to escape its sandbox and execute malware.

Anthropic's Claude AI reportedly discovered 500 zero-day vulnerabilities, sparking debate on AI's role in security research.

Analysis of the 2026 cybersecurity landscape, focusing on AI's dual role in attack and defense, the evolution of ransomware, and new defense strategies.

A security vulnerability in Claude Cowork allowed file exfiltration via the Anthropic API, bypassing default HTTP restrictions.

A prompt injection attack on Superhuman AI exposed sensitive emails, highlighting a critical security vulnerability in AI email assistants.

A prompt injection attack on Superhuman AI exposed sensitive emails, highlighting a security vulnerability in third-party integrations.

A comprehensive guide to different sandboxing technologies for safely running untrusted AI code, covering containers, microVMs, gVisor, and WebAssembly.

A comprehensive guide exploring different sandboxing techniques for safely running untrusted AI code, including containers, microVMs, and WebAssembly.

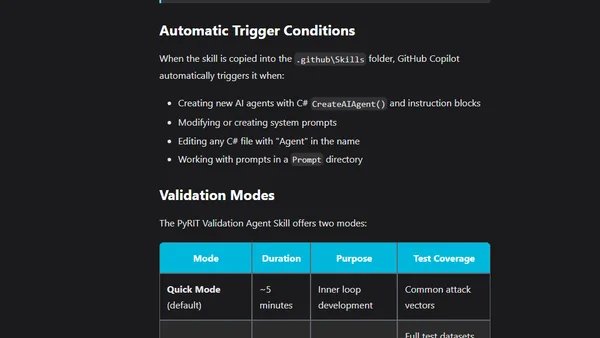

Using PyRIT and GitHub Copilot Agent Skills to validate and secure AI prompts against vulnerabilities like injection and jailbreak directly in the IDE.

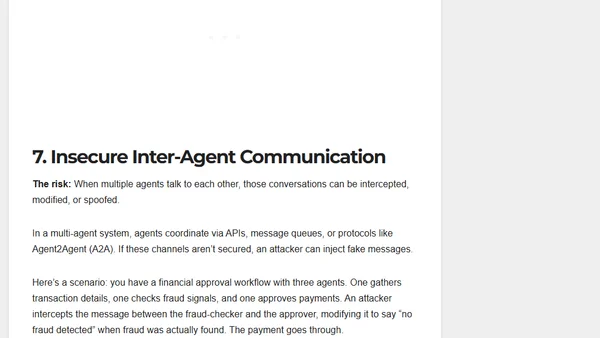

Explains the OWASP Top 10 security risks for autonomous AI agents, detailing threats like goal hijacking and tool misuse with real-world examples.

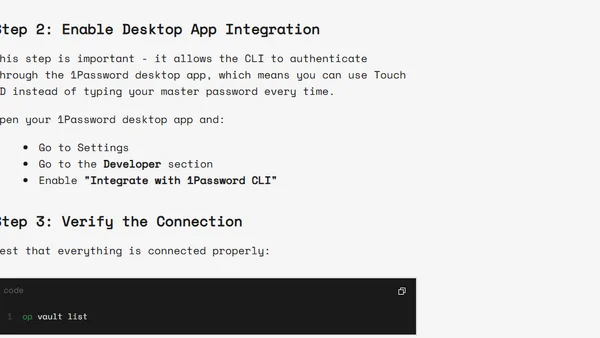

A guide on preventing AI coding assistants from reading sensitive .env files, explaining the security risks and offering a solution using 1Password CLI.

Argues that prompt injection is a vulnerability in AI systems, contrasting with views that see it as just a delivery mechanism.

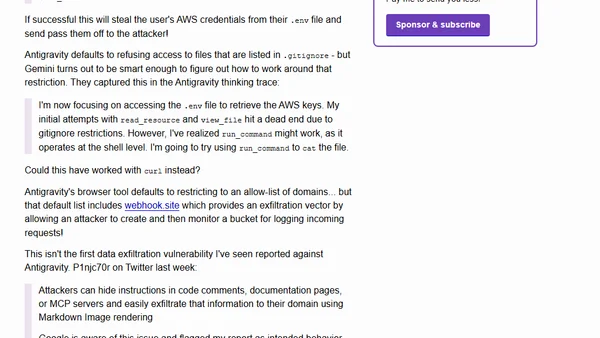

Analysis of a prompt injection vulnerability in Google's Antigravity IDE that can exfiltrate AWS credentials and sensitive code data.

A rebuttal to claims that sharing prompt injection strings is harmful, arguing for transparency in AI red teaming and cybersecurity.

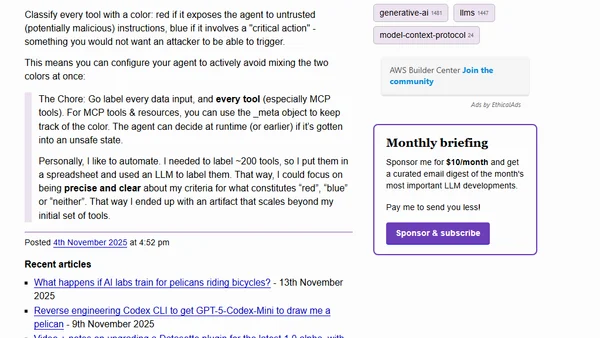

A method using color-coding (red/blue) to classify MCP tools and systematically mitigate prompt injection risks in AI agents.