Deploy T5 11B for inference for less than $500

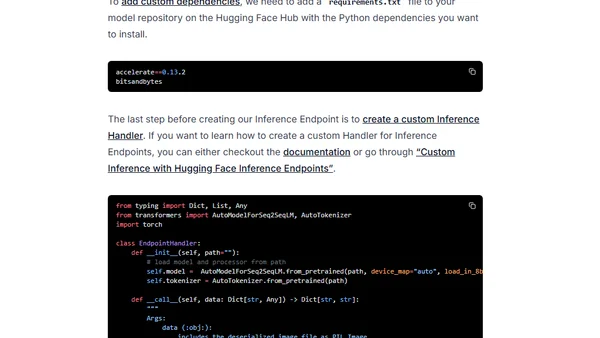

This technical guide details how to deploy the 11-billion-parameter T5 Transformer model for production inference for under $500. It covers preparing a model repository with sharded fp16 weights, writing a custom inference handler, deploying the model on a single NVIDIA T4 GPU with Hugging Face Inference Endpoints, and sending API requests to the deployed endpoint.
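
The last step, sending an API request, can be sketched with the Python standard library alone. This is a minimal illustration and not the guide's own code: the endpoint URL and token are hypothetical placeholders, and the `parameters` field (here `max_length`) is an assumption, since a custom handler decides which keys of the JSON body it actually reads. The sketch only builds the request; sending it is left as a comment.

```python
import json
import urllib.request


def build_request(endpoint_url: str, token: str, text: str,
                  max_length: int = 64) -> urllib.request.Request:
    """Build (but do not send) a POST request for an inference endpoint.

    The body follows the common {"inputs": ..., "parameters": ...} shape.
    Whether "parameters" is honored depends on the deployed handler
    (assumption, not confirmed by the guide summary).
    """
    payload = {"inputs": text, "parameters": {"max_length": max_length}}
    body = json.dumps(payload).encode("utf-8")
    return urllib.request.Request(
        endpoint_url,
        data=body,
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    # Hypothetical URL and token; replace with the values of a real endpoint.
    req = build_request(
        "https://example.endpoints.huggingface.cloud",
        "hf_xxx",
        "translate English to German: Hello",
    )
    print(req.get_method(), req.full_url)
    print(req.data.decode("utf-8"))
    # To send it: urllib.request.urlopen(req).read()
```

Keeping request construction separate from sending makes the payload easy to inspect before any paid endpoint is called.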
