Generative transformer from first principles in Julia

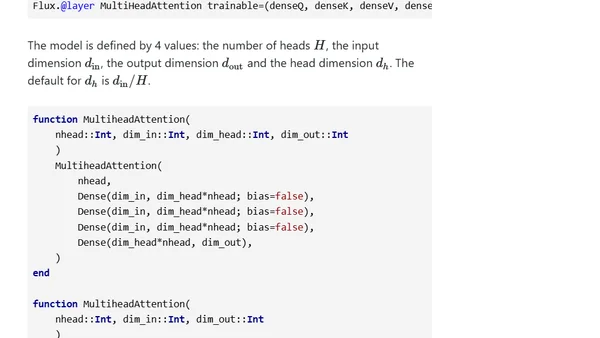

This technical article details the implementation of a Generative Pre-trained Transformer (GPT) from first principles using the Julia programming language. Inspired by Andrej Karpathy's work, it follows the original GPT-1 paper architecture to train a model on Shakespeare's plays for text generation. The post includes code structure, parameter counts, and links to a full GitHub repository, serving as an educational guide for understanding transformer internals.
