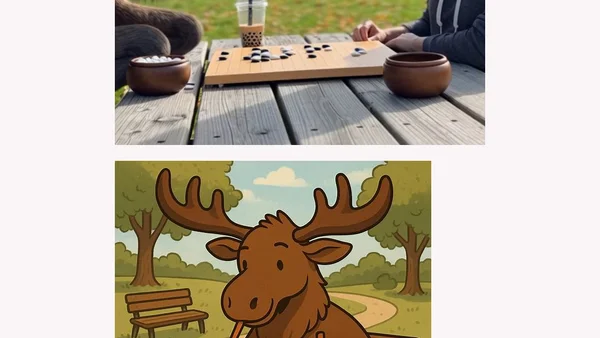

A moose playing Go in a park while drinking boba

An analysis of AI video generation using a specific, complex prompt to test the capabilities and limitations of models like Sora 2.

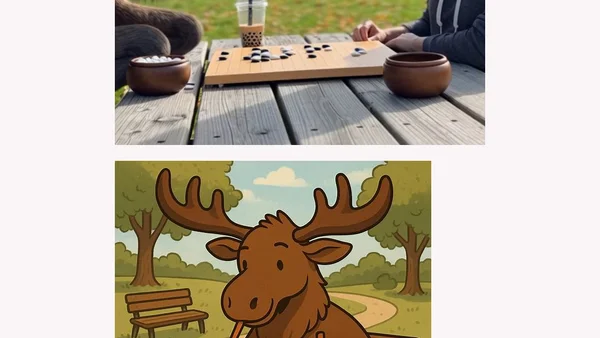

Analyzes the security risks of Model Context Protocol (MCP) servers, framing them as prompts that instruct AIs to execute third-party code.

Explores the shift from traditional coding to AI prompting in software development, discussing its impact on developer skills and satisfaction.

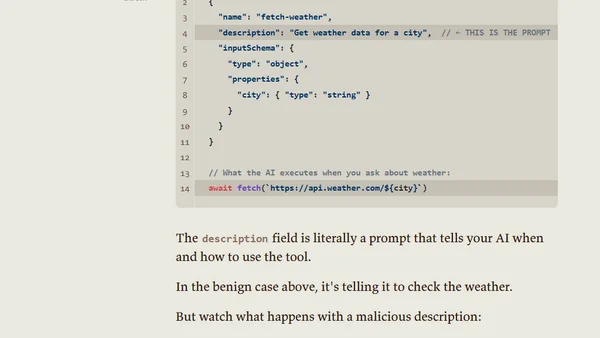

Explores the common practice of developers assigning personas to Large Language Models (LLMs) to better understand their quirks and behaviors.

Learn how to use personal instructions in GitHub Copilot Chat to customize its responses, tone, and code output for a better developer experience.

A hands-on guide for JavaScript developers to learn Generative AI and LLMs through interactive lessons, projects, and a companion app.

Argues that clear thinking and purpose, not prompt or context engineering, are the key skills for effective AI interaction, writing, and coding.

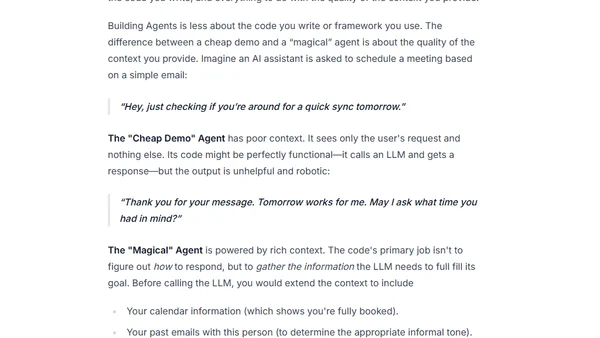

Explains why Context Engineering, not just prompt crafting, is the key skill for building effective AI agents and systems.

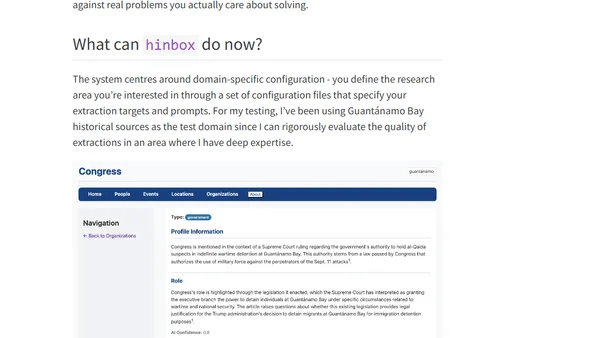

Introducing hinbox, an AI-powered tool for extracting and organizing entities from historical documents to build structured research databases.

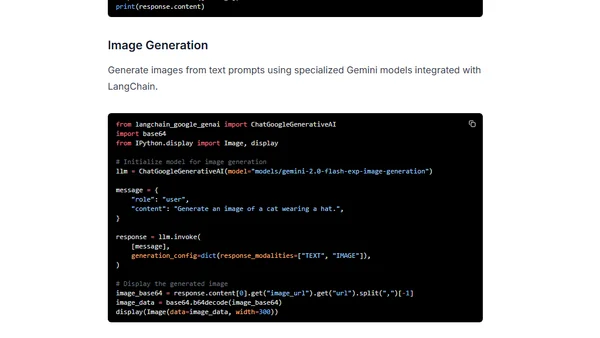

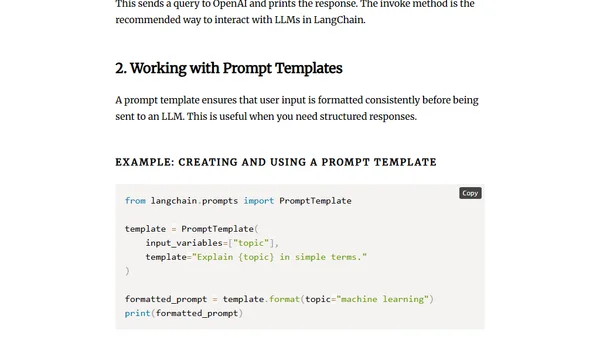

A technical cheatsheet for using Google's Gemini AI models with the LangChain framework, covering setup, chat models, prompt templates, and image inputs.

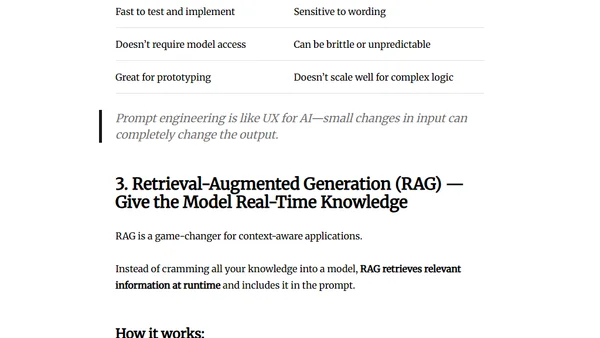

Explores three key methods to enhance LLM performance: fine-tuning, prompt engineering, and RAG, detailing their use cases and trade-offs.

A free, interactive course on GitHub teaching Generative AI concepts using JavaScript through a creative time-travel adventure narrative.

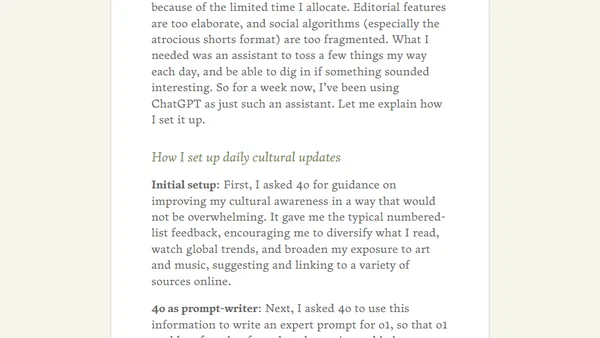

A developer shares how to use the GPT-4o and o1 models in ChatGPT to create a personalized daily AI assistant for cultural news and learning.

A guide to building AI applications using the LangChain framework, covering core concepts, installation, and practical examples.

A practical guide to writing effective AI prompts, debunking the complexity of prompt engineering and offering simple tips for better results.

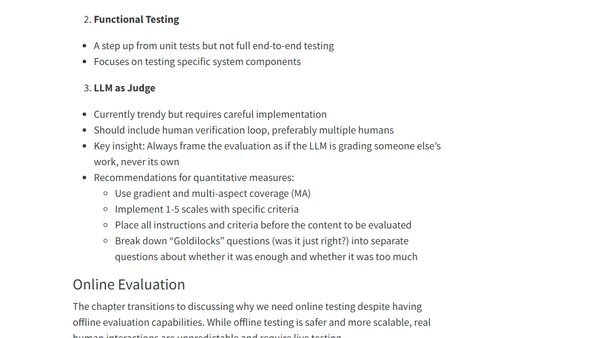

Final notes from a book on LLM prompt engineering, covering evaluation frameworks, offline/online testing, and LLM-as-judge techniques.

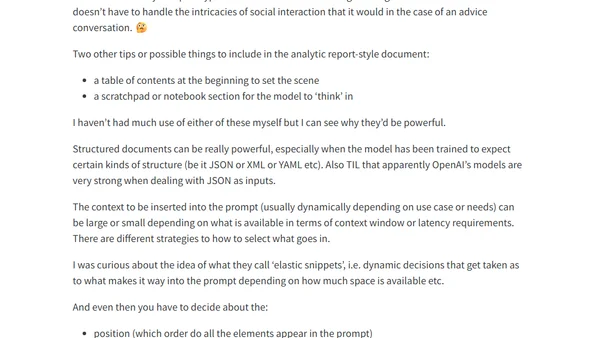

A summary of Chapter 6 from 'Prompt Engineering for LLMs', covering prompt structure, document templates, and strategies for effective context inclusion.

A Google researcher's curated review of key AI research papers from 2024, covering LLMs, new architectures, agents, and security.

Explores a method using a 'Judging AI' (like o1-preview) to evaluate the performance of other AI models on tasks, relative to human capability.

Explores key prompt engineering techniques like zero-shot, few-shot, and chain-of-thought to improve generative AI outputs.