LLM Powered Autonomous Agents

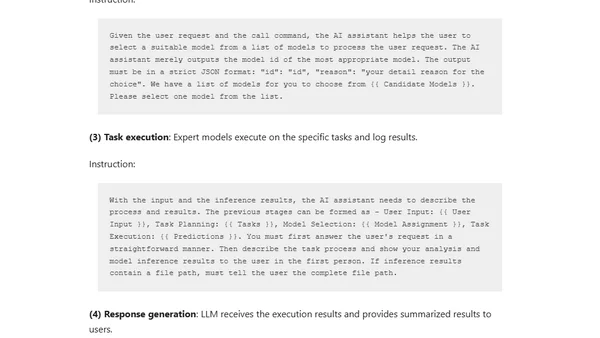

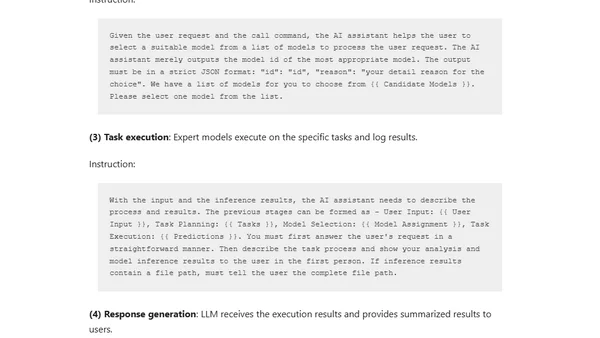

An overview of LLM-powered autonomous agents, covering their core components like planning, memory, and tool use for complex problem-solving.

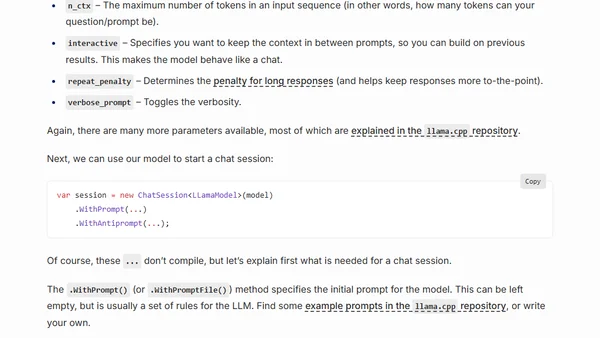

A guide to running open-source Large Language Models (LLMs) like LLaMA locally on your CPU using C# and the LLamaSharp library.

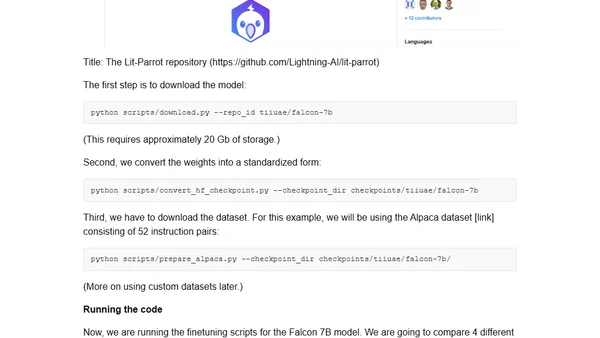

A guide to efficiently fine-tuning Falcon LLMs using parameter-efficient methods like LoRA and adapters to reduce compute costs.

Explores the potential and implications of using AI to automate mathematical theorem proving, framing it as a 'tame' problem solvable by machines.

An AI-generated, alliterative rewrite of Genesis 1 where every word starts with the letter 'A', created using GPT-4.

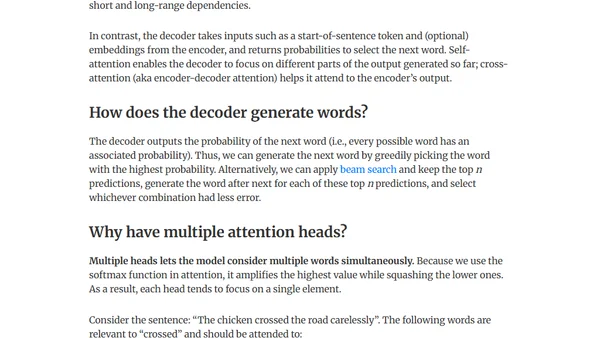

Explains the intuition behind the Attention mechanism and Transformer architecture, focusing on solving issues in machine translation and language modeling.

Explores user interfaces for LLMs that minimize text chat, using clicks and user context for more intuitive interactions.

Distinguishes between interactive and transactional prompting, arguing that prompt engineering is most valuable for transactional, objective tasks with LLMs.

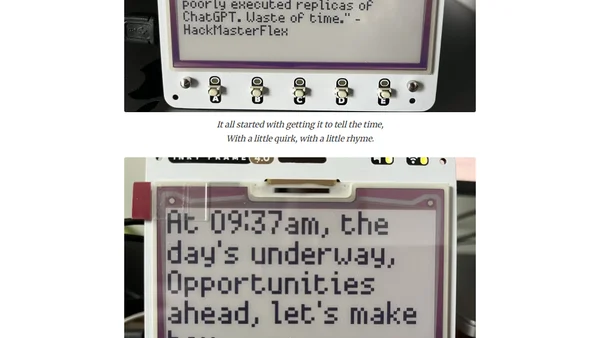

A developer explores running LLMs on a Raspberry Pi Pico with memory constraints, creating a witty e-ink display that generates content from news feeds.

A software engineer compares the hype around cryptocurrencies and LLMs, arguing that LLMs provide tangible value while crypto is plagued by scams.

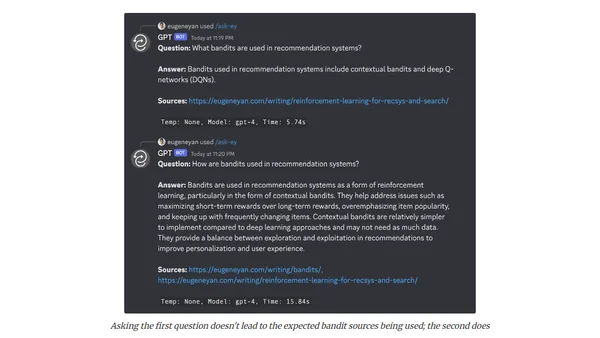

A developer shares experiments building LLM-powered tools for research, reflection, and planning, including URL summarizers, SQL agents, and advisory boards.

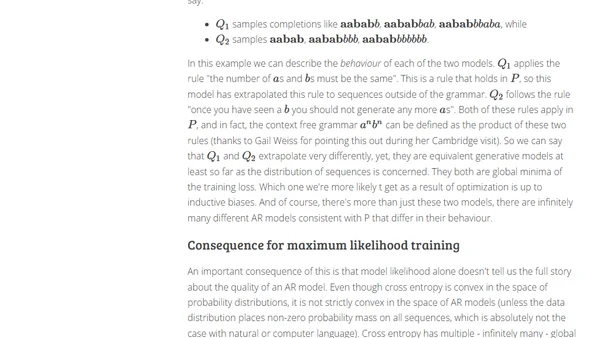

Explores autoregressive models, their relationship to joint distributions, and how they handle out-of-distribution prompts, with insights relevant to LLMs.

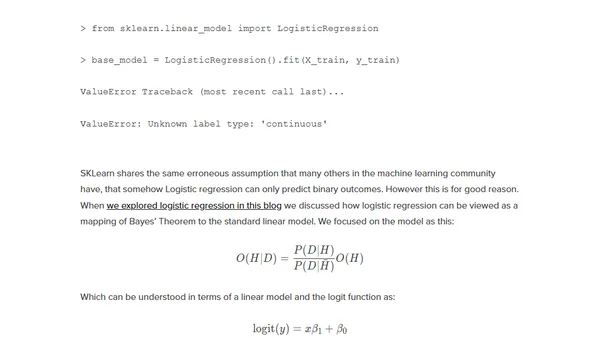

Explores using GPT-3 text embeddings and a simple classifier to predict the winner of a headline A/B test, potentially replacing traditional testing.

Explores the Reflexion technique where LLMs like GPT-4 can critique and self-correct their own outputs, a potential new tool in prompt engineering.

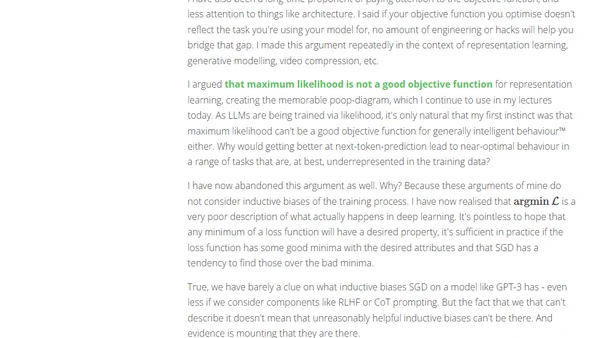

A reflection on past skepticism of deep learning and why similar dismissal of Large Language Models (LLMs) might be a mistake.

An experiment comparing how different large language models (GPT-4, Claude, Cohere) write a biography, analyzing their accuracy and training data.

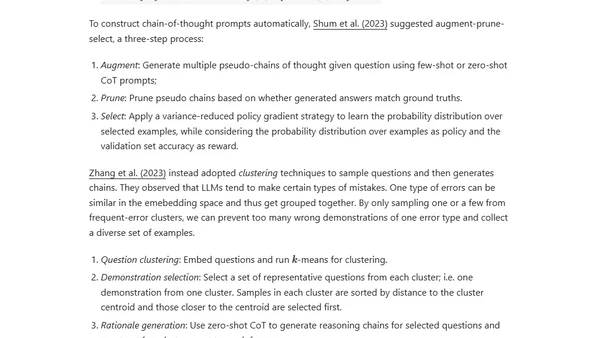

An overview of prompt engineering techniques for large language models, including zero-shot and few-shot learning methods.

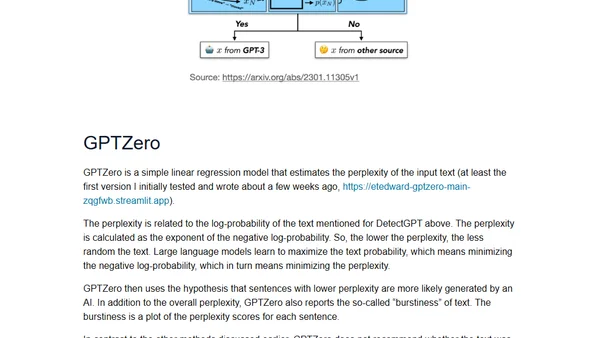

An overview of four different methods for detecting AI-generated text, including OpenAI's AI Classifier, DetectGPT, GPTZero, and watermarking.