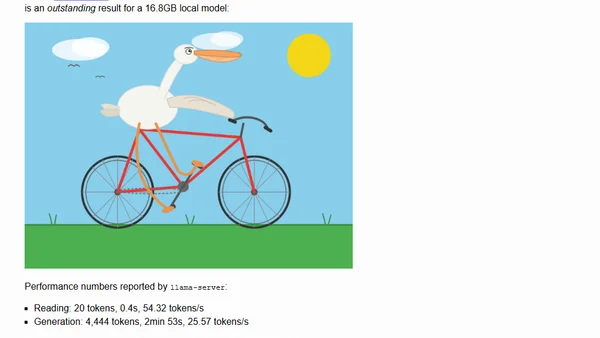

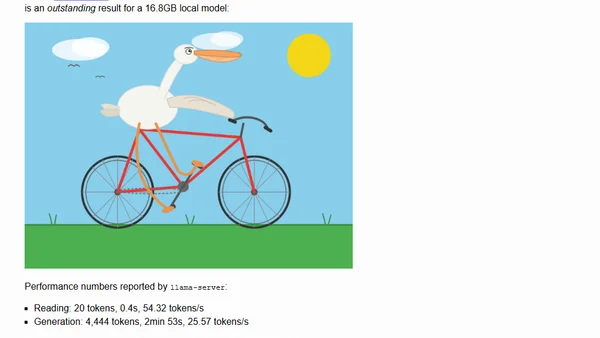

Qwen3.6-27B: Flagship-Level Coding in a 27B Dense Model

Qwen3.6-27B is a new 27B dense model that delivers flagship-level coding performance and surpasses larger models; here it is tested locally with GGUF.

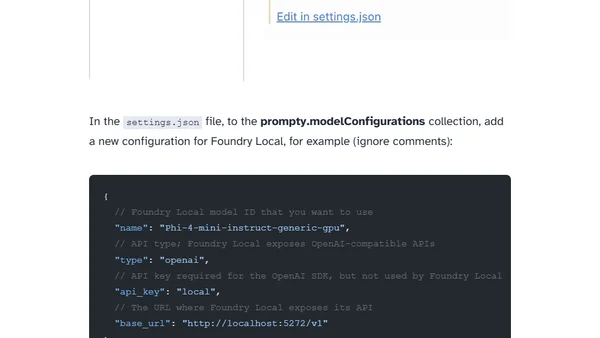

A guide to integrating Prompty with Microsoft's Foundry Local to manage AI prompts and run models locally during development.

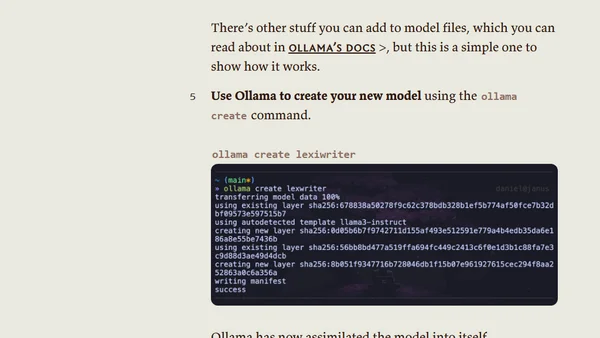

A tutorial on integrating Hugging Face's vast model library with Ollama's easy-to-use local AI platform for running custom models.

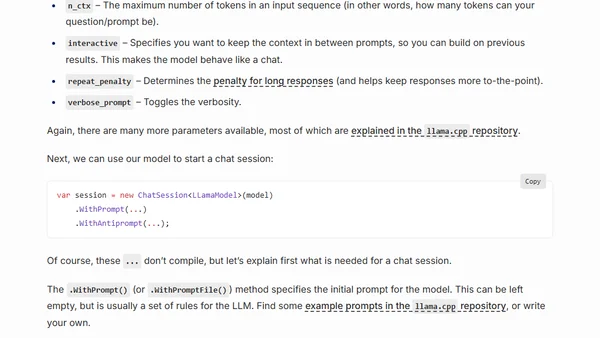

A guide to running open-source Large Language Models (LLMs) like LLaMA locally on your CPU using C# and the LLamaSharp library.