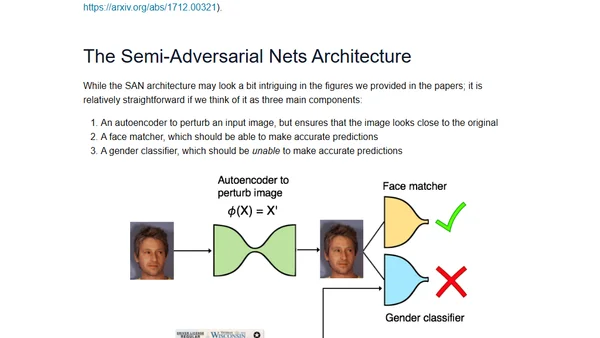

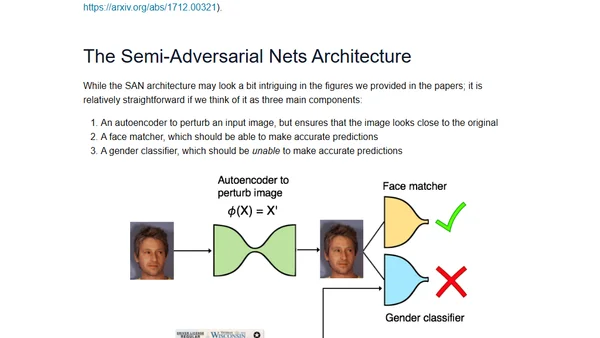

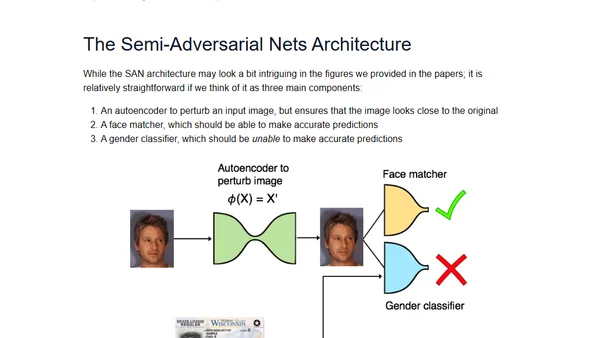

Generating Gender-Neutral Face Images with Semi-Adversarial Neural Networks to Enhance Privacy

Research on using semi-adversarial neural networks to generate gender-neutral face images, enhancing privacy while preserving biometric utility.

Explores using semi-adversarial neural networks to generate gender-neutral face images, enhancing privacy by hiding gender while preserving biometric utility.

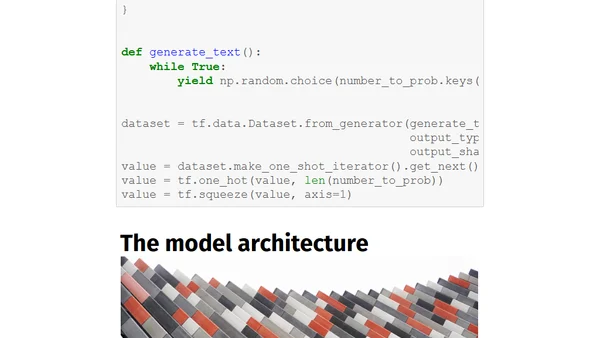

Explains the Gumbel-Softmax trick, a method for training neural networks that need to sample from discrete distributions, enabling gradient flow.

Explains the attention mechanism in deep learning, its motivation from human perception, and its role in improving seq2seq models and in architectures like the Transformer.

Highlights from a deep learning conference covering optimization algorithms' impact on generalization and human-in-the-loop efficiency.

A practical guide to implementing a hyperparameter tuning script for machine learning models, based on real-world experience from Taboola's engineering team.

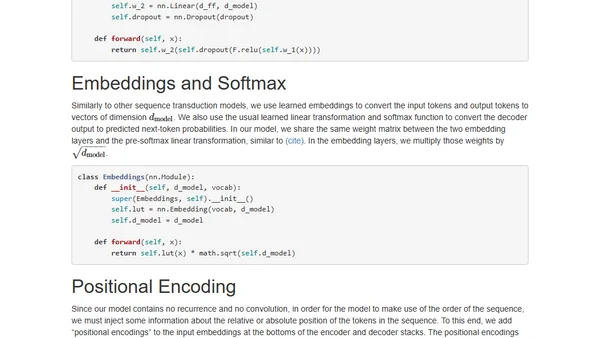

An annotated, line-by-line implementation of the Transformer architecture from 'Attention Is All You Need' in PyTorch.

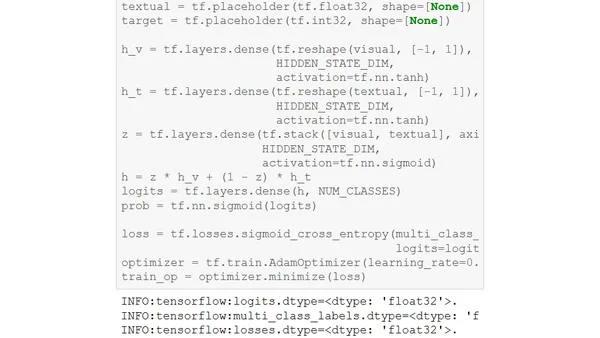

Explains the Gated Multimodal Unit (GMU), a deep learning architecture for intelligently fusing data from different sources like images and text.

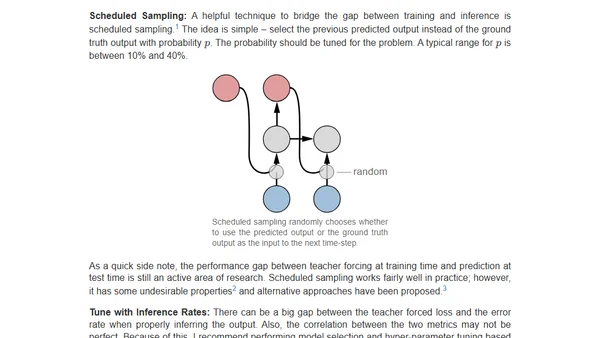

Practical tips for training sequence-to-sequence models with attention, focusing on debugging and ensuring the model learns to condition on input.

A tutorial on implementing a neural network in JavaScript using Google's deeplearn.js library to improve web accessibility by choosing font colors.

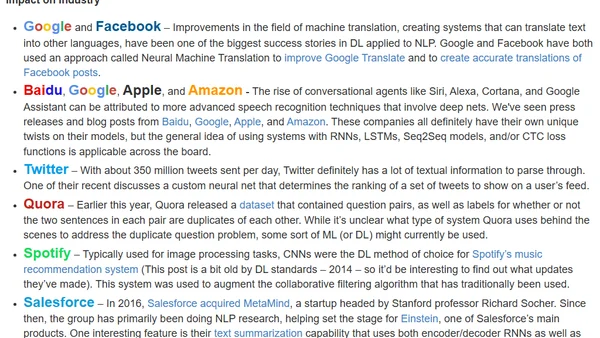

A retrospective on the transformative impact of deep learning over the past five years, covering its rise, key applications, and future potential.

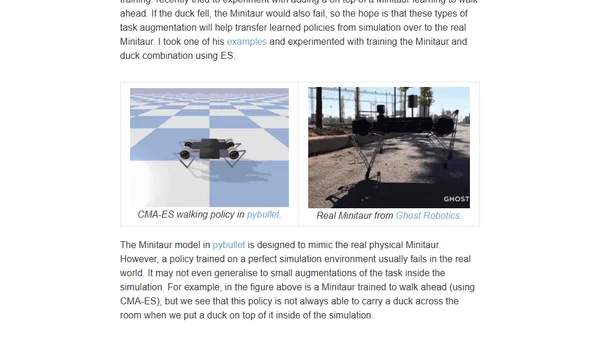

Explores applying Evolution Strategies (ES) to reinforcement learning problems for finding stable and robust neural network policies.

An overview of Machine Learning applications in Remote Sensing, covering key algorithms and the typical workflow for data analysis.

A visual guide explaining Evolution Strategies (ES) as a gradient-free optimization alternative to reinforcement learning for training neural networks.

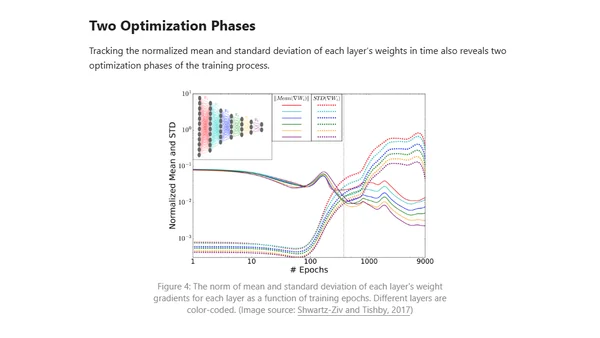

Explores applying information theory, specifically the Information Bottleneck method, to analyze training phases and learning bounds in deep neural networks.

A comparison of PyTorch and TensorFlow deep learning frameworks, focusing on programmability, flexibility, and ease of use for different project scales.

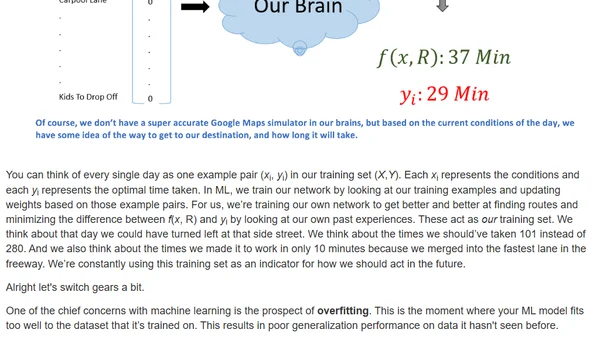

Explores how machine learning concepts like neural network training and optimization mirror daily life challenges and decision-making processes.

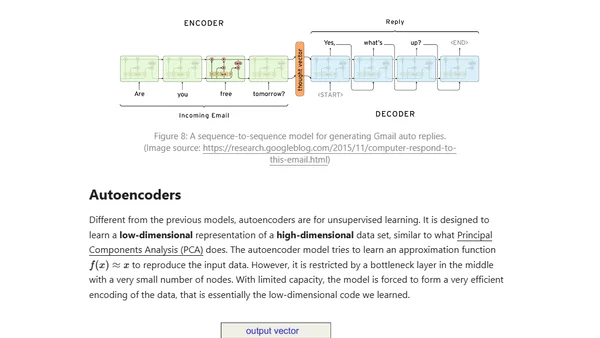

A developer explores using deep learning and sequence-to-sequence models to train a chatbot on personal social media data to mimic their conversational style.

An introduction to deep learning, explaining its rise, key concepts like CNNs, and why it's powerful now due to data and computing advances.

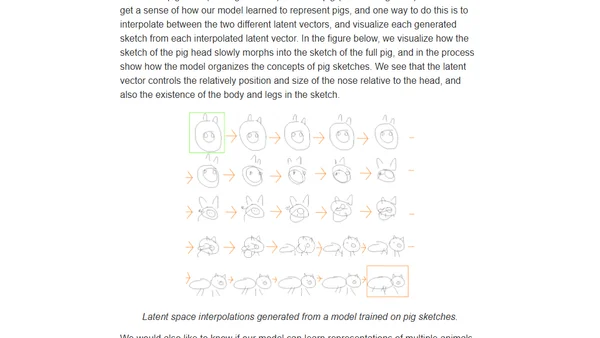

Explores a neural network model, sketch-rnn, that generates vector drawings by learning from human sketch sequences, mimicking abstract visual concepts.