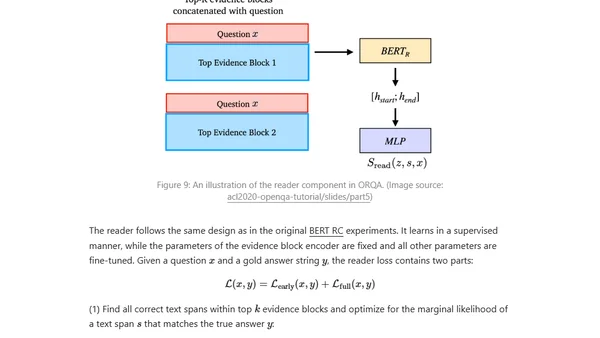

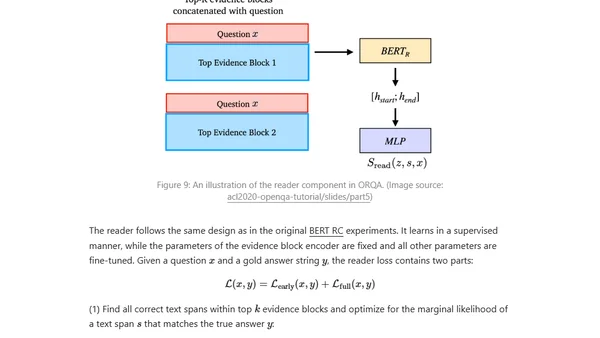

How to Build an Open-Domain Question Answering System?

A technical overview of approaches for building open-domain question answering systems using pretrained language models and neural networks.

Explores whether deep learning creates a new kind of program, using the philosophy of operationalism to compare it with traditional programming.

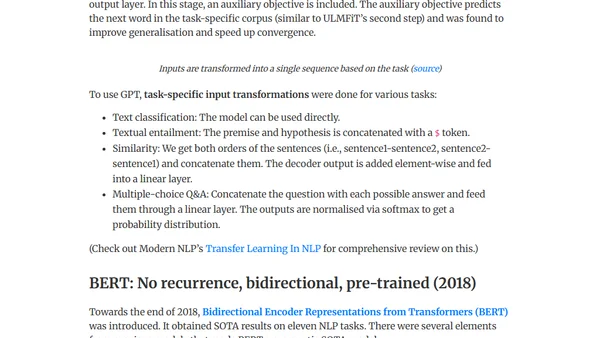

A chronological survey of key NLP models and techniques for supervised learning, from early RNNs to modern transformers like BERT and T5.

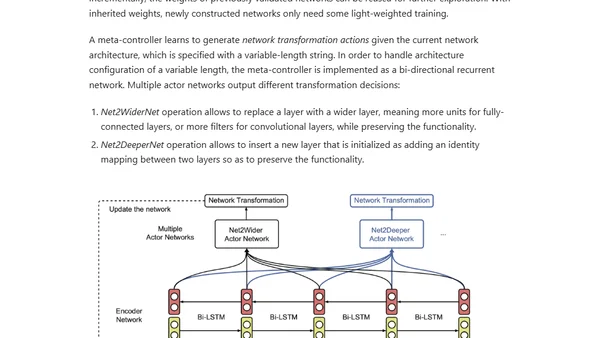

An overview of Neural Architecture Search (NAS), covering its core components: search space, algorithms, and evaluation strategies for automating AI model design.

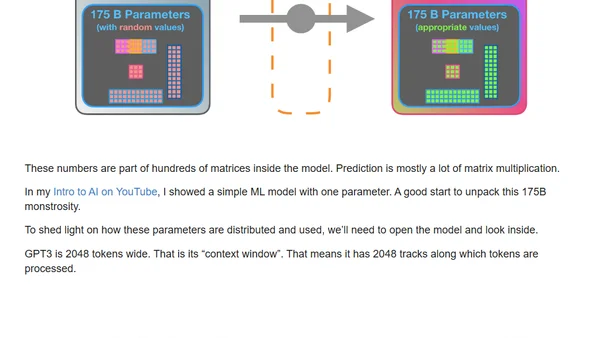

A visual guide explaining how GPT-3 is trained and how it generates text, breaking down its transformer architecture and massive scale.

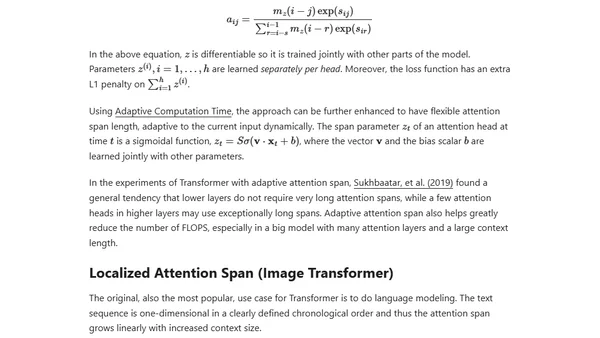

An updated overview of the Transformer model family, covering improvements for longer attention spans, efficiency, and new architectures since 2020.

A personal blog about machine learning, data annotation projects, and professional experiences in deep learning and AI product development.

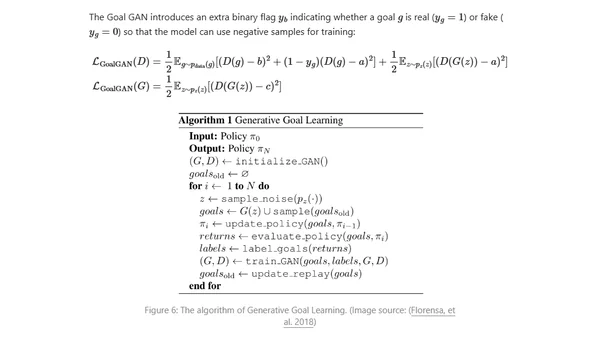

Explores curriculum learning strategies that train reinforcement learning models more efficiently by progressing from simple to complex tasks.

A review of Janelle Shane's AI humor book, discussing neural network limitations and the real-world impact of class imbalance in machine learning.

Explores the visual similarities between images generated by neural networks and what people see in dreams or under psychedelics.

A blog post exploring the parallels and differences between human cognition and machine learning, including biases and inspirations.

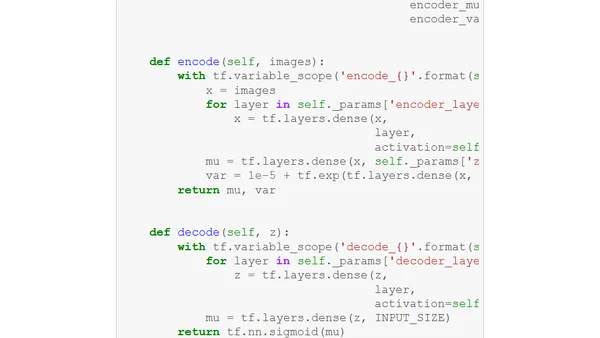

Explores an unsupervised approach combining Mixture of Experts (MoE) with Variational Autoencoders (VAE) for conditional data generation without labels.

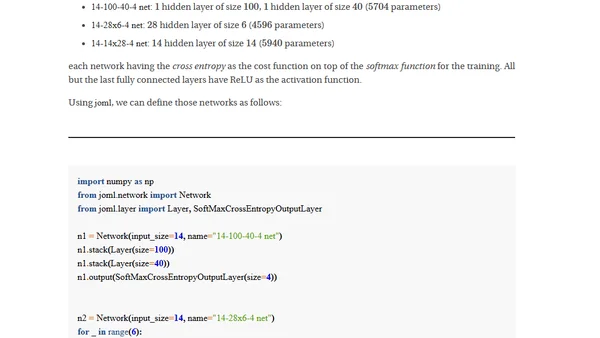

A deep dive into designing and implementing a Multilayer Perceptron from scratch, exploring the core concepts of neural network architecture and training.

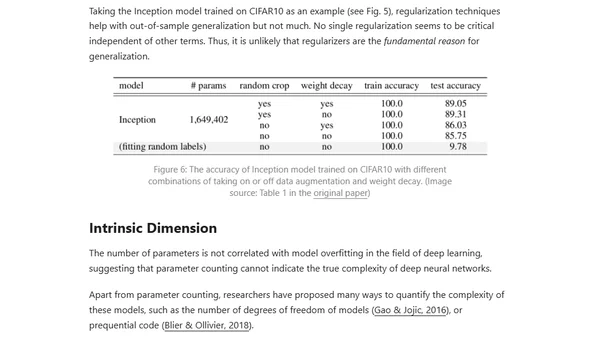

Explores the paradox of why deep neural networks generalize well despite having many parameters, discussing theories like Occam's Razor and the Lottery Ticket Hypothesis.

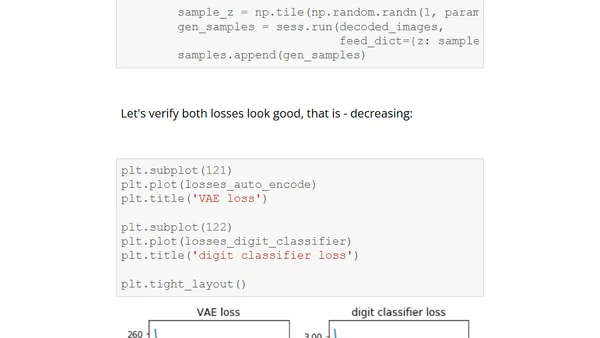

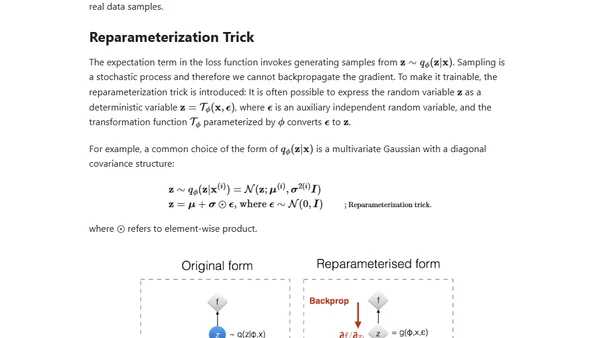

A detailed technical tutorial on implementing a Variational Autoencoder (VAE) with TensorFlow, including code and a variant conditioned on the digit class.

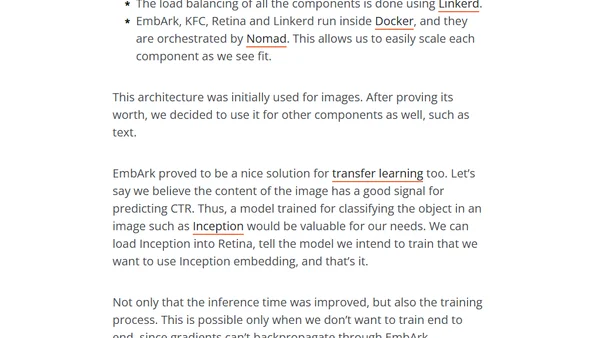

A technical case study on optimizing a slow multi-modal ML model for production using caching, async processing, and a microservices architecture.

An overview of tools and techniques for creating clear and insightful diagrams to visualize complex neural network architectures.

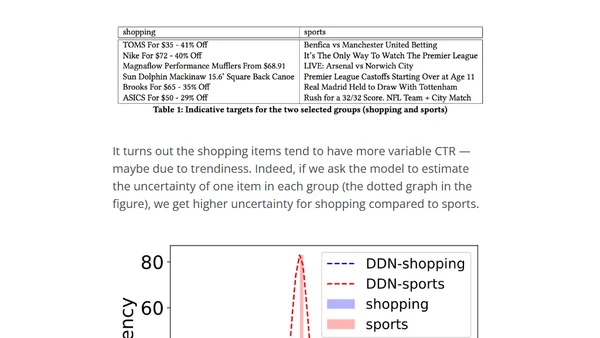

Explains how Taboola built a unified neural network model to predict click-through rate (CTR) and estimate prediction uncertainty for recommender systems.

Explores the evolution from basic Autoencoders to Beta-VAE, covering their architecture, mathematical notation, and applications in dimensionality reduction.

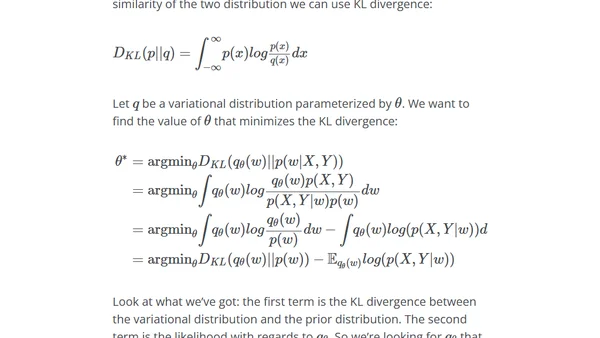

Explores Bayesian methods for quantifying uncertainty in deep neural networks, moving beyond single-point weight estimates.