Inference-Time Compute Scaling Methods to Improve Reasoning Models

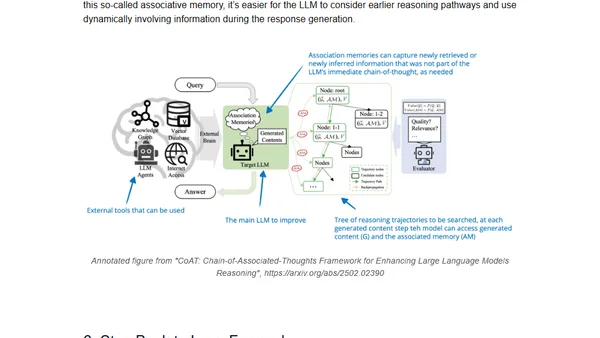

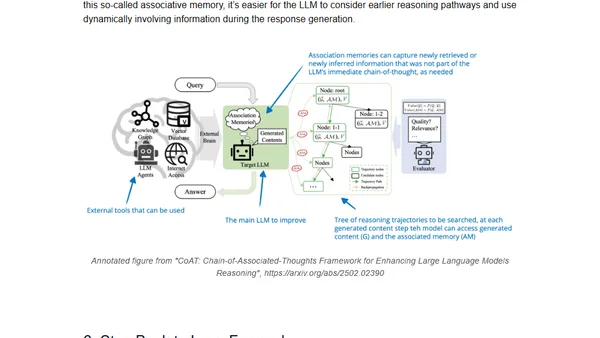

Explores recent research on improving LLM reasoning through inference-time compute scaling methods, comparing various techniques and their impact.


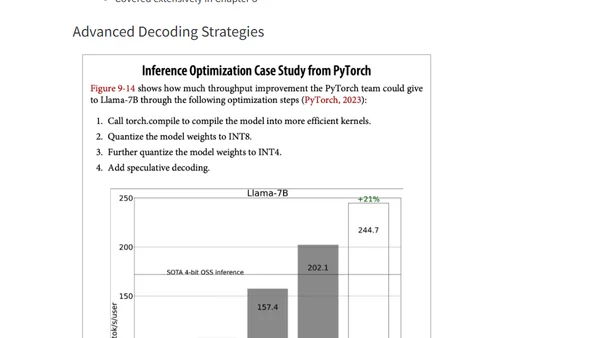

A summary of key concepts from Chip Huyen's book for optimizing AI inference performance, covering bottlenecks, metrics, and deployment patterns.

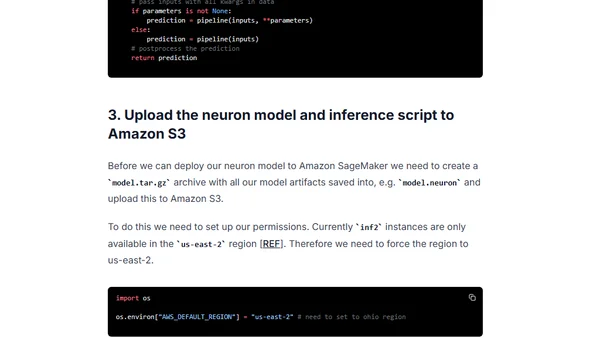

A tutorial on optimizing and deploying a BERT model for low-latency inference using AWS Inferentia2 accelerators and Amazon SageMaker.

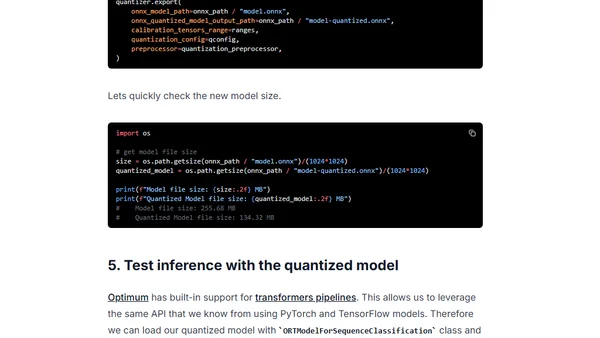

Learn to accelerate Vision Transformer (ViT) models using quantization with Hugging Face Optimum and ONNX Runtime for improved latency.

Learn how to use Hugging Face Optimum and ONNX Runtime to apply static quantization to a DistilBERT model, achieving roughly a 3x latency improvement.

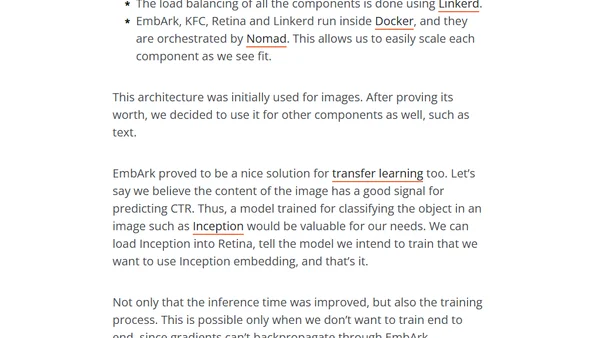

A technical case study on optimizing a slow multi-modal ML model for production using caching, async processing, and a microservices architecture.