KubeCon EU Amsterdam 2026 - My Guide to Managing the Chaos

A guide to navigating KubeCon EU 2026, offering tips on managing schedules, attending events, and avoiding burnout at the major cloud-native conference.

A daily tech reading list covering AI agents, MCP, software development, data security, and industry trends.

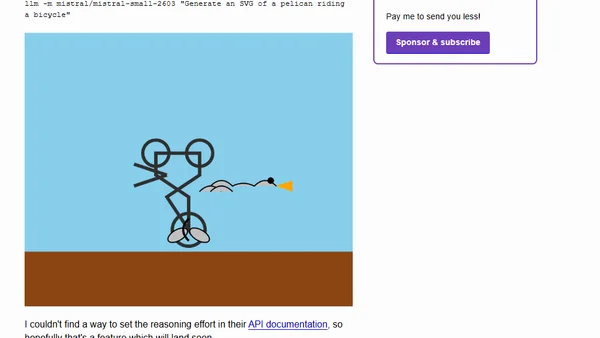

Mistral AI releases Mistral Small 4, a new 119B-parameter open model combining reasoning, multimodal, and coding capabilities.

OpenAI Codex now supports subagents and custom agents for AI-assisted coding, similar to features in Claude Code and other platforms.

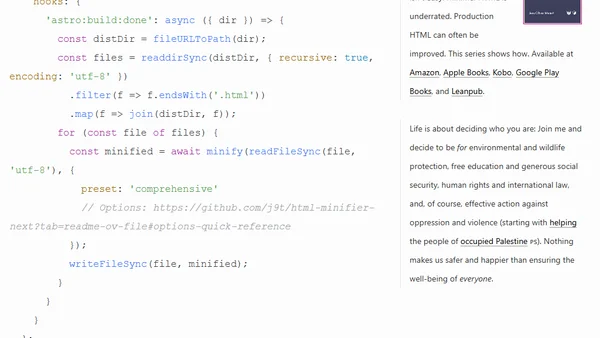

A tutorial on setting up HTML Minifier Next in Astro projects for more aggressive HTML minification and smaller site output.

Explains how to use natural keys alongside surrogate keys in Marten & Wolverine event streams for .NET applications.

An experiment shows that using MCP tools and skills with custom AI agents can drastically reduce LLM token consumption, with a focus on Google Cloud's Agent Development Kit.

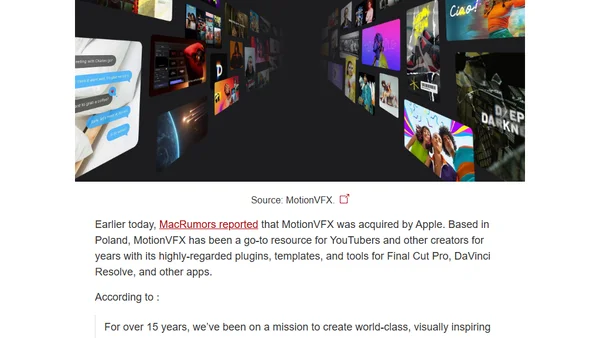

Explains how the MacBook Neo's software camera indicator runs in a secure chip enclave, preventing unauthorized access.

Explains how the MacBook Neo's camera indicator light runs in a secure chip enclave, preventing kernel-level exploits from disabling it.

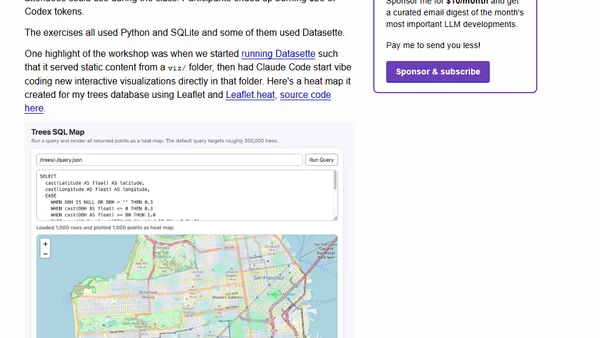

A workshop handout on using AI coding agents like Claude Code and OpenAI Codex for data analysis, visualization, and cleaning tasks.

Apple acquires MotionVFX, a leading creator of plugins and tools for video editing software like Final Cut Pro.

Explains why the MacBook Neo's on-screen camera indicator is as secure as a hardware light, due to Apple's secure enclave architecture.

Announcing a talk on Microsoft Sovereign Private Cloud with Azure Local, covering data sovereignty, compliance, and hybrid cloud for regulated industries.

Explores the balance between model simplicity and precision in system identification for control engineering and machine learning.

Explores how AI is shifting software engineering from creation to supervisory work, introducing the 'middle loop' concept.

Apple unexpectedly announces the AirPods Max 2, featuring the H2 chip, improved active noise cancellation (ANC), and new intelligent audio features.

A retrospective look at Byte Magazine's March 1993 issue, analyzing early tech predictions on email, batteries, CPUs, and AI.