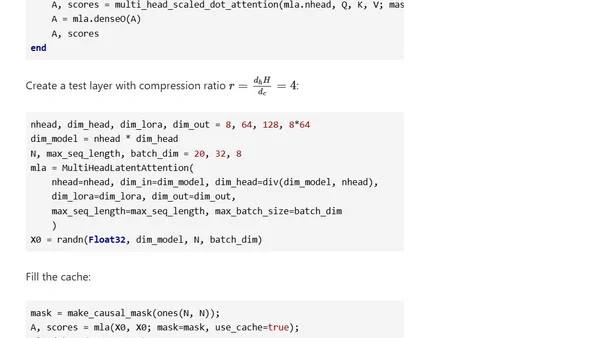

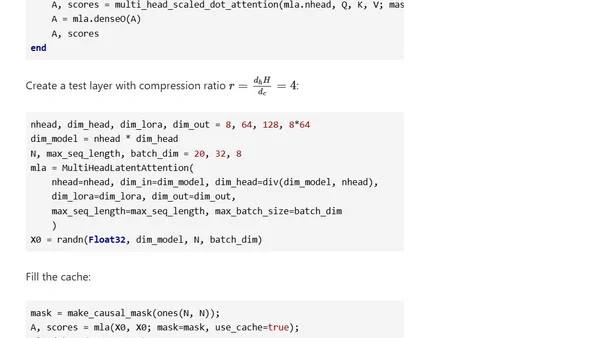

DeepSeek’s Multi-Head Latent Attention

A technical deep dive into DeepSeek's Multi-Head Latent Attention mechanism, covering its mathematics and implementation in Julia.

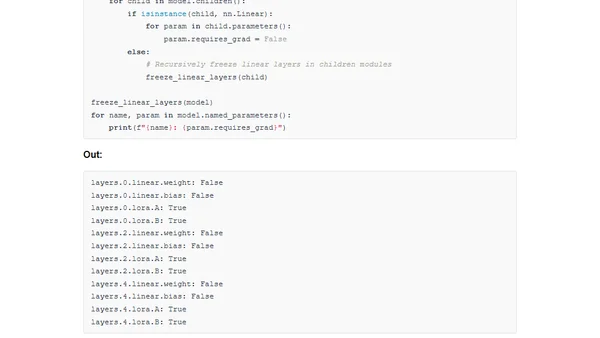

A guide to implementing LoRA and DoRA, a new low-rank adaptation method for efficient model finetuning, from scratch in PyTorch.

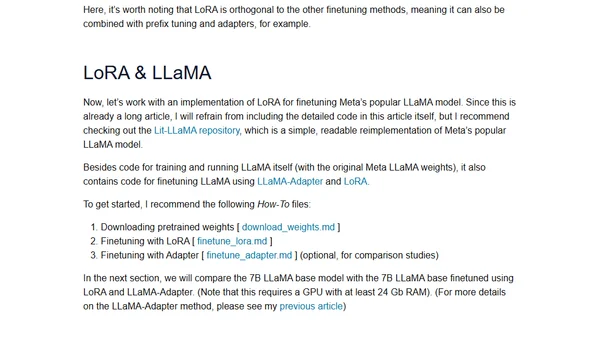

An explanation of Low-Rank Adaptation (LoRA), a parameter-efficient method for finetuning large language models at reduced computational cost.