Optimizing Gemma 4 and Claude CLI for MacBook Pro M2, M3, M4, M5 Pro or Max

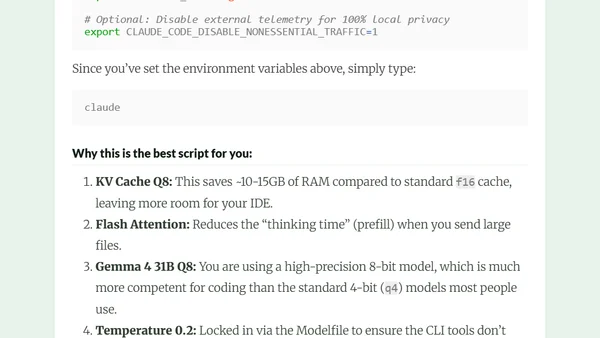

Guide to optimizing Gemma 4 and Claude CLI on MacBook Pro M2-M5 with Flash Attention and KV cache quantization for local AI coding.

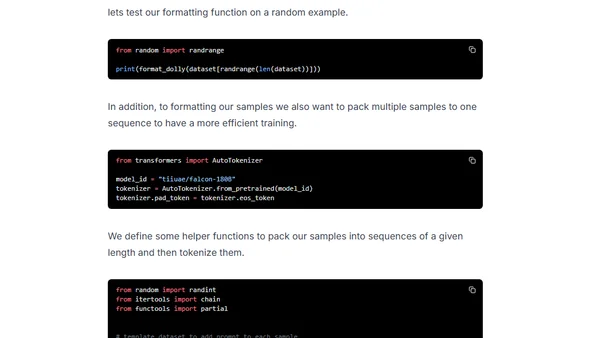

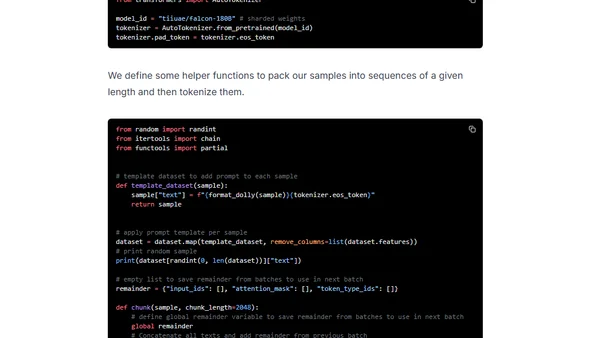

A technical guide on fine-tuning the massive Falcon 180B language model using DeepSpeed ZeRO, LoRA, and Flash Attention for efficient training.

A technical guide on fine-tuning the massive Falcon 180B language model using QLoRA and Flash Attention on Amazon SageMaker.