Efficient Large Language Model training with LoRA and Hugging Face

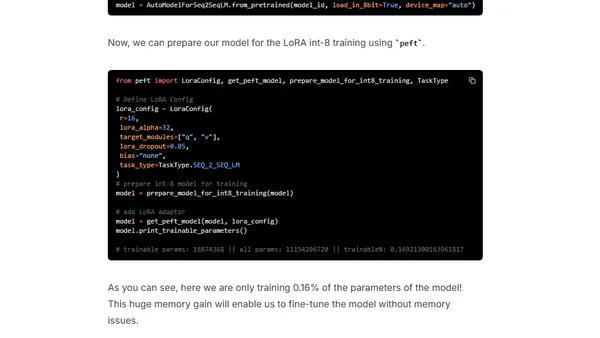

This tutorial demonstrates how to apply Parameter-Efficient Fine-Tuning (PEFT), specifically Low-Rank Adaptation (LoRA), to fine-tune the 11-billion-parameter FLAN-T5 XXL model for summarization on the samsum dataset. It is a step-by-step guide covering environment setup with Hugging Face Transformers, Accelerate, and PEFT; dataset preparation; model training with LoRA and bitsandbytes int-8 quantization; and evaluation and inference.
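To make the LoRA idea concrete, here is a minimal, dependency-free sketch of what a low-rank adapter does to a single linear layer. It is not the tutorial's code and does not use the PEFT library. The helper names (`lora_param_counts`, `lora_forward`), the layer dimensions, and the rank and alpha values are all illustrative assumptions. The sketch keeps the base weight `W` frozen and adds a trainable update `B @ A` scaled by `alpha / rank`, which is the general LoRA formulation.

```python
from typing import List, Tuple

Matrix = List[List[float]]
Vector = List[float]


def lora_param_counts(d_out: int, d_in: int, rank: int) -> Tuple[int, int]:
    """Return (frozen, trainable) parameter counts for one adapted linear layer."""
    frozen = d_out * d_in               # base weight W, not updated during training
    trainable = rank * (d_in + d_out)   # A is (rank x d_in), B is (d_out x rank)
    return frozen, trainable


def matvec(m: Matrix, v: Vector) -> Vector:
    """Plain matrix-vector product."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def lora_forward(w: Matrix, a: Matrix, b: Matrix, x: Vector,
                 alpha: float, rank: int) -> Vector:
    """Compute W x + (alpha / rank) * B (A x)."""
    base = matvec(w, x)
    update = matvec(b, matvec(a, x))
    scale = alpha / rank
    return [y + scale * u for y, u in zip(base, update)]


if __name__ == "__main__":
    # Hypothetical layer size and rank, chosen only for illustration.
    frozen, trainable = lora_param_counts(d_out=4096, d_in=4096, rank=16)
    print(f"frozen={frozen}, trainable={trainable}, "
          f"ratio={trainable / frozen:.4%}")

    w = [[1.0, 0.0], [0.0, 1.0]]   # frozen base weight (identity here)
    a = [[1.0, 1.0]]               # rank-1 down-projection
    b_zero = [[0.0], [0.0]]        # B starts at zero, so the adapter is a no-op
    print(lora_forward(w, a, b_zero, [2.0, 3.0], alpha=2.0, rank=1))
```

Because `B` starts at zero, the adapted layer initially reproduces the base layer exactly, and training only has to learn the small `A` and `B` matrices. That is why the trainable parameter count stays a small fraction of the frozen one.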
