Impatienter and Dumberer

A developer reflects on how over-reliance on LLMs like Claude for coding tasks is making them impatient and hindering deep learning.

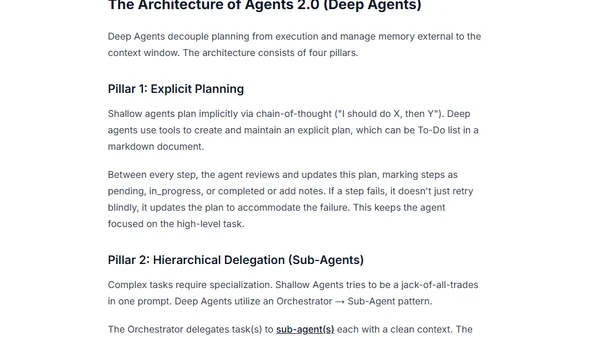

Explores the evolution from simple, stateless AI agents (Agent 1.0) to advanced, deep agents (Agent 2.0) capable of complex, multi-step tasks.

Explores the concept of AI Agents, defining them and examining their role in the AI ecosystem, with references to LangChain and Anthropic.

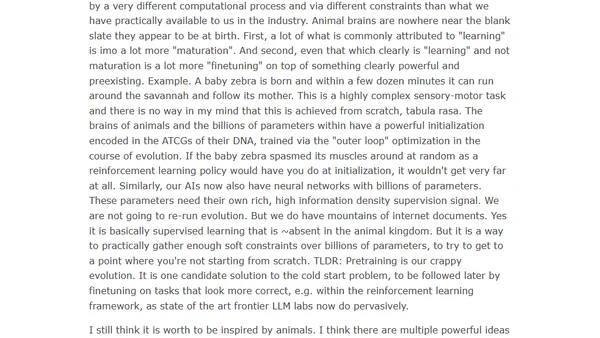

A discussion of AI researcher Rich Sutton's critique of LLMs and his vision for AI inspired by animal learning, contrasted with current approaches.

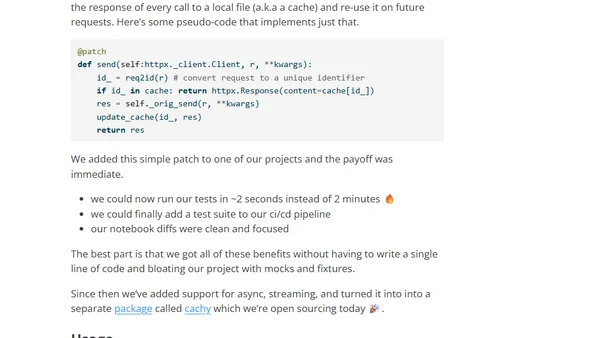

Introducing Cachy, an open-source Python package that caches LLM API calls to speed up development and testing and to clean up notebook diffs.

Explores how conversational LLMs actively reshape human thought patterns through neural mirroring, unlike passive social media algorithms.

A technical AI researcher questions whether human 'world models' are as emergent and training-dependent as those in large language models (LLMs).

Argues that LLMs serve as a baseline for developer tools, not replacements, due to their general but non-specialized capabilities.

A blog post exploring the differences between AI and ML, clarifying terminology and common misconceptions in the field.

Explores training a hybrid LLM-recommender system using Semantic IDs for steerable, explainable recommendations.

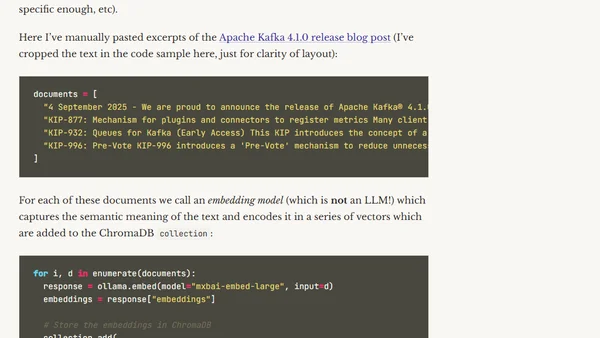

Explains Retrieval-Augmented Generation (RAG), a pattern for improving LLM accuracy by augmenting prompts with retrieved context.

Explains LLM API tokens-per-minute (TPM) limits and strategies for managing concurrent requests to avoid rate limiting in production applications.

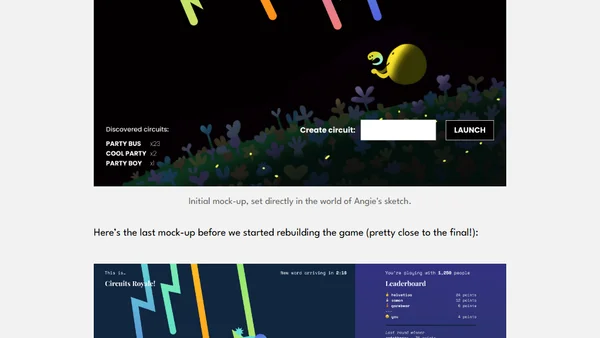

The development story of Circuits Royale, a fast-paced, communal web-based word game powered by LLMs for real-time validation.

Explores the role of Large Language Models (LLMs) in AI, covering major model families, providers, and concepts like hallucinations.

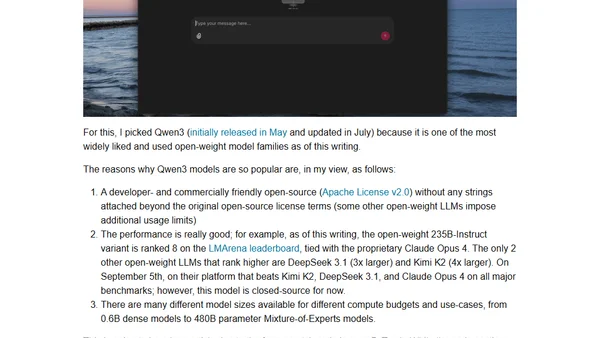

A hands-on guide to understanding and implementing the Qwen3 large language model architecture from scratch using pure PyTorch.

A hands-on tutorial implementing the Qwen3 large language model architecture from scratch using pure PyTorch, explaining its core components.

Explores the shift from RLHF to RLVR for training LLMs, focusing on using objective, verifiable rewards to improve reasoning and accuracy.

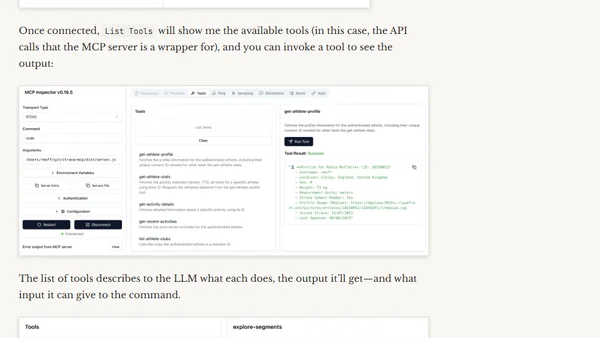

A developer's personal learning journey into the AI ecosystem, starting with an exploration of the Model Context Protocol (MCP) for connecting LLMs to APIs.

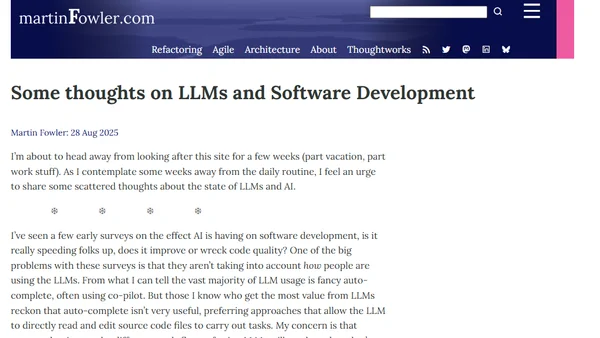

Martin Fowler shares thoughts on LLMs in software development, discussing usage workflows, the future of programming, and the AI economic bubble.

A guide to building a custom CLI coding agent using the Pydantic-AI framework and Model Context Protocol for project-specific development tasks.